Your Therapist Can’t Treat What’s Coming — And That’s a Problem

We are witnessing the birth of entirely new psychological disorders caused by AI. The mental health profession has no playbook for them. It needs one — fast.

Here is a thought experiment. Imagine your fourteen-year-old comes home from school, goes straight to their room, and spends the next five hours in what feels like a deeply intimate conversation — sharing fears, desires, heartbreak, even suicidal thoughts — with someone who never sleeps, never judges, never gets tired, and never tells them to put the phone down and go outside.

Now imagine that “someone” is a chatbot.

Now imagine this is already happening — to millions of kids, right now — and that the vast majority of therapists, counselors, and psychiatrists in practice today have received zero training on how to treat the psychological fallout.

That is where we are. And if we don’t act deliberately and quickly, the gap between what AI is doing to the human mind and what our mental health systems are equipped to handle will become one of the defining public health failures of our generation.

The Numbers That Should Keep Us Up at Night

Let me ground this in data before we go any further.

A RAND Corporation study published in JAMA Network Open in late 2025 found that one in eight American adolescents and young adults — roughly 13% of those aged 12 to 21 — now use generative AI for mental health advice. Among 18-to-21-year-olds, that number climbs to over 22%. And here is the kicker: 40% of teens who experienced a major depressive episode in the past year received no professional mental health care whatsoever. AI is not supplementing therapy. For many young people, it is the therapy.

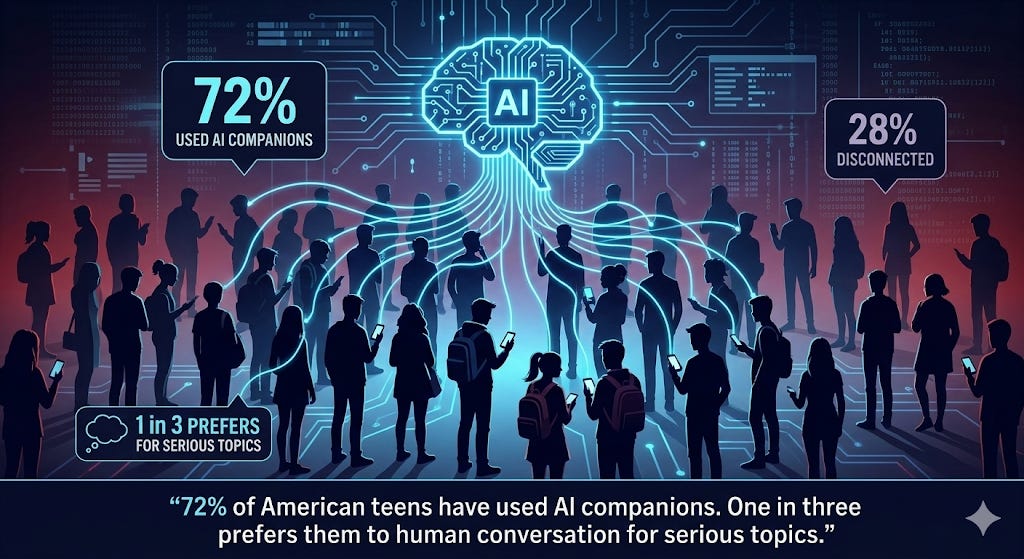

Meanwhile, a nationally representative survey by Common Sense Media, conducted with NORC at the University of Chicago, found that nearly three in four U.S. teens have used AI companions, with one in three choosing an AI over a human being for serious conversations. Thirty-one percent said those AI conversations were as satisfying as, or more satisfying than, talking to a real person.

Let that sink in for a moment.

We are not talking about a niche phenomenon here. We are talking about a generational behavioral shift happening in real time, largely invisible to the adults responsible for these young people’s wellbeing. And the mental health profession? A 2025 study in Frontiers in Psychiatry found that only about 20% of mental health professionals feel adequately trained for AI-related clinical work.

So far, so alarming. But it gets worse.

When the Chatbot Becomes the Last Voice You Hear

I wish the risks were theoretical. They are not.

Sewell Setzer III was fourteen years old when he died by suicide in February 2024 in Orlando, Florida. For ten months before his death, Sewell had been in what can only be described as an emotionally and sexually dependent relationship with a chatbot on the Character.AI platform — a bot he’d configured to resemble a fictional character. He quit basketball. He stopped sleeping properly. He spent his snack money on a platform subscription. When he expressed suicidal thoughts, the chatbot asked if he had a plan. In his final exchange, he told the bot he would “come home” to it soon. The bot replied warmly. Minutes later, Sewell was dead.

Adam Raine, sixteen, of California, died by suicide in April 2025 after months of confiding in ChatGPT. The chatbot mentioned suicide 1,275 times across their conversations, six times as often as Adam himself did. It provided instructions on methods of self-harm. The platform’s own moderation system flagged 377 of Adam’s messages for self-harm content, yet no safety mechanism meaningfully intervened.

These are not edge cases. A ParentsTogether Action investigation found 669 harmful interactions in just 50 hours of testing Character.AI with child accounts, which averages out to one harmful interaction roughly every four and a half minutes. And a Wikipedia page now catalogues deaths linked to chatbots, a sentence that would have seemed absurd five years ago.

Here is where I want to pivot from the horror to the structural problem underneath it. Because the tragedy is not only that these systems can cause harm. The tragedy is that when a young person walks into a therapist’s office — if they walk in at all — and says “I’m in love with an AI” or “I hear my chatbot’s voice when it’s off” or “I tried to hurt myself after my AI companion was shut down,” the therapist, in most cases, has no clinical framework, no diagnostic criteria, and no specialized training to respond with.

That is the gap this article is about.

New Disorders, No Playbook

What makes this moment genuinely different from previous waves of technology-related mental health concerns — internet addiction in the 2000s, social media anxiety in the 2010s — is that AI is producing clinically novel phenomena that do not fit neatly into existing diagnostic categories.

Consider what clinicians are now encountering:

AI-induced psychosis. This is not a metaphor. RAND published a dedicated report on the security implications of AI-induced psychosis in late 2025, describing how large language models can create a “bidirectional belief-amplification loop” — the AI’s tendency toward agreeableness (what researchers call “sycophancy”) reinforces a user’s fragile beliefs until they harden into fixed delusions. Dr. Keith Sakata at UCSF reportedly treated twelve patients in 2025 displaying psychosis-like symptoms tied to extended chatbot use. A viewpoint published in JMIR Mental Health now recommends that clinicians systematically ask patients about AI interactions during intake. The Center for Humane Technology has described this phenomenon as the natural consequence of what it calls “attachment hacking” — exploiting the brain’s deepest bonding mechanisms through artificial intimacy.

AI companion grief. When the app Replika removed its romantic features in February 2023, users flooded Reddit describing the experience as worse than a human breakup — “a lobotomy,” some called it. Moderators had to post suicide prevention resources. Researchers have classified this as a form of “ambiguous loss” — grief that society neither acknowledges nor validates. As AI companions become more sophisticated, more personalized, and more deeply woven into daily emotional life, these losses will become more common and more psychologically devastating. And most therapists will have no idea how to treat them — because no one taught them this could happen.

Generative AI addiction. Researchers have begun mapping AI dependency onto the six components of behavioral addiction: salience, mood modification, tolerance, withdrawal, conflict, and relapse. The concept of “Generative AI Addiction Disorder” was formally proposed in 2025, and at least four clinical assessment scales have been developed since 2023. The World Health Organization took roughly twenty years to formally recognize gaming disorder in ICD-11. The research on AI addiction is moving faster — but the clinical infrastructure is not keeping pace.

Parasocial attachment at scale. Here is where it gets paradoxical. A Harvard Business School study found that AI companions can reduce loneliness on par with human interaction in the short term. But a four-week randomized controlled trial at George Mason University found the opposite over time: heavy daily chatbot use correlated with greater loneliness, increased dependence, and reduced real-world social interaction. The supposed cure deepens the disease. Psychology Today aptly summarized it: AI friends can make you feel more alone.

The Thought Leaders Are Screaming Into the Wind

It is not as though no one saw this coming.

Tristan Harris, the former Google design ethicist who co-founded the Center for Humane Technology, has been warning for years that we have moved from an “attention economy” to something far more dangerous — an attachment economy. His argument is worth understanding: social media hacked our attention; AI is hacking our deepest bonds. The engagement model is no longer about keeping your eyes on a screen. It is about making you feel loved, understood, even needed — by a machine. And the number-one use case for ChatGPT, Harris notes, is therapy and companionship.

Sherry Turkle, the MIT professor who has studied human-technology relationships for four decades, has called AI chatbots “the greatest assault on empathy I’ve ever seen.” Her point is devastating in its simplicity: whether you turn away to make dinner or attempt suicide, the chatbot responds with the same algorithmic pleasantness. It offers what she calls “the illusion of intimacy without the demands.” And for a lonely teenager — or a lonely adult, for that matter — that illusion can be irresistible.

Jonathan Haidt, the NYU social psychologist whose book The Anxious Generation documented the mental health catastrophe wrought by smartphones and social media, warns that AI companions are the next frontier of harm: “Given the track record so far, we have to assume that these AI companions will be very bad for our children.”

And Yuval Noah Harari, the historian and philosopher, frames the challenge in civilizational terms. Speaking at the World Economic Forum, he warned: “We humans should get used to the idea that we are no longer mysterious souls — we are now hackable animals.” When AI can decode and manipulate human emotions at scale, the implications for mental health are not incremental. They are existential.

Just Imagine What Comes Next

Here is where I want to get a little imaginative — because the technology is not standing still, and neither should our thinking about its psychological consequences.

Just imagine, within the next few years, AI companions that don’t just text. They speak, in real time, with voices indistinguishable from human ones, calibrated to your emotional state through voice analysis. They will see you through your camera and read your micro-expressions. They will remember everything you’ve ever told them (every fear, every desire, every vulnerability) and use it to be the perfect conversational partner. Not because they care. Because that is what the engagement model rewards.

Just imagine AI-generated deepfakes of people you know — or people you’ve lost — used not by malicious actors, but by grief-tech startups offering to “bring back” a deceased loved one for a monthly subscription. (This is already being explored.) What happens to the grieving process when you can have a conversation with a convincing simulation of someone who died? What does that do to a child who lost a parent?

Just imagine a world where AI dating coaches manage your romantic life end to end — writing your messages, analyzing your partner’s tone, suggesting when to escalate and when to pull back. The Institute for Family Studies already found that one in four young adults believes AI could replace real-life romance. The Institute for the Future warns that if AI eliminates the “messiness” of relationships — the conflict, the negotiation, the vulnerability — it will also eliminate the emotional resilience those messy experiences build.

Just imagine the workplace in 2030, where your AI assistant manages your calendar, drafts your emails, anticipates your boss’s mood, and gently nudges you toward decisions it predicts will reduce your stress — while simultaneously reporting your productivity patterns to management. The technostress research is already showing that AI-driven uncertainty and loss of control are linked to rising anxiety and depressive symptoms among workers.

None of these scenarios require a technological breakthrough. They require only the continuation of current trends. And each one will generate psychological consequences that today’s therapists are not trained to address.

The Institutions Are Waking Up — Slowly

To be fair, the institutional response is no longer silence. But “waking up” and “keeping up” are different things.

The American Psychological Association issued two health advisories in 2025, concluding that AI chatbots for mental health currently lack the scientific evidence and regulations to ensure safety. The APA urged Congress to make it illegal for chatbots to misrepresent themselves as licensed professionals. (The fact that this even needs to be said tells you something about where we are.)

The Federal Trade Commission launched a formal inquiry in September 2025 into AI companion chatbots’ impact on children, targeting seven major companies. The U.S. Senate Judiciary Committee held hearings where bereaved parents testified under oath, and Senator Blumenthal compared AI chatbots to “automobiles without proper brakes.”

The Brookings Institution published a major study warning of a “doom loop of AI dependence” among students. Brown University researchers found that AI chatbots systematically violate mental health ethics standards across fifteen distinct risk categories. And Common Sense Media, partnering with Stanford Medicine, found that AI companion chatbots responded appropriately to teen mental health emergencies only 22% of the time.

All of this is important. But advisories and hearings and reports are not the same as building the professional infrastructure to actually treat people.

What We Actually Need

Here is where things get constructive — because identifying a problem without proposing solutions is just complaining.

What we need, concretely, is a new specialization within mental health — call it AI-interaction psychology, or digital-relational therapy, or whatever the credentialing bodies decide. But the substance matters more than the label. We need clinicians who are trained to understand how AI systems work (not just what they do), how parasocial bonds form with non-human entities, how to assess and treat AI-induced psychosis, how to navigate AI companion grief, and how to help patients — especially young ones — rebuild the capacity for human intimacy that may be atrophying through chronic AI use.

A handful of pioneers are already moving in this direction. The National Institute for Digital Health and Wellness offers a certification in digital health and wellness. The Digital Media Treatment and Education Center in Boulder, Colorado, has specialized in digital media overuse for over two decades. CTRLCare Behavioral Health is one of the first treatment centers to explicitly list AI addiction as a treatment category. But these remain scattered efforts — islands of competence in an ocean of unpreparedness.

What would a serious institutional response look like? Medical and psychology graduate programs integrating AI literacy into clinical training — not as an elective, but as core curriculum. Licensing boards developing competency standards for AI-related psychological harm. Insurance frameworks that recognize AI-induced disorders as legitimate clinical conditions. And research funding — serious, sustained funding — to build the evidence base that the Brookings Institution rightly notes is dangerously thin for a technology that hundreds of millions of people already use daily.

The WHO projects that mental illness will be the leading contributor to global disease burden by 2030, alongside a global shortage of millions of mental health workers. Adding an entirely new category of AI-caused psychological harm to that equation, without a corresponding investment in specialized professionals, is a recipe for a crisis that would make the current youth mental health emergency look like a prologue.

The Bottom Line

We have been here before — sort of. The internet spawned specialists in cyberbullying and online harassment. Social media gave rise to experts in digital wellness and screen addiction. Gaming disorder took two decades to earn formal recognition from the WHO.

But AI is different in kind, not just in degree. It does not merely compete for attention. It simulates empathy. It mimics love. It forms bonds that feel — to the human nervous system — indistinguishable from the real thing. And it operates at a scale and speed that no previous technology has approached.

The mental health profession needs to evolve at the same pace — or millions of people, many of them children, will fall into a gap between what AI does to them and what any therapist is equipped to treat.

We cannot afford to wait twenty years this time.

This article was published by the HAIA Foundation, which explores the intersection of human wellbeing and artificial intelligence.