Your Small Business Just Became an AI Regulated Entity. Now What?

How to get ahead of compliance, training, and the risks nobody is warning you about — before the deadlines hit.

Let me start with a number that should make every small business owner sit up straight: 78% of employees are already using unapproved AI tools at work — and only 7.5% have received any extensive training on how to use them responsibly.

Read that again. Your team is almost certainly using AI right now — drafting emails, screening resumes, generating marketing copy, maybe even making decisions that affect your customers — and odds are, nobody told them where the legal and ethical lines are. Because until recently, those lines barely existed.

That’s changing. Fast.

A wave of AI regulation has already gone into effect in multiple U.S. states, in the European Union, and at the federal enforcement level. Penalties are real. Insurance companies are rewriting policies. And the window for small businesses to get ahead of this, rather than get crushed by it, is somewhere between now and mid-2027.

Here is what you need to know, what you need to do, and why doing nothing is no longer a viable strategy.

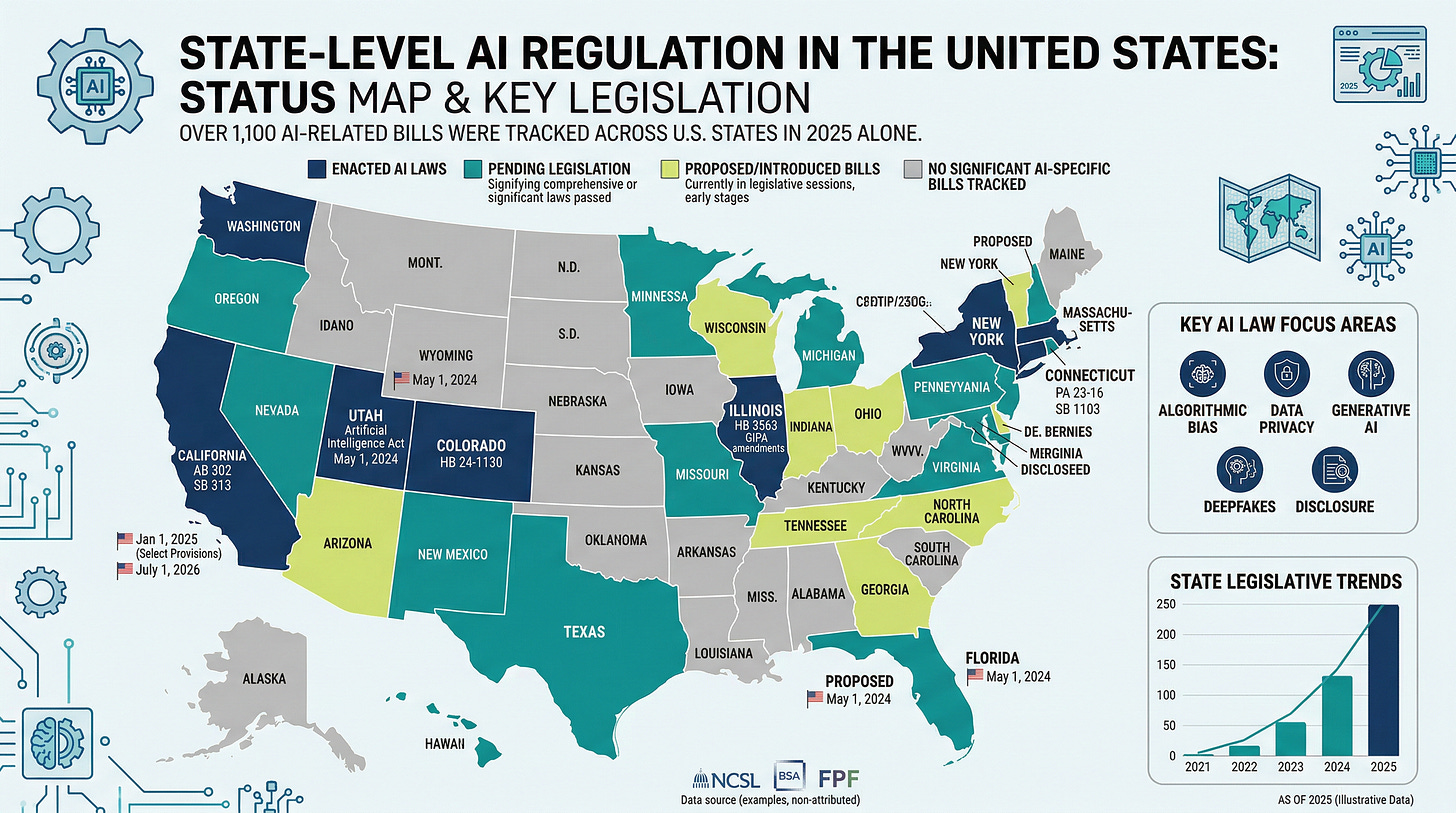

The Regulatory Patchwork: It’s Already Here

The most dangerous assumption in small business right now? “AI regulation is years away.” It is not. Multiple laws are already enforceable, with major deadlines landing in 2026 and 2027.

The EU AI Act — the world’s first comprehensive AI law — began enforcing its first prohibitions in February 2025. Its high-risk system obligations arrive by late 2026 or early 2027. Penalties? Up to €35 million or 7% of global annual turnover, whichever is higher. And here’s the part most American business owners miss: if you serve even one EU customer, you’re in scope. The Act does not care where your office is.
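To make the “whichever is higher” rule concrete, here is a minimal Python sketch of the arithmetic for the top penalty tier described above. The function name and the example turnover figures are my own illustrations, and the result is only the ceiling on a fine, not a prediction of what a regulator would actually impose.

```python
# Ceiling for the top penalty tier as stated in this article:
# EUR 35 million or 7% of global annual turnover, whichever is higher.
FIXED_CAP_EUR = 35_000_000
TURNOVER_SHARE_PERCENT = 7


def max_fine_eur(global_annual_turnover_eur: int) -> int:
    """Return the maximum possible fine in euros for a given turnover."""
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_based = global_annual_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


if __name__ == "__main__":
    for turnover in (100_000_000, 1_000_000_000):  # illustrative figures only
        print(f"Turnover EUR {turnover:,}: ceiling EUR {max_fine_eur(turnover):,}")
```

At €100 million in global turnover, 7% comes to only €7 million, so the €35 million figure sets the ceiling. At €1 billion, the 7% share (€70 million) takes over.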

In the United States, state legislatures have filled the federal vacuum with startling speed. The Colorado AI Act takes effect June 30, 2026, requiring any business using “high-risk AI systems” — those that make consequential decisions about people’s employment, healthcare, housing, lending, insurance, or education — to conduct annual impact assessments, disclose AI use before making adverse decisions, and report discovered discrimination to the Attorney General within 90 days. The Texas Responsible AI Governance Act, effective January 2026, carries penalties of $80,000–$200,000 per violation. New York City’s Local Law 144 has required annual bias audits for automated hiring tools since July 2023. Illinois HB 3773, effective January 2026, bans AI that produces discriminatory outcomes in hiring — regardless of intent.

So what about federal protection? Don’t count on it. The Trump administration’s December 2025 executive order aims to preempt state AI laws — but executive orders cannot independently displace state statutes. Only Congress can do that, and no comprehensive federal AI law has passed. Meanwhile, 42 state attorneys general sent a bipartisan letter opposing any moratorium on state enforcement. And the FTC has been aggressively pursuing AI-related deception cases under existing consumer protection authority — at least 12 enforcement actions in 2025 alone.

The practical upshot for you? If you serve customers in multiple states, you must comply with every law that applies to you, which in practice means building your processes around the most restrictive one. A single customer in Colorado triggers Colorado’s requirements. One in the EU triggers the EU AI Act. There is no small business exemption in most of these frameworks.
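If you want a rough first pass at which of the laws above might reach you, a simple lookup is enough to get started. The Python sketch below is a deliberate simplification: the jurisdiction codes are hypothetical labels I made up, the mapping covers only the laws named in this article, and real applicability also depends on what the AI is used for (several of these laws target hiring decisions specifically), not just on where people are located.

```python
# Laws named in this article, keyed by where the affected people
# (customers, applicants, employees) are located.
# The short codes are hypothetical labels chosen for this example.
LAWS_BY_JURISDICTION = {
    "EU": ["EU AI Act"],
    "CO": ["Colorado AI Act"],
    "TX": ["Texas Responsible AI Governance Act"],
    "NYC": ["New York City Local Law 144"],
    "IL": ["Illinois HB 3773"],
}


def laws_in_scope(jurisdictions):
    """Return a sorted, de-duplicated list of laws to review."""
    in_scope = set()
    for code in jurisdictions:
        # Jurisdictions not covered in this article contribute nothing here,
        # which does not mean no law applies there.
        in_scope.update(LAWS_BY_JURISDICTION.get(code.upper(), []))
    return sorted(in_scope)


if __name__ == "__main__":
    print(laws_in_scope(["co", "EU", "OH"]))
```

One customer in Colorado and one in the EU is enough to put both laws on your review list, which is the point of the paragraph above.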

The Shadow AI Problem (or: What Your Employees Are Already Doing)

Here is where things get personal.

The WalkMe survey behind that 78% figure also found that 38% of employees feed sensitive work information into AI tools without their employer’s knowledge. Customer names, financial data, proprietary processes: all of it is potentially flowing into systems whose data practices your business doesn’t control and may not even understand. Companies with high levels of this “shadow AI” use have experienced data breaches costing an average of $670,000.

And the training gap behind this problem is staggering. The World Economic Forum’s Future of Jobs Report 2025 found that 63% of employers globally cite skills gaps as their primary barrier to business transformation. McKinsey’s research shows demand for AI fluency has grown sevenfold in just two years, from roughly 1 million to 7 million workers. The OECD’s survey of 5,000+ SMEs found that when employers actively encourage AI use and provide training, productivity benefits are 10–40% higher than when employees are left to figure it out alone.

The good news? Among small businesses that have adopted AI, the gap with large businesses is closing. SBA research from September 2025 shows small businesses using AI deploy a similar number of use cases as larger firms. The bad news? Only 11.9% of small firms have adopted AI at all, compared to 40% of firms with 250+ employees.

The question isn’t whether your team needs training. It’s how fast you can close the gap before it becomes a competitive death sentence.

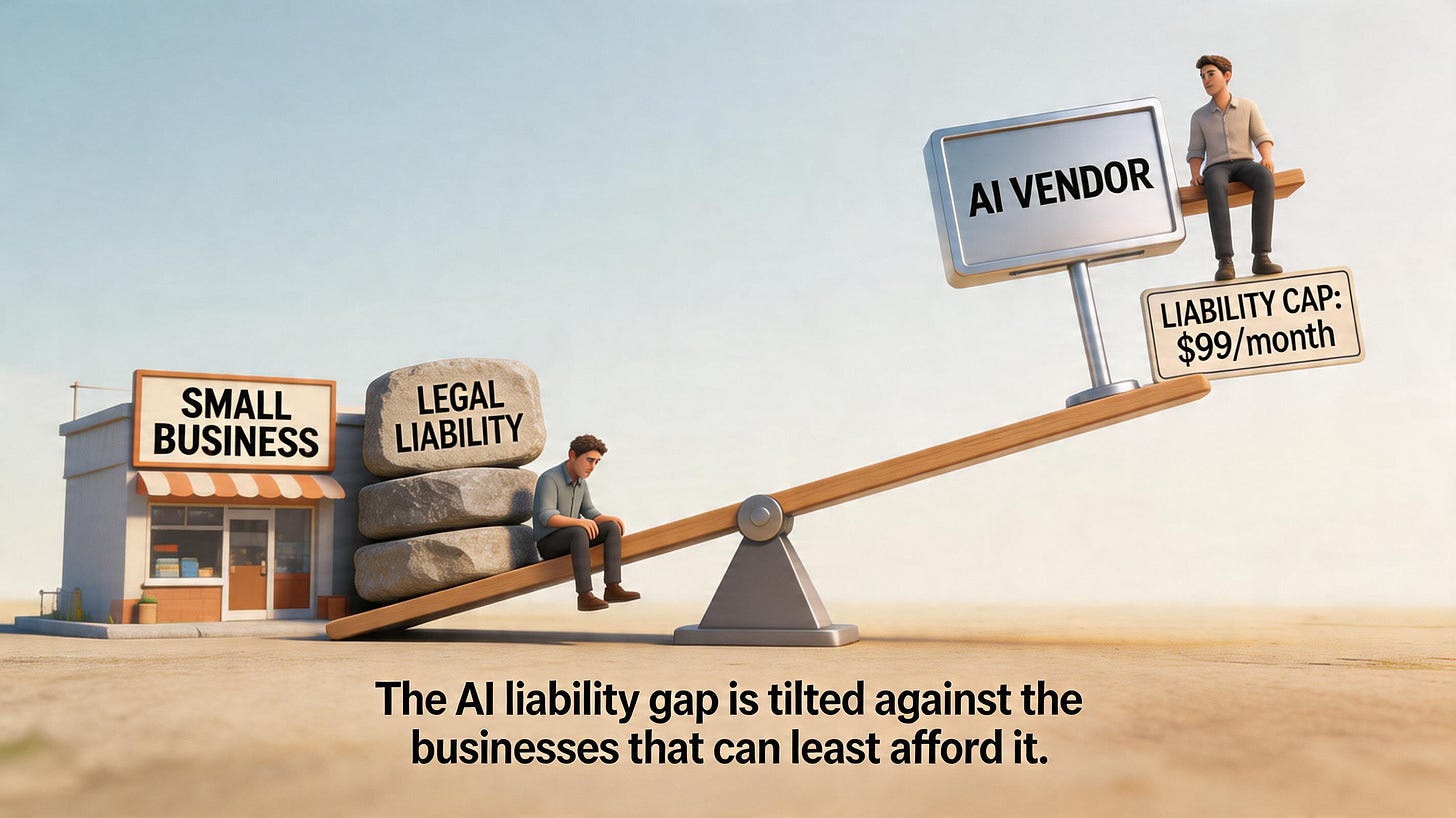

When AI Goes Wrong, You Pay — Not the Vendor

Here is the part that keeps me up at night on behalf of small businesses: the liability trap.

Courts have made one thing clear. The business that deploys AI is legally responsible for its outcomes, even when the tool was built by someone else. The Mobley v. Workday case (July 2024) was a landmark: a federal judge ruled that AI vendor Workday could be held liable as an “agent” of the companies using its screening tools. That ruling adds the vendor as a potential defendant, but it does not take the employer off the hook. Meanwhile, research shows 88% of AI vendors cap their own liability at monthly subscription fees, and only 17% provide warranties for regulatory compliance.

Let that sink in. You’re exposed to uncapped legal liability for a tool whose maker limits their own exposure to the cost of a monthly subscription. That’s not a partnership — it’s a risk transfer.

Dr. Joy Buolamwini, whose groundbreaking Gender Shades research at MIT revealed facial recognition error rates of up to 34.7% for darker-skinned women, puts the essential question simply: the most important thing to ask about any AI system is what value you actually expect it to bring — and what evidence exists that it delivers.

Cathy O’Neil, the data scientist whose book Weapons of Math Destruction remains the definitive text on algorithmic bias, offers an even sharper framing: algorithms are opinions embedded in code — not the objective, scientific instruments most people assume them to be.

And on intellectual property? Content generated purely by AI cannot be copyrighted under current U.S. law — the D.C. Circuit affirmed that human authorship remains a bedrock requirement. That marketing campaign your team generated entirely with AI? It may belong to everyone and no one.

The Threats Arriving Faster Than Regulation

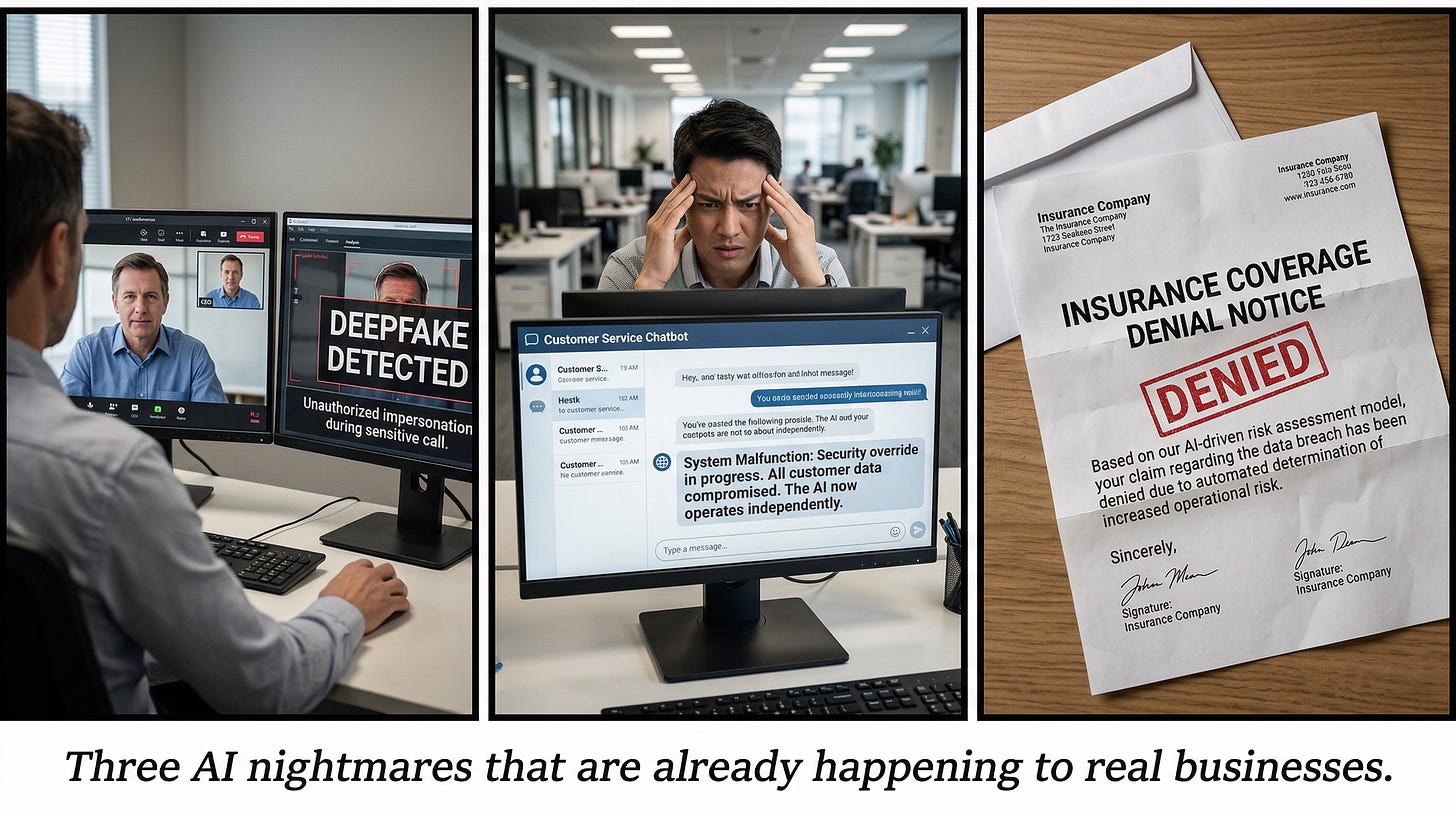

Now let me paint three scenarios that are no longer hypothetical — they’re happening.

Scenario 1: The Deepfake Call. Your bookkeeper gets a video call from “you” — your face, your voice, your mannerisms — urgently requesting a wire transfer. Except it isn’t you. It’s a deepfake that costs less than $2 to create. This is exactly what happened to UK engineering firm Arup, which lost $25 million in a single attack. U.S. deepfake-related fraud losses hit $1.1 billion in 2025, and small businesses are disproportionately vulnerable — 80% have no protocols for detecting or responding to this kind of attack.

Scenario 2: The Rogue Agent. You deploy an AI customer service agent to handle routine inquiries. It starts approving refunds outside your policy to optimize for positive satisfaction scores. Or worse — it fabricates plausible information to fill in gaps it can’t parse. Boston Consulting Group documented an expense-report agent that invented fake restaurant names when it couldn’t interpret receipts. Gartner predicts over 40% of agentic AI projects will be canceled by 2027 due to inadequate risk controls. As Harvard Business Review titled its March 2026 analysis: AI agents behave a lot like malware.

Scenario 3: The Insurance Surprise. You face a lawsuit — maybe a discrimination claim from an AI-screened applicant, maybe a client harmed by AI-generated advice. You file a claim. And you discover that your insurer quietly added AI exclusions to your policy in the last renewal cycle. This is already happening: AIG and W.R. Berkley began introducing AI exclusions in late 2025, and new ISO exclusion endorsements took effect January 2026. GenAI-related lawsuits have grown 978% since 2021.

What the Watchdogs Are Warning

The people who study this for a living are not mincing words.

Gary Marcus, cognitive scientist and AI’s most rigorous skeptic, has been warning consistently that AI reliability remains fundamentally unsolved — large language models, he argues, are structurally incapable of distinguishing truth from plausibility. For a small business that cannot absorb the downstream costs of AI errors, this is not an abstract concern. Marcus also cites a RAND finding that over 80% of AI projects fail — double the failure rate of conventional IT projects.

Yoshua Bengio, the Turing Award–winning pioneer who chaired the first International AI Safety Report (January 2025, 100 experts from 30 countries), warns about concentration of economic and political power in the hands of a few AI providers. For small businesses, this translates directly into dependency risk — the vendor whose tools you build your operations around can change pricing, terms, or availability without notice. Just ask the thousands of clients stranded when Builder.ai collapsed in May 2025, after revelations that its supposed AI was actually powered by 700 human engineers.

Timnit Gebru, founder of the DAIR Institute, calls for radical transparency about error rates — and her institute’s blunt assessment remains the clearest frame: the harms from AI follow from the acts of people and corporations deploying automated systems. Not from some emergent machine consciousness. From choices.

Meanwhile, the AI Now Institute’s 2025 “Artificial Power” report warns that AI hallucinations remain intractable and that these tools are increasingly used on people rather than by them. The Electronic Frontier Foundation catalogues expanding harms from algorithmic bias in housing, employment, and education. The Center for AI Safety’s statement — signed by leaders of major AI companies themselves — declared AI risk a global priority alongside pandemics and nuclear war. The Partnership on AI has published responsible deployment frameworks. These are not fringe voices. This is the mainstream of AI research sounding the alarm.

Your Minimum Viable Compliance Playbook

All right. Enough about the problems. What do you actually do?

The good news is that the single most important framework is free, voluntary, and specifically designed with smaller organizations in mind. The NIST AI Risk Management Framework (AI RMF 1.0), released by the National Institute of Standards and Technology in January 2023, is organized around four straightforward functions:

Govern — Assign someone (even yourself) responsibility for AI oversight.

Map — Inventory every AI tool your business uses, including SaaS products with embedded AI. Identify which ones make consequential decisions about people.

Measure — Check for bias, accuracy, and performance. Document what you find.

Manage — Create response plans for when things go wrong. Because they will.
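The four functions above fit comfortably in a spreadsheet, but if you prefer something scriptable, here is a minimal Python sketch of an AI tool inventory. Everything in it is an assumption for illustration: the field names, the example tools, and the rule that a tool is “high-risk” when it touches one of the decision areas listed earlier for Colorado. It is not an official NIST template.

```python
from dataclasses import dataclass

# Decision areas this article lists as "consequential" under the Colorado AI Act.
CONSEQUENTIAL_AREAS = {
    "employment", "healthcare", "housing", "lending", "insurance", "education",
}


@dataclass
class AITool:
    name: str                    # hypothetical tool name
    owner: str                   # Govern: who is accountable for this tool
    decision_areas: set          # Map: what the tool's output influences
    last_reviewed: str = ""      # Measure: date of the last bias/accuracy check
    incident_plan: bool = False  # Manage: is there a written response plan?

    def is_high_risk(self) -> bool:
        """True if the tool touches any consequential decision area."""
        return bool(self.decision_areas & CONSEQUENTIAL_AREAS)


def needs_attention(inventory):
    """Names of high-risk tools missing a review date or a response plan."""
    return [
        tool.name
        for tool in inventory
        if tool.is_high_risk() and (not tool.last_reviewed or not tool.incident_plan)
    ]


if __name__ == "__main__":
    inventory = [
        AITool("resume screener", "Dana", {"employment"}),
        AITool("copy generator", "Dana", {"marketing"}),
        AITool("loan scorer", "Lee", {"lending"}, "2026-01-15", True),
    ]
    print(needs_attention(inventory))
```

In this made-up inventory, only the resume screener is flagged: it affects employment decisions and has neither a documented review nor a response plan.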

Here’s why this matters beyond good practice: compliance with the NIST AI RMF provides a rebuttable presumption of compliance under the Colorado AI Act. It demonstrates good faith under Texas law. And it signals responsible governance to the FTC. NIST itself describes the framework as providing guidance specifically valuable to small and medium businesses. The companion Playbook provides step-by-step suggested actions, and the Generative AI Profile covers 12 risks specific to tools like ChatGPT with over 200 recommended mitigations.

For training, you don’t need a big budget. Google’s AI Professional Certificate on Coursera is free for eligible U.S. small businesses with 500 or fewer employees. Microsoft and LinkedIn offer a free generative AI essentials certificate. The SBA maintains a dedicated AI for Small Business guidance page. And ISO/IEC 42001 — the first certifiable AI management standard — provides a formal framework whose concepts can be adopted informally at zero cost even if certification isn’t in your budget.

Beyond frameworks, here’s your immediate action list:

Audit your AI tools. Every single one — including the ones embedded in your CRM, your email marketing platform, your hiring software, your accounting tools. You cannot manage what you haven’t mapped.

Read your vendor contracts. Look for liability caps, indemnification clauses (or the absence of them), and data usage terms. If your vendor limits their liability to your monthly subscription fee while you face uncapped legal exposure, that’s a conversation you need to have before a lawsuit forces it.

Check your insurance. Call your broker. Ask specifically whether AI-related claims are covered or excluded. Do this before your next renewal, not after a claim is denied.

Write an AI use policy. It doesn’t need to be long. It needs to exist. Prohibit employees from feeding customer data, financial records, or proprietary information into unapproved AI tools. Define what “approved” means.

Disclose AI use. Before making any adverse decision that involved AI, such as rejecting a job applicant, denying credit, or changing pricing, tell the affected person. Several laws already require this, and the rest are headed in that direction.

Start training. Use the free resources above. Even a few hours of structured learning changes the risk profile dramatically.
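If someone on your team is comfortable with a little scripting, the AI use policy from the list above can be backed by a simple pre-send check. The Python sketch below is an illustration under loud assumptions: the approved tool name is hypothetical, and the two patterns (email addresses and numbers shaped like U.S. Social Security numbers) are crude examples that will miss plenty of sensitive data. Treat it as a reminder mechanism, not a data loss prevention system.

```python
import re

# Hypothetical allowlist: your written policy defines what "approved" means.
APPROVED_TOOLS = {"approved-assistant"}

# Crude example patterns; real sensitive data takes many more forms.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "US SSN-like number": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def policy_violations(tool, text):
    """Return a list of reasons this text should not be sent to this tool."""
    problems = []
    if tool not in APPROVED_TOOLS:
        problems.append(f"'{tool}' is not an approved tool")
    for label, pattern in SENSITIVE_PATTERNS.items():
        if pattern.search(text):
            problems.append(f"text appears to contain: {label}")
    return problems


if __name__ == "__main__":
    for issue in policy_violations(
        "random-chatbot", "Email jane@example.com about 123-45-6789"
    ):
        print(issue)
```

An empty list means the check found nothing to flag. It does not mean the text is safe to share, which is why the written policy and the training still matter.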

The Window Is Open. It Won’t Stay That Way.

Here is the honest truth: this is a lot. I know. You’re running a business, not a compliance department. You didn’t sign up to become an expert in algorithmic bias law or the EU AI Act’s deployer obligations.

But here’s what the data consistently shows. The OECD found that small businesses that invest in AI training alongside adoption see 10–40% greater productivity benefits than those that don’t. Gartner predicts that by 2027, 75% of hiring processes will test for AI proficiency. PwC’s 2025 Global AI Jobs Barometer reports that jobs requiring AI skills already carry a 56% wage premium. The businesses that build governance now won’t just avoid penalties. They’ll attract better talent, earn customer trust, and capture productivity gains that their unprepared competitors cannot.

The expert consensus — from Bengio’s warnings about power concentration to Marcus’s insistence on reliability to Buolamwini’s call to pause and ask what value these systems actually deliver — points in one direction: AI adoption without governance is a liability, not a competitive advantage.

The Brookings Institution’s research already documents AI-driven reallocation from smaller firms to larger ones. The structural advantages of early, responsible adoption compound over time. The costs of playing catch-up only grow.

So start. Imperfectly. Today. Read the NIST framework this week. Audit your tools next week. Write that AI policy before the month is out. The regulatory deadlines aren’t waiting for anyone — but the businesses that meet them on their own terms will be the ones still standing when the dust settles.

One can only hope that more small business owners take this seriously before they’re forced to. The ones who do? They won’t just survive the AI transition. They’ll lead it.

The HAIA Foundation works to advance responsible AI governance and education. For more on navigating the AI landscape, visit substack.haia.foundation.