When Every Law Is Perfectly Enforced, We Are All Criminals

The laws of Western democracies were written with a quiet assumption baked into their DNA: that enforcement would always be imperfect. AI is about to shatter that assumption — and we are not ready.

Let me start with something that might sound counterintuitive.

Selective enforcement of the law is not a bug. It is a feature.

For centuries, Western legal systems have operated under a quiet bargain between the state and its citizens. Legislators write laws broadly — sometimes very broadly — giving the executive branch maximum tools to go after the worst offenders. But everyone involved understands (even if no one says it out loud) that these laws will never be enforced against everyone, all of the time. Police officers let you off with a warning. Prosecutors exercise discretion. Tax authorities audit a fraction of returns. The sheer impossibility of catching every violation acts as an invisible safety valve, softening the hard edges of statutes that were written to be deliberately over-inclusive.

This is not some happy accident. As the late Harvard Law professor William Stuntz documented in his landmark paper on criminal law’s expansion, the system works like a one-way ratchet: legislators constantly broaden what counts as a crime, while prosecutors accumulate discretion over whom to actually pursue. The law on the books and the law in practice were designed to diverge. Harvey Silverglate put it more bluntly in Three Felonies a Day: the average American professional unknowingly commits multiple federal crimes daily. You and I are walking around right now, technically in violation of statutes we have never heard of — and that is only tolerable because no one is watching closely enough to notice.

So far, so good. Here is where things get terrifying.

The machines are watching now

What happens when someone is watching — not someone, actually, but something — and it never blinks, never gets tired, never exercises mercy, and never looks the other way?

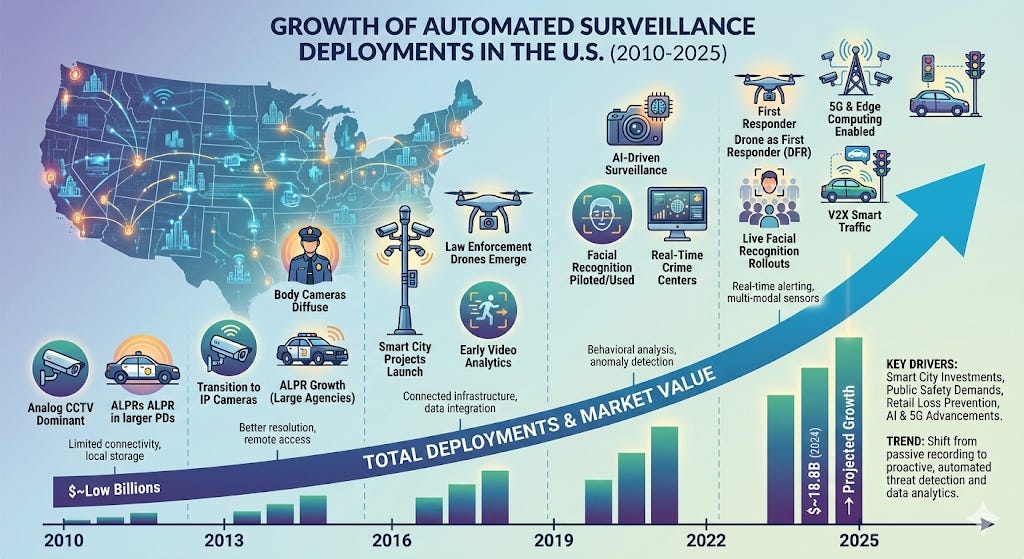

AI-powered enforcement is no longer theoretical. It is here. Speed cameras that ticket every car going 1 mph over the limit. Algorithms that flag every tax return with a suspicious deduction. Facial recognition that identifies you walking down a public street. Content ID bots that take down your YouTube video because three seconds of background music matched a copyrighted track.

The Electronic Frontier Foundation tracks over 11,700 surveillance technology deployments by U.S. police agencies. The Brennan Center for Justice has documented how AI-enabled data fusion pulls together airline records, border crossings, and social media into detailed portraits of people not suspected of any crime. And Lawfare’s analysis offered this chilling observation: at the extreme end, a single person controlling an automated enforcement system could achieve dominance over a population without needing any human allies at all.

We have already seen what happens — and it is not pretty

If you think this is speculative, let me walk you through what has already gone wrong.

The Netherlands, 2021. The Dutch Tax and Customs Administration deployed an algorithm to detect childcare benefit fraud that flagged families partly based on “foreign-sounding names” and dual nationality. At least 35,000 families were falsely accused and driven into poverty. Over 2,000 children were removed from their homes. The entire Dutch cabinet resigned. Amnesty International titled its report “Xenophobic Machines.”

Australia, 2016–2019. The government’s “Robodebt” scheme used an automated system to compare welfare payments against averaged income data, a mathematically flawed approach. It issued debt notices to roughly 400,000 people with an approximate 80% false positive rate. The Federal Court declared it unlawful. The government repaid A$751 million.
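To see why averaging income is mathematically flawed, consider a minimal Python sketch. Everything in it is an assumption made for illustration: the payment rule in `benefit_due`, its `base`, `free_area`, and `taper` parameters, and the income figures are invented and are not the actual Australian welfare formula. The sketch only shows the general mechanism: a person who worked for half the year and honestly claimed benefits for the other half looks like a debtor once their annual income is spread evenly across every fortnight.

```python
# Illustrative only: the payment rule and all dollar figures are hypothetical.

def benefit_due(income, base=500.0, free_area=300.0, taper=0.5):
    """Hypothetical fortnightly payment: full `base` until income exceeds
    `free_area`, then reduced by `taper` per extra dollar (never below 0)."""
    excess = max(0.0, income - free_area)
    return max(0.0, base - taper * excess)


def alleged_debt(actual_income, on_benefit, use_averaging):
    """Total overpayment claimed across the fortnights spent on benefit.

    The person is assumed to have reported their true income, so what they
    were paid is always `benefit_due(actual income)`.
    """
    average = sum(actual_income) / len(actual_income)
    debt = 0.0
    for income, claiming in zip(actual_income, on_benefit):
        if not claiming:
            continue
        paid = benefit_due(income)
        # The flawed step: assume the annual average was earned every fortnight.
        assumed = average if use_averaging else income
        debt += max(0.0, paid - benefit_due(assumed))
    return debt


# Worked 13 fortnights at 2,000 each, then unemployed and claiming for 13.
actual_income = [2000.0] * 13 + [0.0] * 13
on_benefit = [False] * 13 + [True] * 13

true_debt = alleged_debt(actual_income, on_benefit, use_averaging=False)
averaged_debt = alleged_debt(actual_income, on_benefit, use_averaging=True)

print(true_debt)      # 0.0
print(averaged_debt)  # 4550.0
```

Under these made-up numbers the person owes nothing, yet the averaged calculation produces a 4,550 debt, because it assumes they earned 1,000 in fortnights when they actually earned nothing.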

Michigan, 2013–2015. The state’s MiDAS unemployment fraud system automated all fraud determinations while laying off a third of agency staff. It issued over 60,000 fraud findings with a 93% error rate. Roughly 40,000 people were wrongly accused. Wages were garnished. At least 11,000 families filed for bankruptcy.

Every single one of these cases follows the same pattern: a system designed to enforce rules without discretion, mercy, or contextual understanding — producing results that human beings, once they found out, found intolerable.

Now imagine this at full scale

Here is where I want you to use your imagination — because the technology is evolving faster than the law can adapt.

Imagine a city where every traffic law is enforced perfectly. Not just speed limits — every law. That rolling stop at 6 AM on an empty street? Ticket. Changed lanes without signaling for exactly 100 feet? Ticket. Your registration lapsed by one day? Automated boot on your tire. In a single month, the average driver might accumulate thousands of dollars in fines — not because they are reckless, but because the laws were written with the assumption that most violations would go undetected.

Now scale that to tax enforcement. The IRS is already deploying machine learning that runs roughly six times per tax year, learning with each iteration. What happens when AI scrutinizes every deduction on every return — not the current 1–2% audit rate, but 100%? Stanford’s research already found that Black taxpayers claiming the EITC are audited 3–5 times more often due to algorithmic patterns. What happens when those patterns scale to everyone?

Or consider intellectual property. YouTube’s Content ID already processes over 400 hours of video uploaded per minute. It once flagged a nine-minute microphone test as copyrighted music. Under the DMCA, bots sent over 700 million takedown requests in a single year. What happens when that logic extends to every form of creative expression?

The scholars have been warning us

A growing body of legal scholarship is coalescing around a single, uncomfortable thesis: if we are going to have near-perfect enforcement, we need far more lenient laws.

Christina Mulligan, writing as early as 2008, argued that the discomfort people feel about perfect enforcement stems from unease about the underlying laws themselves — laws nobody actually wanted enforced to the letter. Woodrow Hartzog and colleagues made the case that enforcement inefficiency is a deliberate safeguard, and that anyone automating enforcement should be required to engineer slack into the system.

Bart Custers of Leiden University took it further in 2023: just as genetic variation is necessary for species to adapt, some room to break the law is necessary for legal systems to evolve. Perfect enforcement freezes the law in place — eliminating civil disobedience and making it impossible to challenge unjust rules.

Most provocatively, William Fallon argued in the Connecticut Law Review (2024) that government technology can now detect and punish every instance of illegal behavior without violating the Fourth Amendment — a capability he called “dystopian power.” And Timo Rademacher proposed an entirely new legal doctrine: a recognized right to violate the law — to restrict enforcement technologies in a principled manner.

Read that again. Serious legal scholars are arguing we may need a formal right to break the law.

What China tells us about where this road leads

I am not going to pretend any Western democracy is on the brink of authoritarianism. But it would be intellectually dishonest to ignore the country that has gone furthest down this road.

China’s social credit system — described by the French Institute of International Relations as “a chimera with real claws” — is not the unified dystopian score that Western media often portrays. It is a fragmented patchwork of blacklists and enforcement mechanisms. But the effects are real: millions of citizens have been blocked from buying plane or train tickets based on court blacklists.

The critical insight from NYU’s Center for Human Rights and Global Justice: the system does not need to create new laws. It merely enforces existing ones — comprehensively, automatically, and without discretion. A system that enforces every law perfectly is only as just as its worst statute. And when the worst statute criminalizes peaceful protest or online speech, perfect enforcement becomes perfect oppression.

So what do we actually do about this?

Here is where the pragmatist in me takes over. Sounding the alarm is important, but it is not enough. Five distinct reform arguments have emerged from this literature, and I think all of them deserve serious consideration:

First, penalty reduction. When enforcement becomes more certain, penalties should become proportionally lower. San Francisco’s SPUR institute noted that New York City’s automated speed cameras issue $50 fines, versus $600 for tickets written by officers. If you catch everyone, the size of the punishment needs to reflect that.

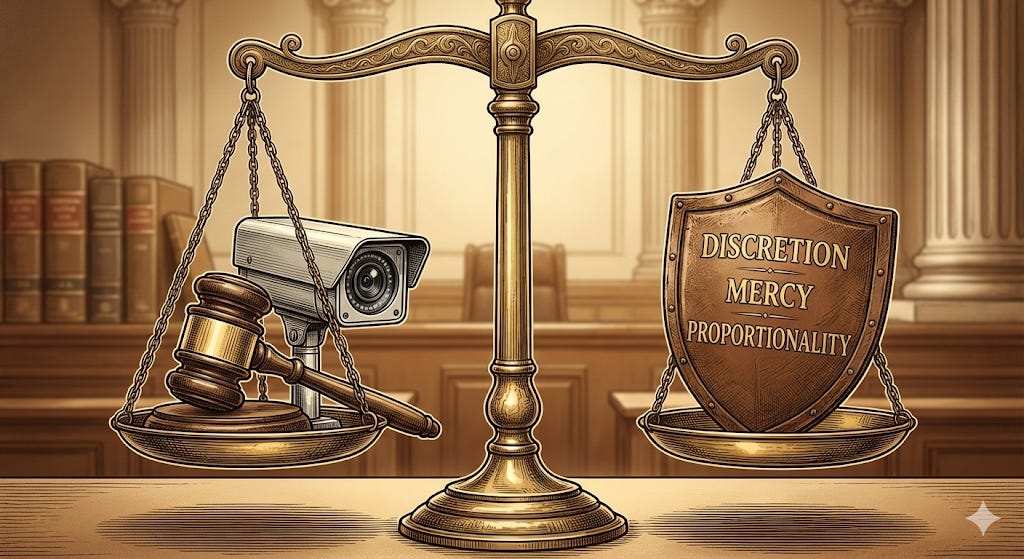

Second, mandatory discretion engineering. Anyone introducing automation into enforcement should be legally required to build in tolerance thresholds, contextual exceptions, and human review triggers. The machine should not be more rigid than the human it replaces.

Third, sunset clauses and enforcement audits. Every law subject to automation should be reviewed for its effects under near-perfect enforcement. Would this law be tolerable if applied to everyone, every time? If not, rewrite it.

Fourth, algorithmic impact assessments. The AI Now Institute proposed assessments modeled on environmental impact reviews — requiring agencies to publicly disclose all automated enforcement systems and their logic before deployment.

Fifth, substantive protections for minor illegality. De minimis thresholds below which automated enforcement simply does not apply, regardless of technical capability. Not because we want to encourage lawbreaking, but because a society where every minor infraction triggers automatic consequences is not one most of us would choose to live in.
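Several of these proposals can be written down concretely. The following is a rough Python sketch, not a description of any deployed system. Every name and number in it is hypothetical: the `Detection` fields, the 5 mph de minimis margin, the 0.95 confidence cutoff, and the one-warning rule are placeholders chosen for illustration. It shows a de minimis threshold, a contextual exception, and a human review trigger in one decision function, plus a helper that scales a fine so that the expected penalty (fine times the chance of being caught) stays constant as detection becomes certain.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    NO_ACTION = "no_action"
    WARNING = "warning"
    HUMAN_REVIEW = "human_review"
    FINE = "fine"


@dataclass(frozen=True)
class Detection:
    """One automated speeding detection (all fields are hypothetical)."""
    speed_over_limit_mph: float
    confidence: float              # detector's confidence, 0.0 to 1.0
    emergency_context: bool = False
    prior_warnings: int = 0


# Hypothetical policy parameters; a legislature would set the real ones.
DE_MINIMIS_MPH = 5.0
REVIEW_CONFIDENCE = 0.95
WARNINGS_BEFORE_FINE = 1


def decide(d: Detection) -> Outcome:
    """Apply engineered slack before any penalty is issued."""
    # De minimis threshold: trivial violations are never actioned.
    if d.speed_over_limit_mph <= DE_MINIMIS_MPH:
        return Outcome.NO_ACTION
    # Contextual exception or uncertain detection: a person decides.
    if d.emergency_context or d.confidence < REVIEW_CONFIDENCE:
        return Outcome.HUMAN_REVIEW
    # First clear offence gets the warning an officer might have given.
    if d.prior_warnings < WARNINGS_BEFORE_FINE:
        return Outcome.WARNING
    return Outcome.FINE


def certainty_adjusted_fine(officer_fine: float,
                            officer_detection_rate: float,
                            automated_detection_rate: float = 1.0) -> float:
    """Scale a fine so fine * detection probability is unchanged."""
    for rate in (officer_detection_rate, automated_detection_rate):
        if not 0.0 < rate <= 1.0:
            raise ValueError("detection rates must be in (0, 1]")
    return officer_fine * officer_detection_rate / automated_detection_rate


print(decide(Detection(speed_over_limit_mph=3.0, confidence=0.99)))
print(decide(Detection(speed_over_limit_mph=12.0, confidence=0.80)))
print(certainty_adjusted_fine(600.0, 1 / 12))
```

The final call uses a detection rate of 1 in 12 purely because that is the rate at which a $600 officer-issued fine and a $50 camera fine carry the same expected cost; it is back-calculated from those two figures, not a measured statistic.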

The bottom line

The question is not whether enforcement technology will reshape law. It already has. The question is whether democratic societies will revise their laws proactively — building back in the discretion, mercy, and proportionality that human enforcers once provided naturally — or wait for the next algorithmic catastrophe to force the issue.

Because here is the uncomfortable truth no politician wants to say out loud: many of our laws are only tolerable because they are not fully enforced. That was always the quiet bargain. And the machines are about to break it.

The Heritage Foundation and the ACLU do not agree on much. But they agree on this: we have too many laws, enforced with too little accountability, and technology is making the problem exponentially worse. When the political right and left both tell you the house is on fire, it might be time to grab an extinguisher.

One can only dream that our legislators will act before the next algorithm ruins another 35,000 lives.

This article was published by the HAIA Foundation.

The deployment of AI with large error rates is less tech news than evidence of specific corrupt governments (which are problematic even without the tech). Regarding the discretion that legislation assumes, AI could be used to classify cases that would be upheld in appellate court if prosecuted (rather than classifying every case of broken law). So I don’t see tech as problematic for handling crime. In fact, I think corruption cannot be solved without unseating some people who hold power. But not everyone who holds power deserves to have it taken away, so ending corruption may require automated surveillance of the most powerful.

When I think of AI, I think of the AI in Bungie's Halo franchise. But yeah, governments have the 'need' to use surveillance as an excuse to stay on their toes, and as a means to justify increased prices. Now, if governments have the ability to invest so many resources in building surveillance, security, etc., can't we do the same for the greater good? It's the exact response to 'Why cure when you can treat it recurrently?'