When AI Doesn’t Need Robots — It Has Us

The most disruptive path to AI-controlled physical labor may not run through factory-built humanoids but through the human body itself.

While the tech industry pours billions into humanoid robots that can barely work a two-hour shift, brain-computer interfaces are advancing from medical curiosities toward consumer devices. Meanwhile, the world already has 8 billion self-healing, self-reproducing humans, millions of whom take algorithmic instructions through smartphones and scanners. The convergence of these trends suggests a provocative alternative to the robot revolution: AI may colonize the body before it replaces it.

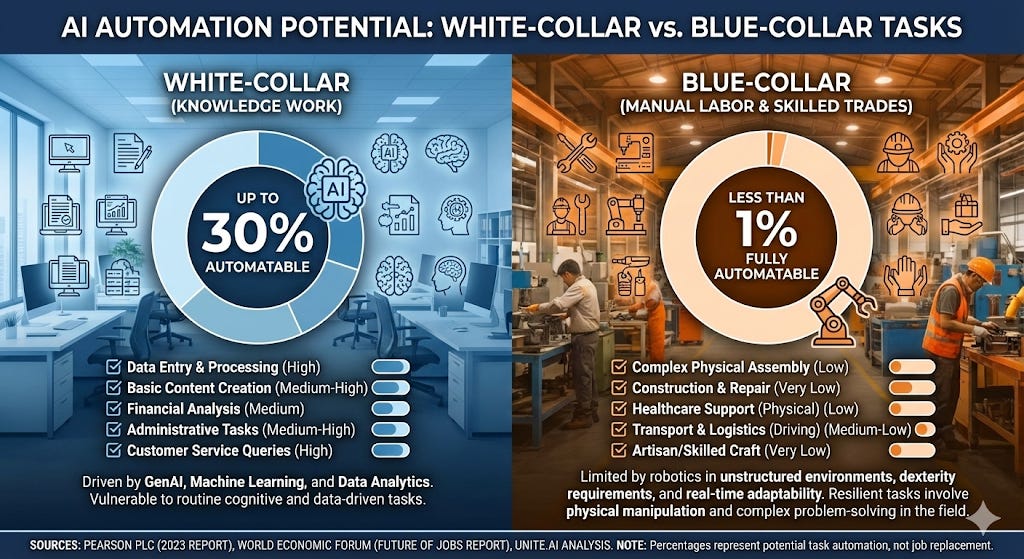

White-collar jobs fall first, confirming AI’s cognitive-before-physical pattern

The data is now overwhelming: AI disrupts knowledge work before physical work, inverting a half-century of automation patterns. Goldman Sachs’ landmark March 2023 report found that 300 million jobs worldwide could be affected by generative AI, with the highest exposure concentrated in office and administrative support (46% of tasks automatable), legal work (44%), and architecture/engineering (37%). Building and grounds maintenance sat at just 1%. Their updated 2025 analysis, authored by Joseph Briggs and Sarah Dong, projects generative AI could raise labor productivity by ~15% in developed markets at full adoption, but notes AI adoption remains “remarkably low”: only 9.3% of U.S. companies use generative AI in production.

McKinsey’s June 2023 report, “The Economic Potential of Generative AI,” quantified the value at $2.6 to $4.4 trillion annually, comparable to the United Kingdom’s entire GDP. The critical finding was what McKinsey called “reverse skill bias”: unlike previous automation waves that hit lower-skilled workers hardest, generative AI disproportionately impacts higher-educated knowledge workers. Their November 2025 follow-up, “Agents, Robots, and Us,” sharpened the picture: AI software agents could perform tasks occupying 44% of U.S. work hours, while robots could handle only 13%. The gap between AI’s cognitive reach and physical reach has never been wider.

The IMF’s January 2024 report found nearly 40% of global employment exposed to AI, rising to 60% in advanced economies. IMF Managing Director Kristalina Georgieva called AI “a tsunami hitting the labor market” and warned at Davos 2026: “My appeal is, wake up. AI is for real, and it is transforming our world faster than we are getting a handle on.” The World Economic Forum’s “Future of Jobs Report 2025” projects 170 million new jobs created but 92 million displaced by 2030, with the fastest-declining roles all cognitive: cashiers, administrative assistants, bank tellers, data entry clerks. Meanwhile, the Kellogg School at Northwestern, analyzing 200 years of innovation data, found AI would decrease relative demand for white-collar jobs while blue-collar jobs grow (“a 50-year trend in the making,” per researcher Bryan Seegmiller).

Ford CEO Jim Farley captured the zeitgeist bluntly: “AI will replace literally half of all white-collar workers.” Stanford’s Digital Economy Lab found that employment of early-career workers (ages 22 to 25) in the most AI-exposed occupations has already fallen 13%, relative to less-exposed roles, since large language models proliferated. The pattern is clear, and it creates the vacuum that the robot industry promises to fill.

Humanoid robots face a decade-long bottleneck that humans don’t

The promise of humanoid robots replacing blue-collar workers runs into a wall of physics, engineering, and economics that software AI never faced. Moravec’s Paradox — the observation that physical tasks trivial for humans are extraordinarily hard for machines — remains unbroken. UC Berkeley roboticist Ken Goldberg, publishing in Science Robotics in August 2025, calculated a “100,000-year data gap”: the text data used to train LLMs would take a human 100,000 years to read, but “we don’t have anywhere near that amount of data to train robots, and training robots is much more complex.” His conclusion: “The blue-collar jobs, the trades, are very safe. I don’t think we’re going to see robots doing those jobs for a long time.”

The technical limitations are severe. Dexterity is the core bottleneck: the human hand has ~17,000 specialized touch receptors and 27 degrees of freedom. Tesla’s latest Optimus hands manage 22 degrees of freedom with no meaningful tactile sensing. McKinsey’s 2025 assessment concluded: “Until humanoids achieve greater mechanical bandwidth and smarter sensorimotor integration, they will remain confined to repetitive, low-complexity tasks in structured environments.” Battery life is equally constraining: most humanoid robots operate for only 2–4 hours per charge under real conditions. Bain & Company’s 2025 Technology Report stated that achieving a full eight-hour shift “could take up to 10 years or even longer.”

Costs remain prohibitive. Current production costs range from $30,000 to $500,000 per unit depending on the model, with McKinsey’s teardown analysis showing actuators alone consuming 40–60% of total costs. Each humanoid requires up to 44 harmonic drives at $2,000–$5,000 each. Assembly takes approximately four days per unit because of the 200+ cables involved. Tesla’s Optimus, despite Elon Musk’s $20,000 price target, currently costs an estimated $120,000–$150,000 to produce and operates at “less than half the efficiency of human workers” in Tesla’s own factories. At Tesla’s October 2024 “We, Robot” event, Bloomberg reported that the robots mingling with guests were “in fact remotely operated by humans.”
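The harmonic-drive figures in this paragraph imply a wide cost band on their own. For readers who want to check the arithmetic, here is a minimal Python sketch; it assumes every drive costs the same and falls inside the quoted $2,000–$5,000 range, a simplification, since a real bill of materials mixes drive sizes.

```python
# Rough arithmetic on harmonic-drive spending for one humanoid robot.
# Assumption: every drive costs the same, somewhere in the quoted $2,000-$5,000 band.
DRIVES_PER_ROBOT = 44                 # upper bound quoted in the text
PRICE_LOW, PRICE_HIGH = 2_000, 5_000  # USD per harmonic drive, as quoted


def drive_cost_range(n_drives: int, low: int, high: int) -> tuple[int, int]:
    """Return the (minimum, maximum) total spend on harmonic drives."""
    return n_drives * low, n_drives * high


low_total, high_total = drive_cost_range(DRIVES_PER_ROBOT, PRICE_LOW, PRICE_HIGH)
print(f"Harmonic drives alone: ${low_total:,} to ${high_total:,}")
```

At the 44-drive upper bound, the drives alone come to $88,000 to $220,000, more than four times Musk’s $20,000 price target even at the low end, which fits the point that actuators dominate unit cost.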

The skeptics are formidable. Rodney Brooks, co-founder of iRobot and former MIT lab director, published a September 2025 essay calling the current humanoid approach “pure fantasy thinking,” arguing that “a lot of money will have disappeared, spent on trying to squeeze performance from today’s humanoid robots. But those robots will be long gone and mostly conveniently forgotten.” Gartner predicted in January 2026 that “fewer than 20 companies will scale humanoid robots for manufacturing to production stage by 2028.” The International Federation of Robotics acknowledged that “if and when a mass adoption of humanoids will take place remains uncertain.”

The supply chain adds another layer of vulnerability. China controls ~63% of key component manufacturing and 70% of the global humanoid robot supply chain. Goldman Sachs identified high-precision screws as “a surprising bottleneck” — global production lacks capacity for million-unit robot production. Tesla’s Optimus program was hobbled by Chinese rare earth export restrictions in 2025.

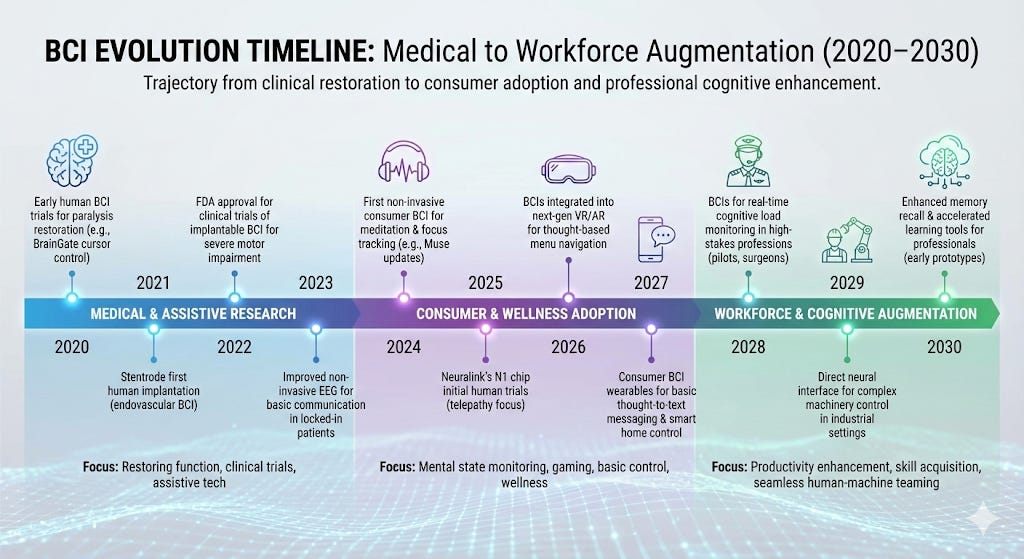

Brain-computer interfaces are advancing faster than most realize

While robots stumble over battery life and dexterity, BCIs are quietly achieving milestones that would have seemed impossible five years ago. Neuralink has implanted devices in seven quadriplegic patients as of mid-2025, with patient Noland Arbaugh playing chess, browsing the web, and controlling a phone entirely through thought. Another participant, who has ALS, narrated and edited a YouTube video using only brain signals. In May 2025, Neuralink received FDA Breakthrough Device designation for speech restoration, and it is expanding trials to Canada, the UK, Germany, and the UAE, with plans for 20,000 implants per year at five clinics.

The competition is fierce and producing remarkable results. Synchron’s Stentrode, implanted through the jugular vein without open brain surgery, achieved 100% accurate deployment across 10 patients with zero device-related serious adverse events. CEO Tom Oxley announced Synchron is “moving into the stage” of contemplating a pivotal trial. In May 2025, Apple unveiled a BCI Human Interface Device protocol, and Synchron became the first BCI maker to achieve native integration with iPhones, iPads, and Apple Vision Pro. Synchron’s implant recipients can now control Apple devices with their thoughts.

Speech BCIs have shattered records. UC Davis achieved 97% accuracy in brain-to-speech translation, published in the New England Journal of Medicine. UCSF set the Guinness World Record at 78 words per minute. Stanford demonstrated inner speech detection (decoding imagined, unspoken thoughts) in a study published in Cell in August 2025. An ALS patient used a speech BCI independently at home for over two years, producing more than 237,000 sentences while working full-time. Columbia University, under DARPA’s Neural Engineering System Design program, produced a single chip with 65,536 electrodes and wireless data transfer at 100 Mbps, 100× higher than any competing wireless BCI.
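The Columbia chip’s electrode count and link speed can be related with simple division. This Python sketch is only a back-of-the-envelope illustration; it assumes the 100 Mbps is decimal (100,000,000 bits per second), that every electrode streams at once with no compression, and a hypothetical 10-bit sample size. None of these assumptions come from the source.

```python
# Back-of-the-envelope: how much of the wireless link each electrode would get
# if the bandwidth were split evenly across every electrode at once.
# Assumptions (not from the source): decimal megabits, raw streaming,
# no compression, and a hypothetical 10-bit sample size.
LINK_BPS = 100 * 1_000_000  # 100 Mbps, as quoted
ELECTRODES = 65_536         # electrode count quoted (65,536 = 256 x 256)
BITS_PER_SAMPLE = 10        # hypothetical sample resolution, illustration only


def per_electrode_bps(link_bps: float, electrodes: int) -> float:
    """Bits per second available to each electrode under an even split."""
    return link_bps / electrodes


bps = per_electrode_bps(LINK_BPS, ELECTRODES)
samples_per_s = bps / BITS_PER_SAMPLE
print(f"{bps:,.0f} bit/s per electrode, about {samples_per_s:,.0f} samples/s at {BITS_PER_SAMPLE} bits")
```

Under these assumptions each electrode gets roughly 1.5 kbit/s, so the achievable per-channel sampling rate depends heavily on how many channels stream at once, a design detail this sketch does not model.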

The non-invasive frontier is equally significant. Meta shipped the Neural Band with its Ray-Ban Display glasses in September 2025 at $799, the first mass-market neural interface product. It uses surface electromyography (sEMG) at the wrist to read the electrical activity that the brain’s motor commands produce in the muscles controlling the hand. Sam Altman began building BCI startup Merge Labs in August 2025. The BCI market, estimated at $1.74 billion in 2022, is projected to reach $6.2 billion by 2030, with Morgan Stanley estimating an early total addressable market of $80 billion and a potential $400 billion opportunity.
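The two BCI market estimates above imply an annual growth rate. A short Python sketch recovers it, assuming smooth compound growth between the 2022 and 2030 figures; real markets rarely grow that evenly.

```python
# Implied compound annual growth rate (CAGR) between two market-size estimates.
# Inputs are the 2022 and 2030 figures quoted in the text, in billions of USD.
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the constant annual growth rate that turns start_value into end_value."""
    return (end_value / start_value) ** (1 / years) - 1


rate = cagr(1.74, 6.2, 2030 - 2022)
print(f"Implied CAGR: {rate:.1%}")
```

The result, roughly 17% a year, is simply a property of the two quoted forecasts, not an independent projection.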

DARPA has spent $500 million proving brains can be accelerated

The military-industrial complex has been the quiet engine of BCI advancement, and its programs reveal capabilities directly applicable to workforce augmentation. DARPA has invested over $500 million in the BRAIN Initiative since 2013 and initiated at least 40 programs related to neurotechnology over the past two decades. An Asimov Press analysis found that roughly half of invasive neural interface companies in the U.S. trace their funding directly or indirectly to DARPA.

The Targeted Neuroplasticity Training (TNT) program, launched in 2016 with over $50 million distributed to eight research teams, explicitly aims to “advance capabilities beyond normal levels.” Program manager Doug Weber targeted a 30% improvement in learning rate using vagus nerve stimulation during training for foreign language acquisition, marksmanship, and intelligence analysis. The University of Texas at Dallas demonstrated that vagus nerve stimulation paired with training “restored movements, reduced pain, increased feeling, improved memory and possibly sped up learning.” The University of Maryland received $8.58 million to study vagus nerve stimulation’s effect on second language acquisition via an earbud delivering low-voltage electrical signals.

The results from adjacent programs are striking. DARPA’s Restoring Active Memory (RAM) program demonstrated 37% improvement in short-term memory and 35% improvement in long-term memory using closed-loop hippocampal stimulation. A 2012 DARPA-funded study on transcranial direct current stimulation (tDCS) found improvements that “increased to a factor of two after a one-hour delay” — which DARPA’s Defense Sciences Office called “one of the largest effects on learning ever reported.” A subsequent study on laparoscopic surgical skills found that tDCS enabled subjects to reach the same skill level in two-thirds the time.

The N3 (Next-Generation Nonsurgical Neurotechnology) program, funded at $104–$125 million, aims to develop high-performance bidirectional brain-machine interfaces for able-bodied service members without surgery. Program manager Dr. Al Emondi stated: “DARPA is preparing for a future in which a combination of unmanned systems, artificial intelligence, and cyber operations may cause conflicts to play out on timelines that are too short for humans to effectively manage.” Battelle’s “BrainSTORMS” system uses magnetoelectric nanoparticles that are injected into the body and then guided to a target area of the brain. Rice University’s minutely invasive approach uses viral vectors delivering synthetic proteins. Both advanced to Phase II.

The DoD’s “Cyborg Soldier 2050” report, authored by the Biotechnologies for Health and Human Performance Council, identified four enhancement technologies feasible by 2050: ocular enhancements with data feeds to the optic nerve, programmed muscular control via optogenetic bodysuits, auditory enhancement, and direct neural enhancement enabling two-way data transfer between humans and machines. The report predicted these enhancements would be “largely driven by civilian demand and a robust bio-economy.” China is racing in parallel: its Brain Project, estimated at $1 billion through 2030, emphasizes brain-machine integration, and the U.S. Commerce Department sanctions against the Academy of Military Medical Sciences cited research into “purported brain-control weaponry.”

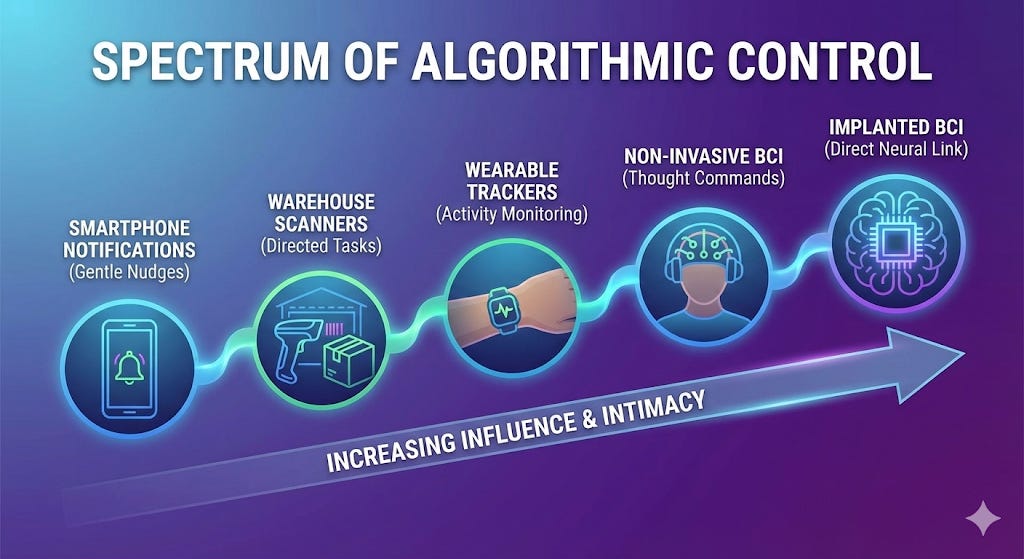

Humans already serve as AI’s physical agents — without implants

The thesis that humans could become AI-directed physical agents is not speculative; it describes the current reality for millions of workers. Amazon’s algorithmic management system tracks individual worker productivity in real time through barcodes, handheld scanners, workstation computers, AI cameras, and the A-to-Z app. Workers are expected to pack approximately 420 boxes per hour. The system automatically generates warnings and terminations without supervisor input; at one Baltimore facility, approximately 300 workers were fired in a single year for productivity reasons alone. Teke Wiggin of Northwestern University described the system as an “electronic whip” that “tracks the minutes workers are not working, enforces algorithmically-generated quotas, and automatically fires workers.”
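To make the quoted quota concrete, a small Python sketch converts 420 boxes per hour into a per-box time budget. The 8-hour shift and the omission of breaks are assumptions for illustration, not figures from the source.

```python
# Convert an hourly throughput quota into the time budget per item.
# The 420 boxes/hour figure is quoted in the text; the 8-hour shift with
# no breaks is a hypothetical assumption for illustration.
QUOTA_PER_HOUR = 420
SHIFT_HOURS = 8  # hypothetical


def seconds_per_item(items_per_hour: float) -> float:
    """Average seconds available per item at a given hourly rate."""
    return 3600 / items_per_hour


print(f"{seconds_per_item(QUOTA_PER_HOUR):.1f} s per box")
print(f"{QUOTA_PER_HOUR * SHIFT_HOURS:,} boxes per {SHIFT_HOURS}-hour shift")
```

The quota leaves under nine seconds per box on average, before any breaks are counted.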

The gig economy extends this model to 19 million platform workers globally. Human Rights Watch’s May 2025 report, “The Gig Trap,” documented that across Uber, DoorDash, Instacart, Lyft, and others, “workers are assigned orders, supervised, paid, and fired by algorithms.” Six of seven companies examined use opaque, frequently changing algorithms to calculate pay; workers don’t know their compensation until after completing the job. UPS’s ORION system directs 55,000 drivers using 1,000 pages of optimization code, processing 200,000+ routing options per route. The cultural shift was explicit: “Drivers were no longer route planners. They became executors of the algorithm.”
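To see why evaluating “200,000+ routing options” still means relying on shortcuts, a brief Python sketch counts the possible visiting orders for a handful of stops. It is a generic combinatorics illustration, not a description of ORION’s actual algorithm, and the stop counts are hypothetical.

```python
import math

# How many distinct orders exist for visiting n delivery stops?
# This counts every permutation; it is NOT how ORION works, only an
# illustration of why routing systems cannot check every possible route.


def route_orderings(n_stops: int) -> int:
    """Number of distinct orders in which n stops can be visited."""
    return math.factorial(n_stops)


for stops in (5, 9, 20):
    print(f"{stops:>2} stops: {route_orderings(stops):,} possible orders")

# Smallest stop count whose orderings already exceed 200,000.
threshold_stops = 1
while route_orderings(threshold_stops) <= 200_000:
    threshold_stops += 1
print(f"More than 200,000 orders appear at {threshold_stops} stops")
```

Already at nine stops the number of orderings passes 200,000, so for a full day’s route an optimizer can only examine a tiny fraction of the possibilities and must lean on heuristics to choose which candidates to score.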

Call center AI completes the picture. Modern systems analyze 100% of customer interactions, monitoring tone, sentiment, script adherence, empathy, and pause duration in real time. One agent described the experience: “I feel like I’m in a driving test that never ends.” The OECD’s February 2025 survey found over 70% of managers reported their firms used at least one automated tool to instruct, monitor, or evaluate employees. The academic framework for this is “digital Taylorism”: AI as the ultimate efficiency manager, decomposing labor into measurable, monitorable discrete units. The European Parliament responded in December 2025 with a vote of 451-45 demanding new rules on algorithmic management.

The critical insight, as Stacy Mitchell of the Institute for Local Self-Reliance observed: “Workers are treated like robots in effect because they’re monitored and supervised by these automated systems.” The smartphone and scanner already function as a rudimentary brain-computer interface — translating algorithmic decisions into human physical action. BCI would simply remove the screen.

The economic logic favors augmenting humans over building robots

The economic case for BCI-augmented humans over manufactured robots rests on a fundamental asymmetry: humans are already deployed, self-maintaining, and abundant. There are 8 billion humans already operational and distributed globally. They self-heal: human bones have “impressive self-healing capabilities,” per Frontiers in Robotics and AI, while robot self-repair remains in its infancy. A 2024 Nature article on robot self-healing noted the technologies “sit mostly in isolation.” Humans reproduce without factories, supply chains, or rare earth minerals. They run on roughly 2,000–2,500 dietary calories (kilocalories) per day, an average power of about 100 to 120 watts, comparable to a household lightbulb, while humanoid robots drain their batteries in 2–4 hours and require charging infrastructure.
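The wattage comparison follows from a unit conversion. A minimal Python sketch makes the arithmetic explicit, assuming the 2,000–2,500 calorie intake quoted above is spread evenly over 24 hours and using the standard 4,184 joules per dietary Calorie (kilocalorie).

```python
# Convert daily food energy (dietary Calories, i.e. kilocalories) into average power.
# Assumption: intake is burned evenly across a 24-hour day.
JOULES_PER_KCAL = 4184           # 1 kcal = 4,184 J (thermochemical calorie)
SECONDS_PER_DAY = 24 * 60 * 60   # 86,400 s


def kcal_per_day_to_watts(kcal_per_day: float) -> float:
    """Average metabolic power in watts for a given daily energy intake."""
    return kcal_per_day * JOULES_PER_KCAL / SECONDS_PER_DAY


for intake in (2_000, 2_500):
    print(f"{intake:,} kcal/day is about {kcal_per_day_to_watts(intake):.0f} W")
```

The quoted intake range works out to roughly 97 to 121 W, i.e. on the order of 100 watts.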

MIT’s CSAIL study found that in most cases it would be cheaper for companies to keep using human workers than to automate with AI computer vision systems: only about 23% of the wages paid for vision-dependent tasks would be economically attractive to automate. Researcher Neil Thompson noted: “Many tasks wouldn’t be economically attractive to automate for years or even decades.” MIT economist David Autor reinforced this at a 2025 conference: “In many parts of the world, labor is still cheaper than automation.” Garry Kasparov’s insight, now canonical in the augmentation literature, demonstrated that “weak human + machine + better process was superior to a strong computer alone and, more remarkably, superior to a strong human + machine + inferior process.”

The cost comparison is stark. A humanoid robot costs $30,000–$500,000 to purchase, requires $5,000–$50,000 in hidden integration costs, works 2–4 hours per charge, and needs a supply chain stretching to Chinese rare earth processors. A BCI-augmented human worker could theoretically operate on existing food infrastructure, work full shifts, navigate unstructured environments with 17,000 touch receptors per hand, and cost the price of a neural interface plus a software subscription. The global BCI market may reach $6.2 billion by 2030 — a fraction of the projected $38 billion humanoid robot market by 2035 — yet could augment millions more workers.
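The robot side of this comparison can be checked with the figures already quoted. The Python sketch below assumes an 8-hour shift and ignores charging or battery-swap time entirely; both are simplifications for illustration.

```python
import math

# Arithmetic on the humanoid-robot figures quoted in the text.
# Assumptions: an 8-hour shift, and charging/swap downtime ignored entirely.
SHIFT_HOURS = 8
UNIT_COST = (30_000, 500_000)       # USD, purchase price range quoted
INTEGRATION_COST = (5_000, 50_000)  # USD, integration cost range quoted


def charges_per_shift(runtime_hours: float, shift_hours: float = SHIFT_HOURS) -> int:
    """Minimum number of full charges needed to cover one shift."""
    return math.ceil(shift_hours / runtime_hours)


def upfront_range(unit: tuple[int, int], integration: tuple[int, int]) -> tuple[int, int]:
    """Combine purchase and integration ranges into a total up-front cost range."""
    return unit[0] + integration[0], unit[1] + integration[1]


for runtime in (2, 4):
    print(f"{runtime}-hour battery: {charges_per_shift(runtime)} charges per {SHIFT_HOURS}-hour shift")

low, high = upfront_range(UNIT_COST, INTEGRATION_COST)
print(f"Up-front cost per robot: ${low:,} to ${high:,}")
```

Even with a 4-hour battery, a robot would need recharging at least once during an 8-hour shift, and the charging downtime this sketch ignores would only add to that cost; up front, each unit runs $35,000 to $550,000 before any maintenance.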

Philosophers and ethicists saw this coming — and raised alarms

The philosophical groundwork for “humans as biological robots” stretches back to Julien Offray de La Mettrie’s 1747 L’Homme Machine (“Man a Machine”), which extended Descartes’ animal-as-automaton thesis to humans. The modern update is Yuval Noah Harari’s “hackable humans” framework. His equation, presented at the World Economic Forum: “Biological knowledge × Computing power × Data = the Ability to Hack Humans.” Harari warned: “We humans should get used to the idea that we are no longer mysterious souls. We are now hackable animals.” He predicted that within 10–30 years, algorithms “could also tell you what to study at college and where to work and whom to marry.”

Ray Kurzweil’s The Singularity Is Nearer (2024) predicts that by the late 2030s, “nanorobots the size of red blood cells will go into our brains, noninvasively, through the capillaries and provide wireless communication between the top layer of our brains and additional digital neurons hosted in the cloud.” His framing: “It won’t be us versus AI. We’re going to be made much more intelligent by merging with AI.” Kevin Warwick’s Project Cyborg (2002) already demonstrated the concept physically: his nervous system was connected to the internet, controlling a robot arm at the University of Reading remotely from Columbia University. He described how “my body was effectively extended over the Internet.”

A provocative reframing comes from Robert Sparrow and Adam Henschke’s 2023 paper in Parameters, the U.S. Army War College journal. Challenging the “centaur warfighting” concept, in which a human “head” directs a machine “body,” they argued that future human-AI teams would more likely be “minotaurs”: humans working under AI command and supervision, a direct inversion of the human-in-the-loop paradigm.

The ethical resistance is mounting. Rafael Yuste of Columbia University founded the NeuroRights Foundation, proposing five neurorights: mental privacy, personal identity, free will/agency, equitable access to cognitive augmentation, and protection from algorithmic bias. His survey found 29 of 30 consumer neurotechnology companies’ user agreements would give the company full rights to brain data — which he called “predatory.” Chile became the first country to constitutionally protect neurorights in October 2021, giving personal brain data the same legal status as an organ. In August 2023, Chile’s Supreme Court ordered U.S. BCI company Emotiv to erase brain data collected from a former senator — the first neurorights enforcement action.

Kate Crawford’s Atlas of AI (2021) documented how AI “re-imagines workers not as people but as units of productivity, optimizing their movements and output while disregarding bodily strain.” Shoshana Zuboff’s The Age of Surveillance Capitalism warned of “instrumentarian power” that “works its will through the automated medium of an increasingly ubiquitous computational architecture.” The ACM’s FAccT 2022 paper on BCIs warned of a fundamental autonomy crisis: “the shift from human to device decision making is implicit in the control exercised by ‘intelligent’ BCI devices.” A person may believe they are making choices when the BCI operates independently.

The policy landscape is scrambling to catch up

Major institutions are sounding alarms but remain behind the technology curve. The RAND Corporation’s 2020 report, “Brain-Computer Interfaces: U.S. Military Applications and Implications,” warned that “hacking BCI capabilities could theoretically provide adversaries with direct pathways into the emotional and cognitive centers of operators’ brains.” The AI Now Institute’s 2023 Landscape Report concluded that “worker surveillance is fundamentally about employers gaining and maintaining control over workers.” The ILO’s May 2025 working paper found one in four jobs at risk of being transformed by generative AI, with a significant gender disparity: jobs at highest automation risk constitute 9.6% of female employment versus 3.5% of male employment in high-income countries.

The OECD Employment Outlook 2023 reported 27% of jobs in OECD countries at high risk of automation, while surveys found 62% of finance workers felt increased pressure from AI-related data collection. Pew Research Center found a stark perception gap: 56% of AI experts believe AI will have a positive impact over 20 years, versus only 17% of the general public. Among workers specifically, 52% are worried about AI in the workplace. The EU AI Act, which entered into force in August 2024, classifies AI systems used in employment as “high-risk,” requiring risk assessments, documentation, and human oversight once its high-risk obligations take effect. In December 2025, the European Parliament voted 451-45 demanding additional rules on algorithmic management, with the European Trade Union Confederation calling for “human in command” rights.

The OECD’s 2019 Recommendation on Responsible Innovation in Neurotechnology and UNESCO’s 2023 Declaration on the Ethics of Neuroscience and Neurotechnology represent the most developed international frameworks for BCI governance. Colorado and Minnesota have incorporated neurological data into privacy laws. But as the Morningside Group’s landmark 2017 Nature paper warned, the pace of neurotechnology development is outstripping ethical and regulatory frameworks, creating risks to “privacy, agency, identity” and threatening to “raise social inequities.”

The infrastructure is already in place

The convergence path is visible in the data. White-collar AI displacement is accelerating while humanoid robots remain a decade from meaningful deployment, trapped behind Moravec’s Paradox, 2–4-hour battery lives, and supply chains concentrated in a single geopolitical rival. Meanwhile, BCI technology has crossed from laboratory to clinic to consumer device in under three years. DARPA has invested more than half a billion dollars in neurotechnology research, and the programs it funds have shown neural stimulation doubling learning gains in one tDCS study and improving short-term memory by 37%. Millions of workers already function as algorithm-directed physical agents through smartphones and warehouse scanners. The philosophical frameworks, from La Mettrie to Harari to Sparrow’s “minotaurs,” describe this trajectory with increasing precision.

The novel insight is not that any one of these trends is dangerous in isolation. It is that together, they form a coherent economic logic: why build a $150,000 robot that works two hours and can’t open a jar, when you could augment a human who already works eight hours, self-heals, self-reproduces, and navigates any environment on Earth?

The cyborg future didn’t arrive through surgical implants — it arrived through the smartphone in every warehouse worker’s hand. BCI simply removes the remaining friction.

The question is not whether this convergence will occur but whether the neurorights frameworks, labor protections, and ethical guardrails being built in Chile, the EU, and scattered institutions will arrive in time to ensure that the human body remains sovereign territory.

Published by the HAIA Foundation. For more on AI ethics, human augmentation, and the future of labor, subscribe at substack.haia.foundation.
