The Upgrade You Can’t Refuse: Technology, Evolution, and the Coming Age of Brain-Computer Interfaces

Why the pattern that left stick-shift drivers, landline loyalists, and smartphone skeptics behind is about to repeat—this time inside your skull. And why that should concern all of us.

Here is a pattern that has repeated with remarkable consistency for the past century: a new technology emerges, early adopters gain advantages, the mainstream follows, and those who refuse to adapt find themselves progressively marginalized—economically, professionally, and socially. We’ve seen it with automatic transmission, landline phones, mobile phones, smartphones, and now artificial intelligence. Each wave feels optional at first, then convenient, then necessary, then mandatory for participation in modern life.

The next wave is already here, and it’s not a device you’ll carry in your pocket. It’s a device that will sit inside your brain.

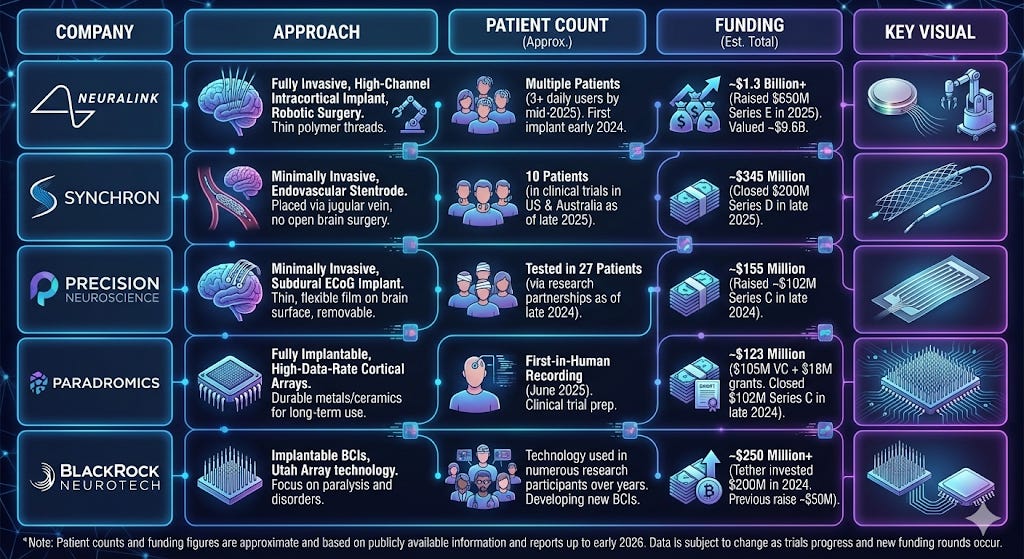

Brain-computer interfaces—BCIs—have moved from science fiction to clinical reality faster than most people realize. Neuralink has implanted devices in a dozen patients. Synchron is threading electrodes through jugular veins. Precision Neuroscience just received the first FDA clearance for a next-generation wireless brain interface. The technology works. The question isn’t whether BCIs will become widespread—the investment dollars, the FDA approvals, and the clinical results suggest that’s increasingly likely. The question is what happens to those who choose not to plug in, and whether we’re prepared for a world where the pressure to augment your cognition becomes as irresistible as the pressure to own a smartphone.

This isn’t a piece advocating for or against neural enhancement. It’s an examination of a pattern—and a warning about what we need to preserve before that pattern repeats itself in the most intimate domain possible: our own minds.

The Merciless Logic of Technological Adoption

Let’s start with cars, because cars are simple and the data is unambiguous.

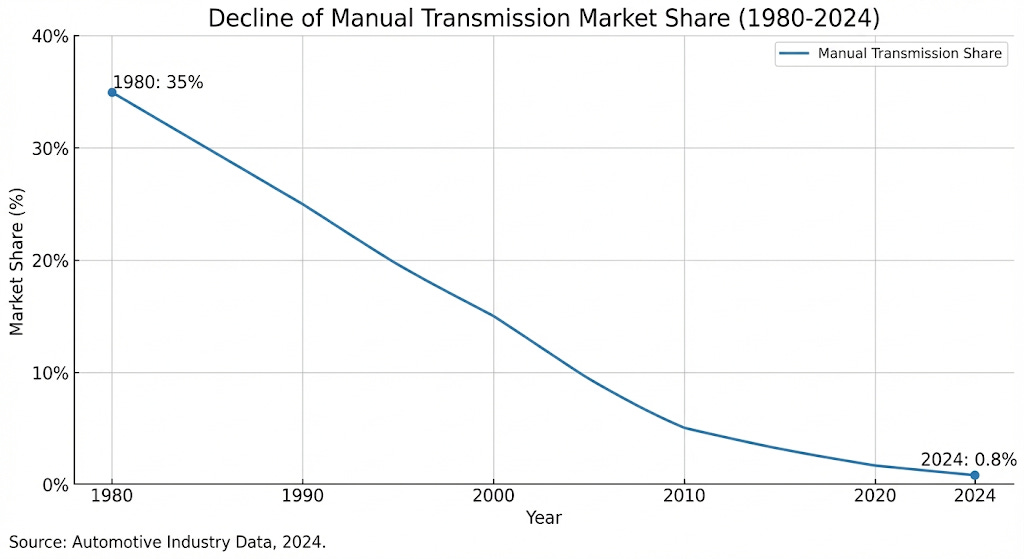

In 1980, 35% of vehicles sold in the United States had manual transmissions. Today, that number has collapsed to 0.8%. Not eight percent—zero point eight. The decline wasn’t gradual; it followed what innovation theorists call an S-curve, with a long plateau, a sudden drop, and then near-total extinction. Manufacturers now routinely eliminate manual options entirely because they’re incompatible with features like adaptive cruise control—and the electric vehicle transition eliminates multi-gear transmissions altogether.

If you were a professional driver in 1985 who insisted on stick shift, you had options. By 2000, fewer options. By 2015, you were a curiosity. Today, you’re essentially unemployable in most transportation and logistics roles. The market didn’t care about your preferences, your skills, or your arguments about fuel efficiency and driver engagement. The market moved on.

Now consider phones. According to Pew Research Center, cell phone ownership in the United States climbed from the early 2000s, when tracking was still nascent, to 98% of Americans by 2025. The International Telecommunication Union documented that a 10% increase in mobile broadband penetration correlates with a 2.5% increase in GDP per capita in developing economies. IEEE research found that over 80% of middle-skill jobs now require digital proficiency.

What happened to the 2% who still don’t have cell phones? They’re not participating in the modern economy in any meaningful way. They can’t receive two-factor authentication codes. They can’t use rideshare apps. They can’t access most government services efficiently. They’ve been—and I use this word deliberately—excluded.

Smartphones accelerated this pattern further. Pew data shows ownership jumped from 35% of U.S. adults in 2011 to 91% by 2024. A 2011 study found stark employment correlations: 48% of full-time employees owned smartphones versus only 27% of unemployed individuals. Research published in Sage Journals documented how smartphones “increase work efficiency and productivity, strengthen work relationships through organizational communication, information sharing, and collaboration.”
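To make the S-curve idea concrete, here is a rough sketch that fits a logistic curve through the two Pew figures quoted above (35% in 2011, 91% in 2024). The 100% ceiling, the pure logistic shape, and the function names are my assumptions for illustration; this is not Pew's methodology, and two points cannot validate the model.

```python
import math


def logit(p: float) -> float:
    """Log-odds of a proportion p (0 < p < 1)."""
    return math.log(p / (1.0 - p))


def fit_logistic(t1: float, p1: float, t2: float, p2: float) -> tuple[float, float]:
    """Fit adoption(t) = 1 / (1 + exp(-k * (t - t0))) through two points.

    Assumes adoption saturates at 100% (an illustrative assumption).
    Returns (k, t0): the growth rate per year and the 50%-adoption year.
    """
    k = (logit(p2) - logit(p1)) / (t2 - t1)
    t0 = t1 - logit(p1) / k
    return k, t0


def adoption(t: float, k: float, t0: float) -> float:
    """Modeled share of adopters at time t."""
    return 1.0 / (1.0 + math.exp(-k * (t - t0)))


# The two smartphone ownership figures cited in the text.
GROWTH_RATE, MIDPOINT_YEAR = fit_logistic(2011, 0.35, 2024, 0.91)

if __name__ == "__main__":
    print(f"growth rate per year: {GROWTH_RATE:.3f}")
    print(f"modeled 50% crossover: {MIDPOINT_YEAR:.1f}")
    for year in (2011, 2015, 2020, 2024):
        print(year, f"{adoption(year, GROWTH_RATE, MIDPOINT_YEAR):.0%}")
```

Under these assumptions the curve passes through both data points by construction. Everything in between is the model's guess, not measured ownership.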

The pattern is consistent: adopt the technology, gain leverage; refuse the technology, lose ground. Every. Single. Time.

AI Is Moving Faster Than Any Previous Wave

Here is where things get interesting—and alarming.

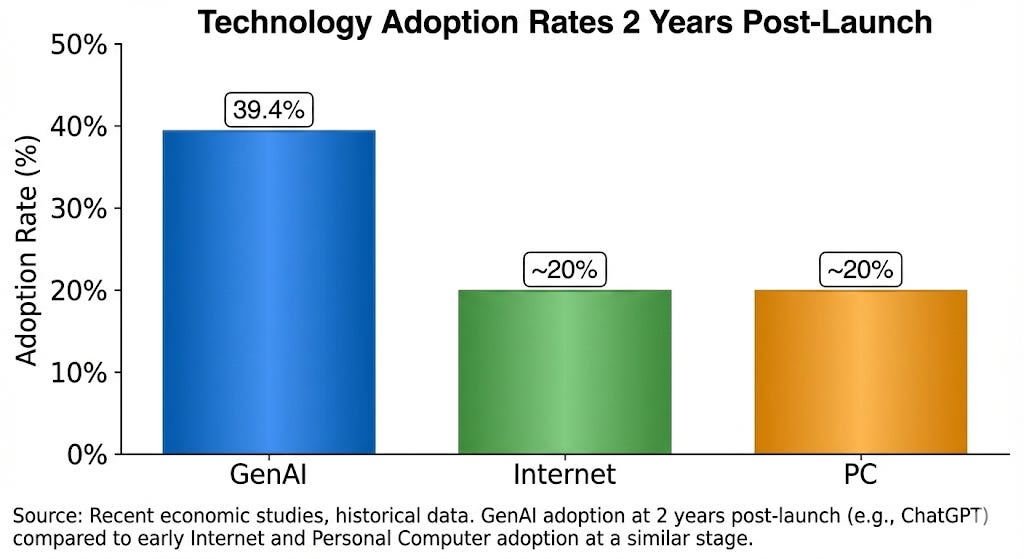

Generative AI is outpacing every previous technology adoption curve in recorded history. According to a 2024 analysis by economists Alexander Bick, Adam Blandin, and David Deming published through the Federal Reserve Bank of St. Louis, 39.4% of Americans aged 18-64 used generative AI within two years of ChatGPT’s launch. That’s roughly double the adoption rate of the internet or personal computers at the same point post-introduction.

The productivity evidence is now robust across multiple peer-reviewed studies, and the numbers are striking:

A Stanford/MIT study of 5,172 customer support agents handling 3 million conversations found a 14-15% average productivity increase from AI assistance—rising to 34% for novice workers. A Harvard Business School study of 758 BCG consultants found that for tasks within AI’s capabilities, consultants completed 12.2% more tasks, 25.1% faster, with 40%+ higher quality. Microsoft’s research found developers using GitHub Copilot completed coding tasks 55.8% faster than control groups.

And here’s the kicker: the wage implications are already materializing. PwC’s 2024-2025 analysis found workers with AI skills command a 56% wage premium—up from 25% the previous year—with wages rising twice as fast in AI-exposed industries. A study published in PNAS found that unequal ChatGPT adoption is already exacerbating existing workforce inequalities, with women 16 percentage points less likely to use ChatGPT for work.

So here we are, in early 2026, watching a familiar pattern accelerate: those using AI tools are pulling ahead, those refusing to adapt are falling behind, and the gap is widening faster than any previous technology transition. The people who dismissed ChatGPT as a fad in 2023 are now scrambling to catch up—or quietly accepting that certain career paths have closed to them.

This brings us to the obvious question: What comes next?

BCIs Have Arrived. Most People Just Haven’t Noticed Yet.

The brain-computer interface landscape has transformed dramatically since 2023, and the progress is worth understanding in detail—because this technology is no longer theoretical.

Noland Arbaugh, a 29-year-old who became quadriplegic in a 2016 diving accident, became Neuralink’s first human patient on January 28, 2024, at Barrow Neurological Institute. He achieved cursor control on day one and broke the 2017 world record for BCI cursor speed and precision. He’s now playing chess, playing video games, and browsing the internet using only his thoughts. By September 2025, Neuralink had implanted devices in 12 patients across the US, Canada, UK, and UAE, with the company targeting 20-30 participants by the end of 2025.

Synchron has taken a different approach with its Stentrode device, which is implanted through the jugular vein without open-brain surgery—a procedure taking a median of 20 minutes. Their COMMAND study reported 100% success on the primary safety endpoint with no device-related serious adverse events. The company has raised $345 million, with investors including Jeff Bezos and Bill Gates.

Precision Neuroscience, founded by Benjamin Rapoport (a former Neuralink co-founder who left citing safety concerns), achieved a historic milestone in March 2025: it became the first next-generation wireless BCI company to receive FDA 510(k) clearance. The company has implanted its device in 37 patients and reached a $500 million valuation.

Paradromics received FDA approval in November 2025 for their Connect-One study targeting speech restoration. Blackrock Neurotech claims the largest patient footprint, with their Utah Array technology used in 40+ of approximately 50 people worldwide with implanted BCIs.

Right now, these devices are being used for medical purposes: restoring communication to paralyzed patients, treating depression, managing Parkinson’s symptoms. That’s where the technology starts. But if you think it will stay there, you haven’t been paying attention to how technology adoption works.

The Trajectory That Should Concern You

Let me sketch out a trajectory that isn’t science fiction—it’s an extrapolation of documented patterns.

Phase 1 (Now–2030): Medical applications expand. BCIs become standard treatment for severe paralysis, treatment-resistant depression, and neurodegenerative diseases. Insurance begins covering some procedures. Public perception shifts from “experimental” to “established medical technology.” The devices get smaller, safer, and cheaper.

Phase 2 (2030–2035): Enhancement applications emerge. Researchers demonstrate that BCIs can enhance memory retention, accelerate learning, or improve focus in healthy individuals. The first “cognitive enhancement” clinics open in jurisdictions with permissive regulations—think medical tourism, but for brain upgrades. Early adopters are wealthy individuals, competitive professionals, and military personnel. Ethical debates intensify, but the technology spreads.

Phase 3 (2035–2040): Competitive pressure builds. Studies show that BCI-enhanced workers outperform unenhanced colleagues by measurable margins—faster information processing, better memory recall, seamless human-AI collaboration. Employers begin quietly preferring enhanced candidates, though explicit discrimination remains illegal. Universities report that enhanced students are outperforming their peers. Parents begin considering enhancement for their children’s competitive futures.

Phase 4 (2040+): The new normal. BCIs become as common as smartphones—perhaps more so. Those without enhancement find themselves at a persistent disadvantage across education, employment, and social interaction. A significant portion of the population has devices connected directly to their nervous systems, integrated with AI assistants, cloud services, and each other.

Does this sound implausible? Consider that in 2007, the idea of carrying a computer in your pocket that tracks your location, monitors your health, and mediates most of your social interactions would have seemed dystopian to many. Now we call it Tuesday.

Here Is Where I Start Getting Worried

Everything I’ve described so far follows a familiar pattern: technology creates advantages, adoption spreads, holdouts lose ground. We’ve seen this movie before. What makes BCIs different—what should give us pause—is that this technology interacts directly with the neural substrate of identity and cognition.

When you put down your smartphone, you’re still you. When a device is embedded in your neural tissue, the distinction between tool and self becomes considerably blurrier.

Let me walk through three categories of concern that deserve serious attention.

The Hacking Problem

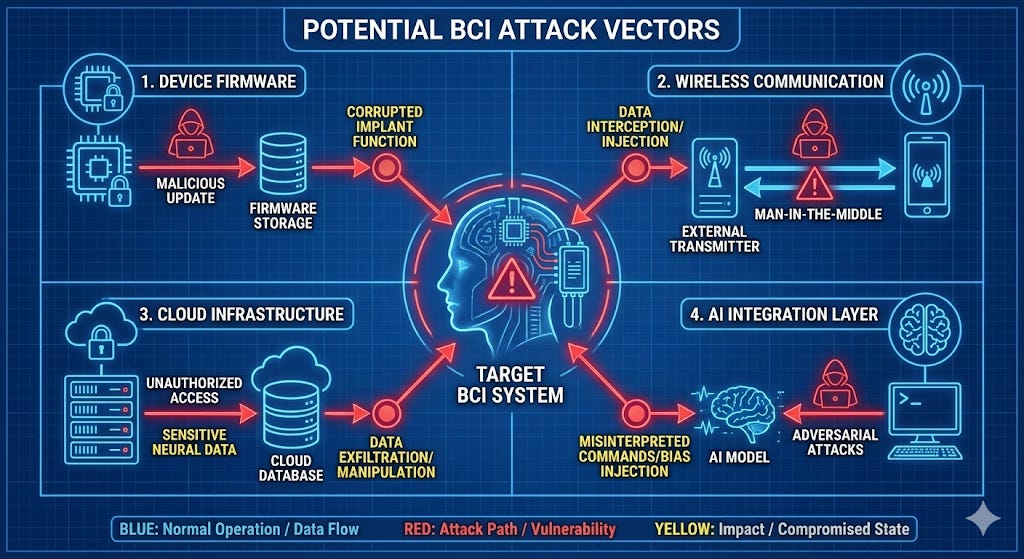

Researchers at the University of Oxford have coined the term “brainjacking” to describe the unauthorized control of brain implants. The foundational paper, published in World Neurosurgery by Laurie Pycroft and colleagues, identified two categories of attacks: blind attacks requiring no patient-specific knowledge (disabling stimulation, draining batteries, stealing information) and targeted attacks enabling impairment of motor function, alteration of impulse control, modification of emotions, induction of pain, and modulation of reward systems.

Read that last sentence again. We’re talking about the possibility of remotely inducing pain, altering someone’s emotional state, or manipulating their reward system—the neural machinery that determines what feels pleasurable and what feels aversive.

The seminal demonstration of neural data extraction came from researchers including Ivan Martinovic (Oxford) and Dawn Song (UC Berkeley) at the 2012 USENIX Security Symposium. Using a consumer-grade Emotiv EPOC headset—not even an implanted device—they showed that EEG signals could reveal credit card numbers, PIN codes, dates of birth, and home locations, with private information entropy decreasing by 15-40% compared to random guessing.
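To get a feel for what a 15-40% entropy reduction could mean, here is a back-of-the-envelope sketch for a four-digit PIN. It assumes every PIN is equally likely beforehand and treats the remaining entropy as an effective number of equally likely candidates. Both are simplifying assumptions of mine, not the method used in the study, and the function names are hypothetical.

```python
import math


def remaining_entropy_bits(num_digits: int, reduction: float) -> float:
    """Entropy left after a fractional reduction, for a uniform decimal PIN.

    Assumes a uniform prior over all 10**num_digits PINs (illustrative only).
    """
    prior_bits = num_digits * math.log2(10)
    return prior_bits * (1.0 - reduction)


def effective_candidates(num_digits: int, reduction: float) -> float:
    """Size of a uniform search space with the same remaining entropy."""
    return 2.0 ** remaining_entropy_bits(num_digits, reduction)


if __name__ == "__main__":
    # 0.0 is the random-guessing baseline; 0.15 and 0.40 are the bounds
    # of the range quoted in the text.
    for reduction in (0.0, 0.15, 0.40):
        bits = remaining_entropy_bits(4, reduction)
        candidates = effective_candidates(4, reduction)
        print(f"reduction {reduction:.0%}: {bits:.2f} bits, "
              f"about {candidates:,.0f} effective candidates")
```

On this simplified reading, a 40% reduction shrinks the effective guessing space for a four-digit PIN from 10,000 possibilities to roughly 250. That is a large head start for an attacker, even though the PIN is not read out directly.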

Kevin Fu, the first Director of Medical Device Cybersecurity at the FDA, testified to Congress in 2025: “A bad actor who discovers a vulnerability could disable patient monitors during surgeries, spoof vital signs in intensive care units, or hijack infusion pumps to administer incorrect dosages.”

Now extrapolate that to devices with 1,024 electrodes threaded through your brain tissue.

Every class of connected device has eventually been hacked: smartphones, cars, pacemakers, insulin pumps, baby monitors, smart home devices, voting machines. The idea that brain-computer interfaces will somehow be the exception to this pattern is, to put it charitably, optimistic.

And unlike a hacked email account or a compromised credit card, a compromised neural interface doesn’t have a “change password” option.

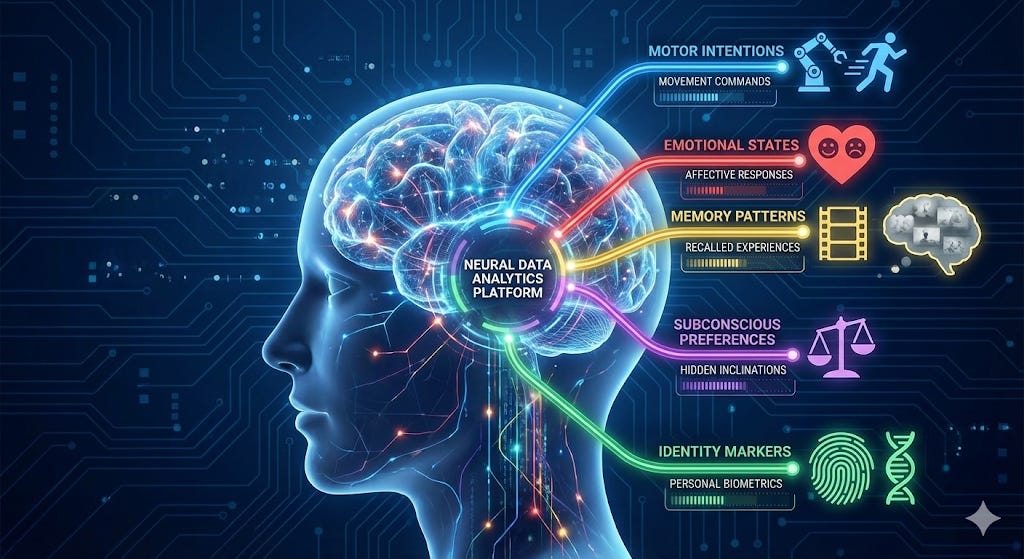

The Privacy Problem

Your brain is the last private space. Every thought, every memory, every fleeting impulse and half-formed desire—these have, throughout human history, been yours alone unless you chose to share them. BCIs change that calculus fundamentally.

Nita Farahany, a Duke University professor and current President of the International Neuroethics Society, has been sounding this alarm for years. In her book The Battle for Your Brain, she defines cognitive liberty as “the right to self-determination over our brains and mental experiences, as a right to both access and use technologies, but also a right to be free from interference with our mental privacy and freedom of thought.”

In an interview with Harvard Gazette, Farahany warned: “What neurotechnology makes possible is moving from an era where you have a skull as a fortress, an impenetrable fortress that your thoughts live within, to one in which your thoughts can be accessed.”

Rafael Yuste, a Columbia University neuroscientist who founded the NeuroRights Foundation, frames the stakes even more starkly: “We’re talking about the possibility of creating a hybrid human, which could divide humanity into two species: augmented and non-augmented. Mental augmentation should be in the forefront of the human-rights debate.”

Imagine a world where your employer can monitor your focus levels in real-time. Where advertisers can measure your emotional response to their pitches at the neural level. Where insurance companies can assess your mental health by analyzing your brain activity patterns. Where governments can detect “disloyal” thoughts before you’ve even articulated them to yourself.

This isn’t paranoid speculation—these are use cases that companies are actively exploring. Consumer neurotechnology devices are already being marketed for “wellness monitoring” and “productivity optimization.” The neural data they collect is, in most jurisdictions, completely unregulated.

The Atrophy Problem

Here’s something we don’t talk about enough: when you outsource a cognitive function to technology, the underlying neural capacity can degrade.

Researchers at McGill University found that greater lifetime GPS experience correlates with worse spatial memory during self-guided navigation, with a three-year longitudinal study finding that GPS use was associated with steeper decline in hippocampal-dependent spatial memory. Lead researcher Véronique Bohbot expressed fears that “reducing the use of spatial navigation strategies may lead to earlier onset of Alzheimer’s or dementia.”

The foundational “Google Effect” study by researchers at Columbia, Wisconsin-Madison, and Harvard demonstrated that when people expect future access to information, they have lower recall of the information itself but enhanced recall for where to access it. The internet has become a form of transactive memory: we remember where to find things rather than the things themselves.

Aviation provides perhaps the starkest warning. The FAA issued Safety Alert 13002 in 2013 recommending that pilots turn off autopilot and hand-fly during low-workload conditions because “continuous use of autoflight systems does not reinforce a pilot’s knowledge and skills in manual flight operations.” A Cranfield University study found that 77% of commercial pilots reported their manual flying skills had deteriorated due to automation. The 2013 crash of Asiana Airlines Flight 214 in San Francisco was attributed partly to automation dependency: three trained pilots allowed the aircraft to crash due to inadequate manual flying skills.

Now imagine this pattern applied not to navigation or flying, but to thinking itself.

If a BCI handles your memory retrieval, does your biological memory atrophy? If an AI co-processor assists with complex reasoning, do your unassisted reasoning capabilities decline? If emotional regulation can be outsourced to a device, what happens to the neural circuits that normally develop through the hard work of managing your own feelings?

And here’s the really uncomfortable question: if your device malfunctions—or gets hacked—and you’ve lost the underlying capabilities, what happens then?

The Coming Cognitive Divide

Let me paint a picture of a society that isn’t particularly dystopian—just unequal in a new way.

It’s 2045. BCI technology has matured considerably. The devices are smaller, safer, and more capable. Insurance covers medical applications, and enhancement applications have become available through a combination of regulated clinics and medical tourism. Approximately 30% of adults in wealthy countries have some form of neural interface—a rate comparable to smartphone ownership in 2010.

In education: Enhanced students consistently outperform their unenhanced peers. They can access information instantaneously, maintain focus for longer periods, and integrate with AI tutoring systems at a level that feels like cheating to those without implants. Elite universities report that 60% of their incoming class has some form of cognitive enhancement. Debates rage about fairness, accommodation, and what “authentic achievement” even means anymore.

In employment: Knowledge work has bifurcated. High-end positions increasingly assume neural interface capabilities—not as a formal requirement (that would be illegal in most places), but as a practical necessity. Try being a competitive analyst when your peers can process information at three times your speed. Try being a surgeon when your colleagues have tremor-correction and enhanced pattern recognition built into their motor cortex. The premium for enhancement has become a barrier to entry.

In social life: Communication patterns have diverged. Enhanced individuals can share thoughts and experiences at a bandwidth that feels alien to the unenhanced. Social media has evolved into something more like social telepathy—at least among those with the hardware. Cultural production increasingly assumes neural interface access. The unenhanced experience the world like someone trying to participate in TikTok culture through a fax machine.

In politics: A new civil rights movement emerges around “cognitive equality.” Debates echo historical conflicts over access to education, healthcare, and economic opportunity—but with higher stakes. The enhanced worry about restrictions on their capabilities; the unenhanced worry about becoming a permanent underclass. Both sides have legitimate grievances.

Francis Fukuyama warned about precisely this scenario in Our Posthuman Future back in 2002: “If the wealthy have cognitive advantages that lead to more wealth and further cognitive advantages (in a reinforcing feedback loop), there is a downstream concern that we might face a deeper societal ‘cognitive divide’ between enhanced and unenhanced individuals.” A recent paper in PLOS Biology echoed this concern, noting that such a divide could prove more fundamental than any previous form of inequality.

This isn’t a prediction; it’s a plausible trajectory based on how previous technologies have stratified society. The digital divide provides empirical precedent: research has documented how technology disparities can exacerbate existing inequalities rather than resolving them. There’s no reason to assume brain-computer interfaces will be different.

What We Need to Preserve

Here is where I want to shift from analysis to advocacy—not advocacy for or against BCIs, but advocacy for preserving something essential regardless of which path we collectively choose.

Cognitive Liberty Must Work Both Ways

Marcello Ienca at the Technical University of Munich, along with Roberto Andorno at the University of Zurich, proposed four fundamental neurorights in their landmark 2017 paper: cognitive liberty (freedom from coercive neurotechnology use), mental privacy (protection from unauthorized access to neural information), mental integrity (protection from harm to neural computation), and psychological continuity (preservation of personal identity from unconsented alteration).

The concept of cognitive liberty itself was coined earlier by neuroethicist Wrye Sententia and legal theorist Richard Glen Boire, defined as “the right of each individual to think independently and autonomously, to use the full power of his or her mind, and to engage in multiple modes of thought.”

The key insight is that cognitive liberty protects both the right to enhance and the right to refuse enhancement. As Sententia put it: “Cognitive liberty’s strength is that it protects those who do want to alter their brains, but also those who do not.”

This needs to be enshrined in law before the technology matures—not after, when economic pressures will make such protections feel like anachronistic obstacles.

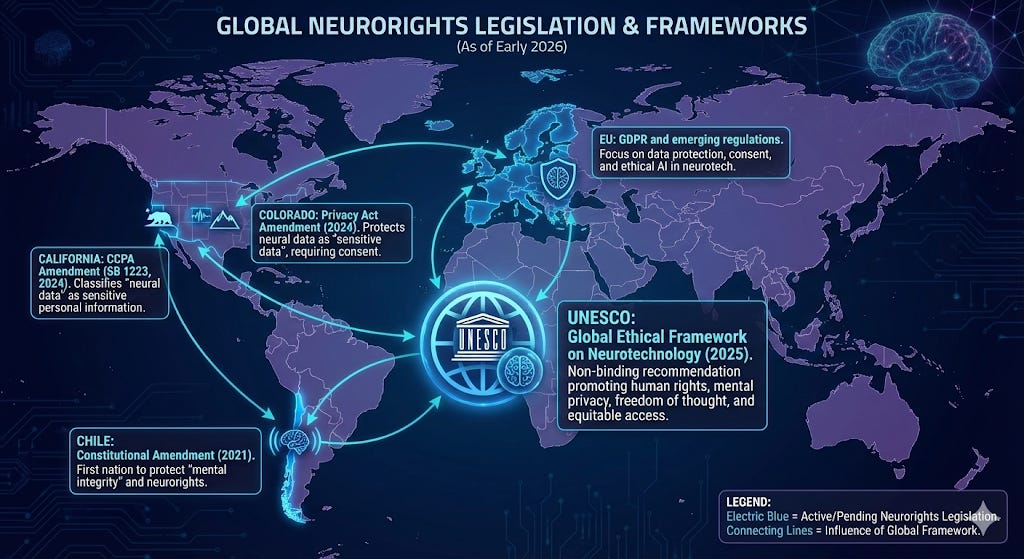

We Need Regulatory Frameworks Now

Some progress is happening, though unevenly.

Chile became the first country to constitutionally protect neurorights in October 2021, amending its constitution to state that the law must “especially protect brain activity, as well as the information derived from it.” In August 2023, Chile’s Supreme Court issued the world’s first court ruling on neuroprivacy, finding that a U.S. company violated constitutional rights by retaining neural data without explicit consent—classifying neural data as equivalent to human organs.

UNESCO adopted the Recommendation on the Ethics of Neurotechnology in November 2025—the first global normative framework. It protects mental privacy, bans non-therapeutic neurotechnology use on children, warns against workplace productivity monitoring, and requires explicit consent. The OECD had earlier adopted the first international standard in December 2019.

The EU AI Act, which entered into force in August 2024, specifically mentions BCIs as posing potential “unacceptable risk” due to the potential for subliminal manipulation. In the United States, Colorado became the first state to define “neural data” in law in 2024, with California following.

But regulatory frameworks are racing against a technology curve that shows no signs of slowing. And historical experience suggests that once a technology becomes economically essential, restrictions become politically difficult to maintain.

We Need Cognitive Fail-Safes

This is perhaps the most counterintuitive recommendation, but I believe it’s the most important: we need to deliberately preserve unaugmented cognitive capabilities as a resilient backup system.

The aviation industry learned this lesson the hard way. Pilots now train to fly without automation precisely because they’ve discovered that automation dependency creates dangerous skill atrophy. The FAA’s recommendation to hand-fly during low-workload conditions isn’t nostalgia—it’s safety engineering.

Apply this logic to cognitive enhancement: even if BCIs become widespread, we need societal investment in maintaining the ability to think, remember, navigate, and decide without neural assistance. This means educational curricula that develop unassisted capabilities. Mental exercises that keep biological neural pathways strong. Regular “unplugged” periods that prevent complete dependence on augmentation.

Think of it as cognitive cross-training. Athletes don’t just train their primary sport—they develop complementary capabilities that provide resilience and prevent injury. A society increasingly dependent on neural augmentation needs the equivalent: systematic development of backup cognitive systems that can function when the primary systems fail.

And they will fail. Every technology fails eventually. The question is whether we’ll have preserved the capability to function when it does.

The Choice We Haven’t Made Yet

I want to be clear about something: I’m not arguing against brain-computer interfaces. The technology offers genuine benefits for people with paralysis, depression, neurodegenerative diseases, and other conditions. The medical applications are already transforming lives.

What I’m arguing is that we need to make conscious choices about this technology rather than sleepwalking into adoption driven purely by competitive pressure. We need to decide—collectively, deliberately, with full awareness of the tradeoffs—what we want to preserve about human cognition and human autonomy.

The pattern of technological adoption is real. The economic pressure to adopt BCIs will likely become intense. But patterns can be interrupted. Choices can be made. We regulated nuclear technology rather than letting it proliferate freely. We developed safety standards for automobiles rather than accepting whatever the market produced. We can shape how neural interfaces integrate into society rather than simply accepting the default trajectory.

The people who refused to adopt previous technologies (manual transmission loyalists, landline holdouts, smartphone skeptics) were indeed left behind. But the situations aren’t perfectly analogous. Putting down your smartphone leaves your underlying faculties in place. Removing a neural interface from someone whose biological cognitive systems have atrophied over years of disuse is a different matter entirely.

We have a window—probably a narrow one—to establish the frameworks, the rights, the educational investments, and the cultural norms that will shape how this technology integrates into human life. That window closes once adoption reaches critical mass and the technology becomes, like the smartphone, simply assumed.

The question isn’t whether you’ll want a neural interface. Given enough competitive pressure, almost everyone wants whatever technology provides advantage. The question is whether we’ll preserve the right to refuse, the capability to function without it, and the humanity that exists independent of our technological augmentation.

That’s the choice we haven’t made yet. And we’re running out of time to make it deliberately rather than having it made for us by default.

The HAIA Foundation works to ensure that advanced technology serves human flourishing. For more on the intersection of AI, neurotechnology, and human rights, subscribe to our Substack.
