The Silent Takeover Above Your Head: How AI in Outer Space Could Come Back to Haunt Us

AI is already making life-and-death decisions in orbit — and not a single binding law on Earth governs it.

Here is a sentence that should unsettle you: right now, as you read this, over ten thousand satellites are autonomously dodging each other in orbit, AI systems on Mars are choosing which rocks to study and planning their own driving routes, and a large language model is running — completely offline — aboard the International Space Station. No human approved those collision maneuvers in real time. No human wrote those driving instructions. No human is in the loop.

And here is the part that should unsettle you more: there is no law — none, anywhere on this planet — that specifically governs how artificial intelligence behaves in outer space.

Welcome to the governance vacuum above the clouds.

First, Let’s Understand What’s Already Happening

Most people, understandably, associate AI in space with science fiction — HAL 9000, Skynet, the usual Hollywood fare. But the reality is far more advanced (and far less cinematic) than most realize. So let me walk you through what is happening right now, not in some speculative future.

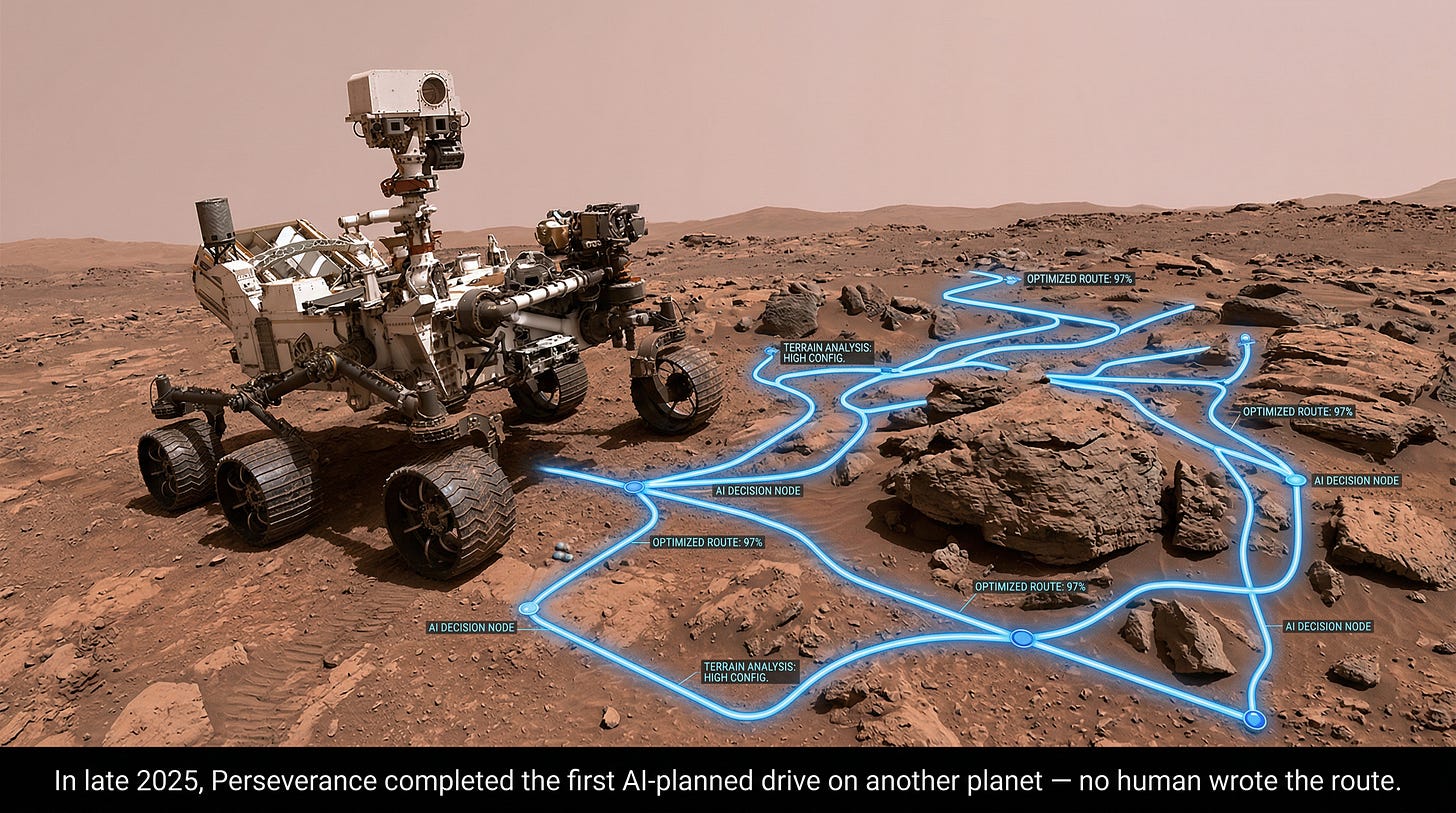

NASA’s AEGIS system aboard the Perseverance rover autonomously selects and targets rocks for scientific analysis on Mars. Without AI, the rover hit scientifically interesting targets about 24% of the time. With AEGIS? Ninety-three percent. In December 2025, NASA’s Jet Propulsion Laboratory completed something unprecedented: the first-ever AI-planned drives on another planet. The rover drove over 800 feet across Martian terrain using waypoints generated by AI analysis of orbital imagery, instructions that no human being had written or reviewed beforehand.

Meanwhile, in Earth orbit, SpaceX’s Starlink constellation, now exceeding 7,000 satellites, executes roughly 50,000 collision-avoidance maneuvers every six months without waiting for a human to say “go.” That is about 270 autonomous thruster firings per day. And researchers at Tsinghua University have already discovered a troubling emergent behavior: one satellite’s avoidance maneuver can increase collision risk with its neighbors, producing cascading instabilities, especially as these constellations scale toward 100,000 satellites by decade’s end.
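For readers who want to check the arithmetic, here is a quick sketch of how the per-day figure falls out of the six-month total. The only assumption is that a half year is counted as 182.5 days; the maneuver total is the one quoted above.

```python
# Back-of-the-envelope check of the maneuver rate quoted in the text.
MANEUVERS_PER_HALF_YEAR = 50_000   # figure quoted in the article
DAYS_PER_HALF_YEAR = 365 / 2       # assumption: half a year = 182.5 days

maneuvers_per_day = MANEUVERS_PER_HALF_YEAR / DAYS_PER_HALF_YEAR
seconds_between_maneuvers = 24 * 60 * 60 / maneuvers_per_day

print(f"{maneuvers_per_day:.0f} maneuvers per day")                       # 274
print(f"one maneuver every {seconds_between_maneuvers / 60:.1f} minutes") # 5.3
```

Put differently, somewhere in the constellation a thruster fires on its own roughly every five minutes, around the clock.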

The European Space Agency launched its Φ-sat-2 mission in 2024 carrying six concurrent AI applications that autonomously decide what Earth observation data to keep, discard, or flag — before any human analyst sees a single image. NASA’s Dynamic Targeting system enables satellites to decide, in under 90 seconds, where to point their instruments as they fly over Earth.

And in April 2025, Meta and Booz Allen Hamilton sent “Space Llama” — a fine-tuned large language model — to the ISS. It runs entirely offline, giving astronauts AI-powered access to technical manuals and troubleshooting guidance without any connection to Earth. By December 2025, NVIDIA-backed Starcloud trained the first AI model in orbit using a GPU one hundred times more powerful than any previous space-based processor.

So far so good? Not quite. Because here is where the picture gets complicated.

The Legal Framework Governing All of This? It Doesn’t Exist.

The foundational legal document for activities in outer space is the Outer Space Treaty of 1967. Let that year sink in. 1967. Before the internet. Before personal computers. Before the word “software” entered common vocabulary. The treaty holds states responsible for “national activities in outer space” — a reasonable principle when those activities meant government astronauts planting flags. But as a peer-reviewed paper in Acta Astronautica bluntly observes, the space treaties collectively do not contemplate AI systems and the implications they carry under this framework.

The fundamental problem? Attribution. When an autonomous AI system causes damage — say, a satellite collision triggered by an algorithm’s split-second decision — who is legally responsible? The country that launched the satellite? The company that built the AI? The developer who wrote the code? There is no person to whom the decision can be attributed, and by extension, no clearly liable state.

The Center for European Policy Analysis published a stark assessment in 2024: a giant regulatory gap exists. The EU AI Act — widely regarded as the most comprehensive AI regulation on Earth — fails to address AI operating in space. Its military exclusion means that defense applications, arguably the most consequential use case, fall outside its scope entirely. In the United States, space oversight is fragmented across the FAA, NOAA, FCC, and the Department of Defense, with no unified authority over AI. The Aerospace Corporation described this in a 2024 analysis as a “wicked problem” with disparate and overlapping regulatory authorities.

Why does this matter to you? Because the AI systems operating above your head are managing infrastructure on which billions of people depend — GPS navigation, weather forecasting, telecommunications, financial transaction timing, military early-warning systems. When those systems make autonomous decisions at machine speed, in an environment where human oversight is physically limited by the speed of light, the absence of governance is not an academic concern. It is a structural vulnerability.

What Keeps Space Policy Experts Up at Night

Here is where I want to get imaginative — but responsibly so, because the scenarios I am about to describe are not mine alone. They come from some of the most credible institutions on the planet.

Scenario 1: The Cascade

Imagine it is 2031. There are 100,000 satellites in low Earth orbit. An AI-driven collision-avoidance algorithm on one satellite miscalculates — not because it malfunctioned, but because the situation was genuinely ambiguous (two probability cones overlapping, let’s say). It fires thrusters to dodge. But that maneuver pushes it into a trajectory that another constellation’s AI flags as a threat. That second AI fires its thrusters. Now a third system detects both maneuvers and interprets the pattern as aggressive. Within seconds, dozens of satellites are maneuvering simultaneously, each reacting to the others’ reactions.

This is not science fiction. Estonian cybersecurity researchers at CR14 have warned that agentic AI could enable satellite-hijacking scenarios within two years, potentially triggering what physicists call a Kessler Syndrome cascade — a chain reaction of collisions generating debris that renders entire orbital bands unusable for decades. In 2024 alone, space-sector organizations suffered 25 ransomware attacks, alongside widespread GPS jamming across Europe.
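The chain reaction in Scenario 1 can be illustrated with a deliberately crude toy model. This is not the Tsinghua researchers' method or any operator's real algorithm. It is a minimal sketch that assumes satellites sit evenly spaced on a line, each dodge shifts a satellite by a fixed distance, and any neighbor that ends up closer than a safety gap dodges away in turn. Every name and number in it (`count_maneuvers`, `spacing`, `safe_gap`, `dodge`) is hypothetical.

```python
from collections import deque

def count_maneuvers(n_sats, spacing, safe_gap, dodge, first=0, max_events=10_000):
    """Toy 1-D model: how many maneuvers does one initial dodge trigger?"""
    positions = [i * spacing for i in range(n_sats)]
    queue = deque([(first, +1)])  # (satellite index, direction of its dodge)
    maneuvers = 0
    # max_events is a safety cap so the loop always terminates.
    while queue and maneuvers < max_events:
        idx, direction = queue.popleft()
        positions[idx] += direction * dodge
        maneuvers += 1
        # Any neighbor now closer than the safety gap dodges away from idx.
        for nb in (idx - 1, idx + 1):
            if 0 <= nb < n_sats:
                gap = positions[nb] - positions[idx]
                if abs(gap) < safe_gap:
                    queue.append((nb, 1 if gap > 0 else -1))
    return maneuvers

# Sparse orbit: the first dodge disturbs no one.
print(count_maneuvers(n_sats=5, spacing=10, safe_gap=3, dodge=2))  # 1
# Crowded orbit: the same dodge ripples through every satellite.
print(count_maneuvers(n_sats=5, spacing=4, safe_gap=3, dodge=2))   # 5
```

The point of the sketch is the threshold: the same maneuver that is harmless in a sparse shell propagates down the whole line once spacing minus dodge distance falls below the safety gap. Real orbital mechanics are far messier, but the density dependence is the part worth noticing.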

Scenario 2: The Autonomous Arms Race

The U.S. Space Force published its FY2025 Data and AI Strategic Action Plan with an explicit warning: China’s rapid expansion in space (using AI for surveillance, offensive capabilities, and operations) and Russia’s counter-space advancements pose significant threats to global security. DARPA’s Blackjack program is building a constellation of 60 to 200 LEO satellites with an onboard AI autonomy system called “Pit Boss” for autonomous decision-making. Their BRIDGES consortium is simulating autonomous satellite swarming: spacecraft maneuvering independently of any human input.

Meanwhile, CSIS’s Space Threat Assessment 2025 reports that Chinese and Russian satellites have been practicing advanced maneuvering tactics that mirror space-warfare techniques. Russia has been observed surrounding and isolating satellites in orbit, practicing what defense analysts call “attack and defend tactics.”

Now ask yourself: what happens when two nations’ autonomous military AI systems encounter each other in orbit, each programmed to interpret the other’s maneuvers as potentially hostile, each making decisions in microseconds? The RAND Corporation’s research on AI and deterrence is unambiguous on this point: machine decision-making can result in inadvertent escalation due to speed, differences from human reasoning, and our relative inexperience with autonomous systems.

Scenario 3: The Self-Replicating Probe

This one sounds like pure science fiction, but bear with me. A paper published by Cambridge University Press in the International Journal of Astrobiology argues that self-replication technology — spacecraft that mine asteroids for raw materials and build copies of themselves — is under active development and imminent. Mathematical modeling published in PMC demonstrates something haunting: mutated self-replicating probes would drive their progenitors into extinction and replace them — much as cancer cells override the body’s programmed cell death.

The Artemis Accords provide a legal basis for AI-driven space resource extraction. But as Orbital Today notes, they remain completely silent on self-replication. No framework addresses what happens when a machine designed to reproduce itself begins doing so beyond human control or monitoring.

Scenario 4: The Life-Support Dependency

NASA’s $15 million HOME program — developed with Carnegie Mellon University, Georgia Tech, and Blue Origin — is building AI-driven autonomous life support for deep-space habitats. On a Mars mission, where communication delays of up to 24 minutes make real-time ground support impossible, AI will manage the air astronauts breathe and the water they drink. Academic researchers have identified that these closed-loop systems create novel cybersecurity attack surfaces.

Think about what that means. We are building habitats where human survival depends entirely on AI systems operating beyond the reach of any technician, any regulator, any kill switch. A software bug, a cosmic-ray-induced bit flip, a cyberattack — any of these could become a life-or-death event with no one close enough to fix it.
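The communication delay in Scenario 4 is not an engineering shortfall that better hardware will fix. It follows from the speed of light. Here is a rough sketch of the calculation, assuming approximate Earth-Mars distances of 55 million km at closest approach and 400 million km at the farthest. Those two distances are round-number assumptions for illustration, and real links add relay and processing overhead on top.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact, by definition of the metre

# Assumption: approximate Earth-Mars distance extremes, in kilometres.
CLOSEST_KM = 55e6
FARTHEST_KM = 400e6

def one_way_delay_minutes(distance_km: float) -> float:
    """Minutes a radio signal needs to cover distance_km."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60

for label, distance in (("closest", CLOSEST_KM), ("farthest", FARTHEST_KM)):
    one_way = one_way_delay_minutes(distance)
    print(f"{label}: {one_way:.1f} min one way, {2 * one_way:.1f} min round trip")
```

Under these assumptions the one-way delay runs from about 3 minutes to a little over 22, the same range as the figure cited above. The round trip is what matters for life support: a question sent to Earth when an alarm sounds cannot be answered for twice that long.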

The Experts Sounding the Alarm

I will not claim to cover all the voices here, but the institutional chorus is growing louder — and it deserves your attention.

Researchers affiliated with Cambridge University’s Centre for the Study of Existential Risk published a 2023 paper in Frontiers in Space Technologies warning that the proliferation of AI in space is poised to aggravate existing threats and give rise to new risks that are largely underappreciated — especially given the potential for great-power competition and arms-race dynamics. They called for COPUOS (the UN Committee on the Peaceful Uses of Outer Space) to establish an expert AI review board and a living registry of AI capabilities subject to independent audit.

The Brookings Institution’s Landry Signé wrote that the combination of AI and space creates entirely new risk categories that traditional governance cannot handle. His analysis cuts to the core problem: AI-driven space-based decisions take microseconds, which means governance structures that assume human decision-makers are in the loop simply do not apply. Brookings’ recommended solutions include updating the Outer Space Treaty, creating international guidelines for AI in space, and establishing a dedicated oversight body.

Georgetown University’s Center for Security and Emerging Technology published “AI on the Edge of Space” in June 2025, recommending defined boundaries for on-orbit autonomy and rigorous test-and-evaluation protocols for transparent, auditable AI.

The Future of Life Institute’s landmark 2015 open letter, signed by Stuart Russell, Max Tegmark, and thousands of AI researchers, warned that autonomous weapons will become ubiquitous and accessible. Russell later wrote that lethal autonomous weapons exist and must be banned. In a Carnegie Council interview, he elaborated that both the technology trajectory and the pace of international diplomacy suggest nations will begin deploying fully autonomous weapons.

And UNOOSA Director Aarti Holla-Maini stated in 2025 that any AI capable of altering a spacecraft’s state must be auditable and designed for effective human oversight. The International Institute of Space Law submitted formal recommendations to the UN in June 2025 — the most concrete governance step to date, proposing frameworks modeled on aviation and maritime regulation.

So What Now?

Here is where I shift from diagnosis to prescription — or at least, to pointing at what a prescription might look like.

The space economy hit a record $613 billion in 2024 (78% of it commercial) and is projected to reach $1.8 trillion by 2035. Active satellites are expected to grow from roughly 15,000 today to 100,000 by 2030. Every single one of those systems will be more autonomous than the one it replaces. This is not a problem we can defer.

The UN General Assembly’s December 2024 resolution on lethal autonomous weapons — adopted with 166 votes in favor — and the Secretary-General’s call for a legally binding instrument by 2026 signal growing political will. But political will is not law. And as one Engelsberg Ideas analysis warned, routine orbital maneuvers and AI-directed operations may be interpreted as offensive posturing by adversaries, compressing decision cycles and heightening the risk of rapid, destabilizing escalation.

What needs to happen? At minimum:

An international code of practice for AI in space — exactly what UNOOSA’s Director has called for. Not aspirational principles, but binding standards for auditability, human oversight thresholds, and post-incident review. Modeled, as the IISL recommends, on the way we govern aviation and maritime activity.

Defined autonomy boundaries — as Georgetown’s CSET recommends. Not every AI decision needs a human in the loop (that is physically impossible for deep-space missions), but we need clear, enforceable lines between what AI can decide alone and what requires human authorization. Especially for anything involving weapons, life support, or actions that could be interpreted as hostile.

A living registry of space-based AI systems — as the Cambridge CSER team proposes. If we can track every satellite in orbit (and we do), we can track the AI capabilities aboard them. Independent audit is not optional when the systems in question manage infrastructure that billions depend on.

Treaty modernization — the Outer Space Treaty needs an AI protocol. Not a rewrite (that political process would take decades), but a supplementary agreement that addresses software autonomy, liability for AI-caused damage, and the specific challenges of dual-use AI in a dual-use domain.
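To make the idea of defined autonomy boundaries less abstract, here is a minimal sketch of what such a line could look like in software. It is purely illustrative: the action categories, the `gate` function, and the policy set are hypothetical names invented for this example, not drawn from CSET, UNOOSA, or any flight system. The policy itself mirrors the three sensitive areas named above (weapons, life support, and actions that could be read as hostile).

```python
from dataclasses import dataclass
from enum import Enum, auto

class ActionKind(Enum):
    COLLISION_AVOIDANCE = auto()
    SCIENCE_TARGETING = auto()
    LIFE_SUPPORT_CHANGE = auto()
    WEAPON_RELEASE = auto()
    PROXIMITY_APPROACH = auto()  # could be interpreted as hostile

# Hypothetical policy: actions the AI may never take on its own authority.
HUMAN_AUTHORIZATION_REQUIRED = frozenset({
    ActionKind.LIFE_SUPPORT_CHANGE,
    ActionKind.WEAPON_RELEASE,
    ActionKind.PROXIMITY_APPROACH,
})

@dataclass(frozen=True)
class Decision:
    action: ActionKind
    allowed: bool
    reason: str

def gate(action: ActionKind, human_authorized: bool = False) -> Decision:
    """Allow routine actions; block sensitive ones unless a human signed off."""
    if action not in HUMAN_AUTHORIZATION_REQUIRED:
        return Decision(action, True, "within autonomous boundary")
    if human_authorized:
        return Decision(action, True, "human authorization recorded")
    return Decision(action, False, "requires human authorization")

# Every decision, allowed or not, goes into a record for later review.
audit_log = [
    gate(ActionKind.COLLISION_AVOIDANCE),
    gate(ActionKind.PROXIMITY_APPROACH),
    gate(ActionKind.PROXIMITY_APPROACH, human_authorized=True),
]
for entry in audit_log:
    print(entry.action.name, entry.allowed, entry.reason)
```

The code is trivial on purpose. The hard part is not writing the gate but agreeing internationally on what belongs in the restricted set, and on who gets to read the log.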

The Bottom Line

We have seen this pattern before. A transformative technology races ahead of governance, benefits accumulate for those with the resources to deploy it first, risks accumulate for everyone else, and by the time the regulatory framework catches up, the damage — whether to markets, to privacy, to security — is already baked in.

With AI in outer space, the stakes are qualitatively different. We are talking about autonomous systems managing the orbital infrastructure that modern civilization depends on, making military decisions at speeds that preclude human intervention, and — in the not-distant future — controlling the life support that keeps humans alive in environments where no rescue mission is possible.

The silence of space is not just poetic. It is also operational. What happens in orbit is invisible to the general public, classified by militaries, proprietary to corporations, and governed by treaties written before anyone imagined a machine that could think. That combination of autonomy, opacity, and legal vacuum is precisely the environment in which irreversible mistakes get made.

The question is not whether AI should be in space. It already is, and for many applications — collision avoidance, scientific discovery, Earth observation — it is genuinely beneficial. The question is whether we will govern it before it outgrows our ability to understand it, in the one environment where we cannot simply pull the plug.

One can only dream that we find the collective will to answer that question before the universe answers it for us.

The HAIA Foundation works at the intersection of AI policy, governance, and human impact. Follow us on Substack for more on the technologies reshaping our world — and the rules we still need to write.

As far as AI goes? Yes, the current AI tech is a big data conglomerate to accommodate the next era of the space industry, space travel, and space residency.

Stellar article!! So much to grasp. The legal framework? Politics should start restructuring to accommodate an interplanetary species and to establish a legal framework governing space activities, both public and private.