The Second Chance: How AI Could Return What the Industrial Revolution Stole

Or how we might squander it all over again

In 1930, John Maynard Keynes made a prediction that now reads like science fiction. Within a century, he wrote, technological progress would solve “the economic problem” and our grandchildren would work perhaps fifteen hours a week—spending the rest of their time on leisure, culture, and the people they love.

We are now five years from that deadline. How did we do?

Not well. Americans work more hours annually than workers in any other wealthy nation. Eighty percent report feeling “time poor.” Parents describe their schedules in the language of combat—juggling, surviving, barely keeping heads above water. The fifteen-hour workweek remains a punchline, not a policy goal.

But here is where things get interesting. Artificial intelligence has emerged as a technology capable of automating not just manual labor (we have had that for two centuries) but cognitive work—the reports, the scheduling, the emails, the soul-crushing administrative tasks that colonize modern life. The productivity gains are real and measurable. The question that will define the next generation is brutally simple: Will we use AI to reclaim our time, or will we let corporations capture those gains while the rest of us work harder than ever?

This is not a rhetorical question. Both futures are already unfolding simultaneously.

What the Factories Took

Before we talk about what AI might give back, we need to understand what was taken.

Prior to industrialization, most families worked together. The cottage industry system meant parents and children labored side by side in their homes, with task-oriented rhythms dictated by seasons and sunlight rather than factory whistles. Medieval workers enjoyed roughly one-third of the year off through church holidays and festivals. France’s ancien régime is reported to have guaranteed so many rest days and holidays that people worked only about 185 of the year’s 365 days.

Then came the factories, and everything changed.

Working hours effectively doubled, from roughly 1,500 to 2,300 hours a year to 3,100 to 3,600 during peak industrialization. Twelve- to sixteen-hour days became standard, six days a week, with virtually no paid holidays. Children as young as four entered the mills; by the 1820s, half of English workers were under age twenty. The 1833 Factory Act, considered a progressive reform at the time, still permitted children aged nine to thirteen to work forty-eight hours a week.

But the deeper theft was not just hours. Historian E.P. Thompson documented how industrial capitalism transformed humanity’s relationship with time itself—shifting from natural, task-oriented patterns where social life and labor intermingled to rigid clock-regulated factory discipline. As Britannica notes, industrialization “radically disrupts this more or less autonomous family economy. It takes away the economic function of the family, and reduces it to a unit of consumption and socialization.”

Parents went to factories. Children went to different factories (or eventually, schools designed on factory models). The family as a working unit—a team that produced together, ate together, rested together—dissolved into isolated individuals selling hours to employers.

We have been trying to claw back that time ever since.

The Evidence for Hope

So what does the actual evidence say about whether shorter work hours are feasible? Quite a lot, actually—and it is remarkably encouraging.

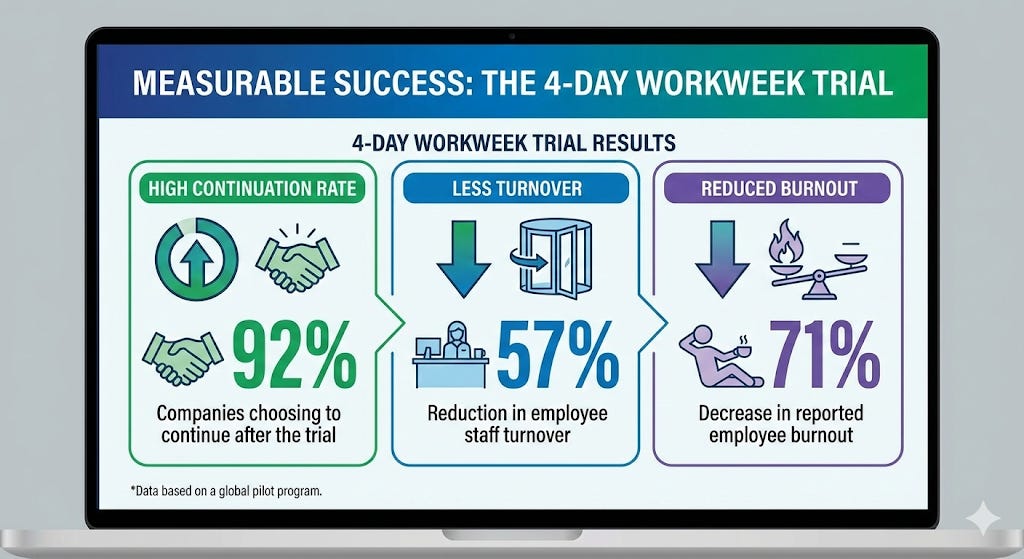

The UK’s four-day workweek trial in 2022 was the world’s largest: sixty-one companies, 2,900 workers, six months. The results defied skeptics. Ninety-two percent of participating companies continued with four-day weeks after the trial ended. Revenue rose 1.4 percent on average over the course of the trial and was thirty-five percent higher than in comparable periods of previous years. Staff turnover dropped by fifty-seven percent. Sick days fell by sixty-five percent. Seventy-one percent of workers reported reduced burnout.

One year later, eighty-nine percent of companies were still operating four-day weeks, with over half declaring the change permanent. Fifteen percent of employees said no amount of money would convince them to return to five days.

Iceland’s experiment is even more striking. After trials running from 2015 to 2019, fifty-one percent of the entire Icelandic workforce now works shorter hours. The country’s GDP grew five percent in 2023—second only to Malta among wealthy European economies.

Now layer AI productivity gains on top of this. Research from the St. Louis Federal Reserve found that workers using generative AI saved 5.4 percent of their work hours and were roughly thirty-three percent more productive during the hours they used it. A Stanford and World Bank survey found AI reduced time for common work tasks by more than sixty percent on average; writing tasks that took eighty minutes dropped to twenty-five.
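For readers who want to check the writing-task figure, here is a minimal sketch of the arithmetic. The helper name `percent_reduction` is purely illustrative, and the only inputs are the eighty and twenty-five minutes quoted above.

```python
def percent_reduction(before_minutes: float, after_minutes: float) -> float:
    """Return the percentage drop in time from before_minutes to after_minutes."""
    return (before_minutes - after_minutes) / before_minutes * 100


# Figures quoted in the text: an 80-minute writing task done in 25 minutes.
writing_drop = percent_reduction(80, 25)
print(f"Writing tasks took {writing_drop:.2f}% less time")
```

The drop works out to just under sixty-nine percent, which is consistent with the survey's "more than sixty percent" average across tasks.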

Business leaders are taking notice. Jamie Dimon, CEO of JPMorgan Chase, predicted in late 2025 that developed economies would be working three-and-a-half-day weeks within a few decades. Bill Gates has suggested AI could enable a two-day workweek within ten years.

The math works. The technology exists. The trials prove it is viable.

So why am I worried?

The Warning Signs

Because there is another set of data—equally rigorous, far more troubling—showing that AI is currently being deployed to intensify work rather than liberate workers from it.

A 2025 study published by the Centre for Economic Policy Research found something remarkable: workers in AI-intensive occupations have increased their weekly hours relative to less-exposed jobs. Moving from the twenty-fifth to seventy-fifth percentile in AI exposure correlates with working 3.15 additional hours per week.

Read that again. The workers most exposed to “labor-saving” AI are working longer, not shorter.

An Upwork study revealed the mechanism. Seventy-seven percent of employees using AI report it has added to their workload. Thirty-nine percent spend more time reviewing and moderating AI-generated content. Twenty-one percent are being asked to do more work directly because of AI tools. Meanwhile, twenty percent of C-suite executives explicitly expect employees to work longer hours due to AI.

This is the bait-and-switch. Corporations adopt AI tools, capture the productivity gains, and rather than reducing hours or sharing profits, they lay off workers and demand more from those who remain—or they deploy the technology to monitor remaining employees with unprecedented precision.

The surveillance economy has exploded. Seventy-four percent of US employers now use online tracking tools. Sixty-one percent use AI-powered monitoring to evaluate staff performance. Fifty-nine percent of employees report stress or anxiety from workplace surveillance. The global “bossware” market hit $587 million in 2024 and is projected to reach $1.4 billion by 2031.

Shoshana Zuboff, Harvard Business School professor emerita and author of The Age of Surveillance Capitalism, captures the dynamic: “‘Innovation’ is a dog whistle that says ‘don’t pass any laws.’ What’s really meant by innovation is preservation of the status quo, enabling the architects of AI to keep driving toward their commercial objectives.”

Daron Acemoglu, the MIT economist who won the 2024 Nobel Prize, warns that AI’s current trajectory is simply wrong: “We’re using it too much for automation and not enough for providing expertise and information to workers.”

Why This Matters: The Case for Time Wealth

Here is why this is not merely an economic debate but a profoundly human one.

Ashley Whillans, a Harvard Business School professor who studies time and happiness, has documented that “time affluence”—the feeling of having control and enough time on an everyday basis—independently predicts happiness even after controlling for income. Her research shows that people who prioritize time-saving purchases over material goods report greater life satisfaction, with the least wealthy participants benefiting most.

The implications for families are direct. Research consistently shows that parental presence buffers children against stress, that educational “active time” with parents is the most important determinant of childhood development, and that insufficient parent-child quality time is associated with lower flourishing in young children.

But here is the crucial nuance: quality matters more than quantity. Studies from Melissa Milkie at the University of Toronto found that the actual amount of time mothers spend with young children does not impact development nearly as much as how they spend time together. Time spent with stressed, anxious, sleep-deprived parents can actually harm children.

This is the synthesis that makes AI’s potential so significant. Technology that reduces time burdens could enable parents to be more present, less exhausted, and capable of higher-quality interactions—addressing precisely what the research shows benefits children most.

Consider the “second shift” that sociologist Arlie Hochschild famously documented. American mothers bear 27.2 hours weekly of combined childcare and housework—more than double fathers’ 12.9 hours. Women have thirteen percent less free time than men overall; women aged thirty-five to forty-four have twenty-three percent less.

Household automation has precedent. Appliances reduced housework from fifty-eight hours weekly in 1900 to eighteen hours by 1975—freeing forty hours. Oxford researchers project AI could automate up to forty percent of remaining household chores within the next decade. If realized, that represents hours returned directly to family presence.

Two Futures

Let me paint two pictures of 2035, because both are genuinely possible.

The first future: Parents work four days weekly on creative tasks that machines genuinely cannot do. AI handles meal planning, scheduling, and routine household coordination. A modest universal basic income covers baseline needs, funded by taxes on AI-generated productivity. The extra day off is spent with children, at community spaces, pursuing whatever humans find meaningful when survival is no longer a daily struggle. Companies that adopted four-day weeks a decade ago now dominate talent acquisition; the five-day holdouts cannot compete for workers.

The second future: Those who still have jobs monitor AI systems that track other workers’ productivity. Algorithms schedule shifts unpredictably, making reliable childcare impossible. The AI that promised freedom instead watches every keystroke, every bathroom break, every moment of “unproductive” thought. Neighbors laid off from white-collar jobs survive on meager benefits while corporations post record profits. Working parents see their children primarily through exhaustion. The leisure Keynes predicted went to shareholders.

Both scenarios extrapolate from current trends. The difference between them is not technological—it is political.

The Determining Variable

Since 1973, the United States has taken less than eight percent of productivity gains as reduced hours, while Western European countries have taken three to four times that amount. American workers put in 400 more hours annually than German workers—roughly ten extra weeks per year. The difference is not technology. It is policy, union strength, and collective choice.
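The "ten extra weeks" figure is a simple conversion, sketched below. It assumes a standard forty-hour full-time week, which the text does not state explicitly, and the function name `extra_work_weeks` is illustrative.

```python
def extra_work_weeks(extra_hours_per_year: float, hours_per_week: float = 40.0) -> float:
    """Convert extra annual hours into equivalent full-time work weeks.

    hours_per_week defaults to 40, an assumed standard full-time week.
    """
    return extra_hours_per_year / hours_per_week


# Figure quoted in the text: Americans work about 400 more hours a year than Germans.
print(extra_work_weeks(400))  # 10.0
```

With a shorter assumed week the gap looks even larger: at thirty-five hours, the same 400 hours is more than eleven weeks.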

Juliet Schor, the Boston College economist who led the four-day workweek research and whose new book Four Days a Week synthesizes this evidence, captures the central tension: “The impact of AI on work is ultimately a question about control—at many levels. Control over how the technology is used. Control over who reaps its rewards.”

The Autonomy Institute, a UK think tank, projects that twenty-eight percent of the British workforce could work thirty-two-hour weeks by 2033 thanks to AI—if societies choose to distribute gains that way. Organizations like 4 Day Week Global are proving the model works across industries and countries.

This is where you come in. Not as a passive observer of technological change, but as a citizen capable of demanding that productivity gains translate into time—time for your children, your parents, your communities, yourself.

The Industrial Revolution stole family time through a historic rupture between work and home. Two centuries later, we have a technology capable of returning what was taken. Whether it does so depends entirely on whether we insist upon it—through the policies we support, the companies we work for, and the futures we refuse to accept.

AI can give us back our time.

But only if we take it.

The HAIA Foundation explores how emerging technologies can serve human flourishing rather than diminish it. Subscribe to our Substack for more on AI, ethics, and the futures we are building together.

Utopia can only be achieved by honestly dealing with our egos for the greater good, and by having a politics that serves the same purpose. The human ego has for so long sought the upper hand, a step ahead of the individuals around it. Dystopia is more about giving owners a run for their ownership and assets. Earth might be heading toward dystopia. Utopia is achievable as planetary colonies gradually expand and human self-sufficiency is reached, some many hundreds of years down the road.