The Quiet Grief of the AI-Augmented Worker

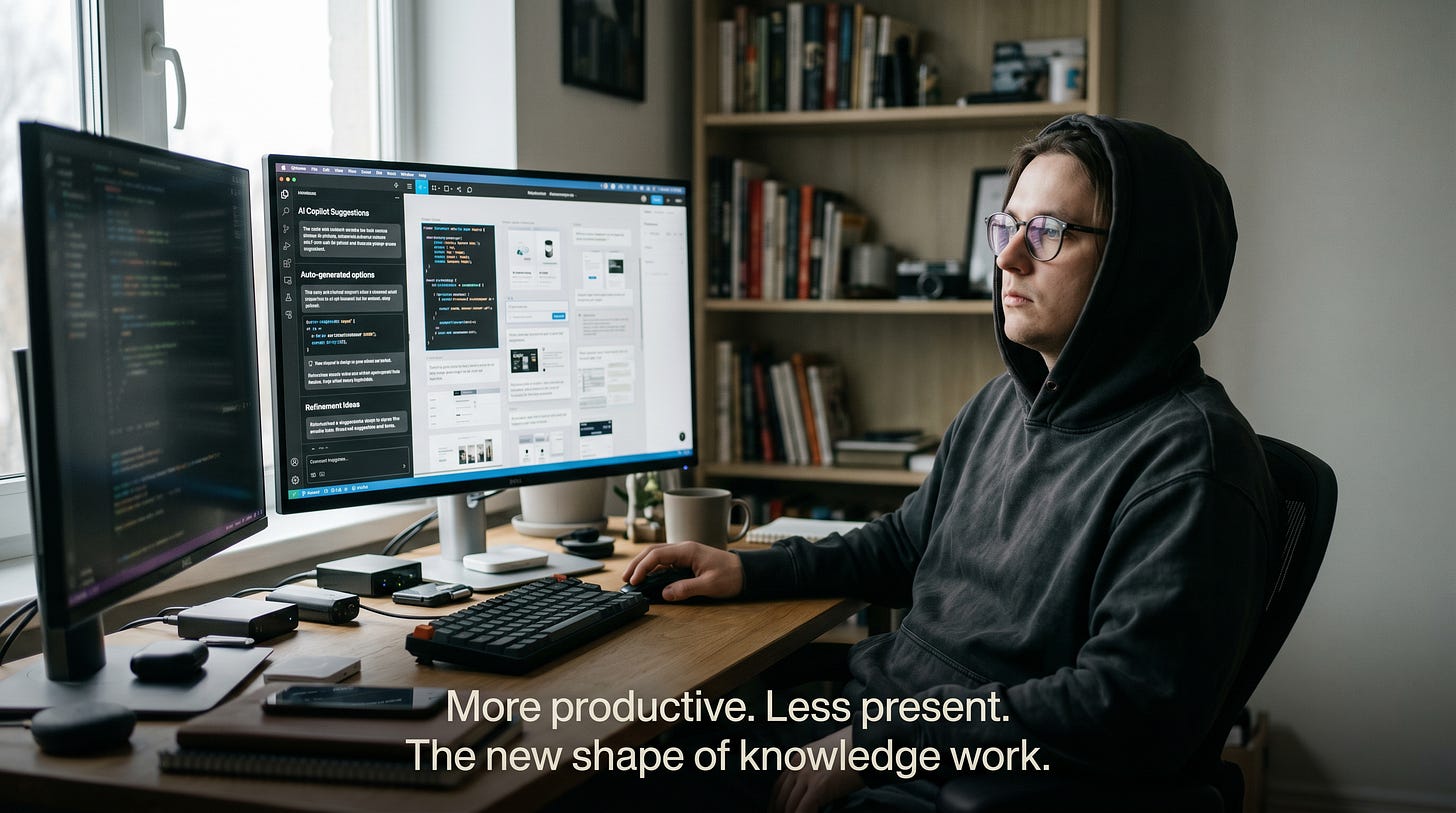

Why so many software engineers, designers, writers, and illustrators feel hollow — even as their output goes up

A friend of mine — senior engineer, fifteen years in, the kind of person who used to talk about a clever recursive solution the way other people talk about a vintage Bordeaux — called me a few months ago and said something I haven’t been able to shake.

“I’m faster than I’ve ever been. And I’ve never enjoyed the job less.”

He wasn’t complaining about a bad project. He wasn’t burned out in the usual sense. He had access to the best AI tools money can buy, ship velocity that would make his 2015 self weep with envy, and a manager who loves him. And yet — somewhere in the daily ritual of prompt, accept, prompt, accept, review, accept — the thing that used to feel like his had quietly become the thing he supervises.

He’s not alone. Not by a long shot.

This article is about a phenomenon that doesn’t yet have a clean name — the slow draining of meaning from work that AI is supposed to be making better. It’s not a doom story. It’s not a tech-panic story. It’s something more interesting (and, I think, more urgent): a story about what happens to people when the parts of work that gave them flow, pride, and identity are gradually outsourced to a very capable machine.

Let’s get into it.

So what’s actually happening?

The data here is uncomfortable. One of the most rigorous studies of AI’s effect on experienced developers, a randomized trial run by METR in mid-2025, took 16 experienced open-source developers, gave them top-tier AI tooling, and measured how long they took on real tasks from their own codebases with and without it. The developers predicted AI would speed them up by 24%. After the experiment, they believed it had sped them up by 20%. They were actually 19% slower.

Read that again. They felt faster while being measurably slower. That gap — between perceived productivity and actual productivity — is, in many ways, the whole story.

Microsoft Research and Carnegie Mellon found something related in a survey of 319 knowledge workers: the more confidence workers placed in AI, the less critical thinking they reported doing. Their effort shifted from information gathering to information verification, and from problem-solving to integrating AI responses. In plain English: from thinking to checking.

And a 2025 study of 666 UK participants found a strong negative correlation between heavy AI use and critical-thinking ability — strongest among the youngest users (17–25), the very group that will spend the longest careers in this environment.

So far, so unsurprising. People who use AI a lot offload more thinking. They become faster (or feel faster). They check more and create from scratch less. So what’s the harm? Isn’t this just the next stage of using tools?

Here is where things get interesting.

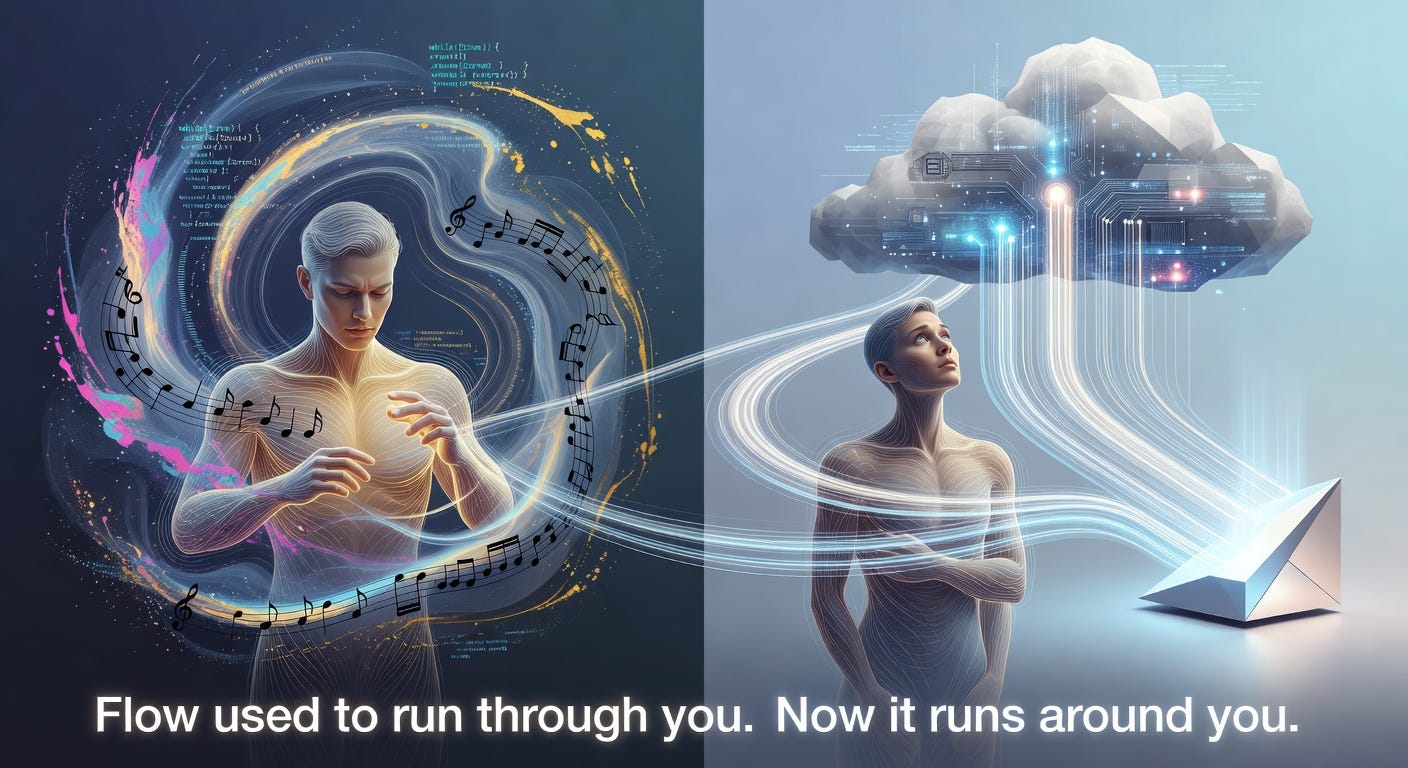

The disappearance of flow

Mihály Csíkszentmihályi spent his career studying flow: the state in which a hard problem meets your skill at exactly the right angle, time disappears, and you produce your best work because you’ve become temporarily inseparable from it. Of the conditions flow depends on, one matters most here: a challenge slightly above your current skill, which you face directly.

AI doesn’t break flow by being annoying. AI breaks flow by removing the challenge.

When the hard part of writing a function, a paragraph, a melody, or a layout is handled by a model, the human is left with the evaluation part — which is cognitively important but emotionally thin. You don’t get the satisfaction of having wrestled something into shape. You get the bureaucratic pleasure of having approved a draft.

Cal Newport, in a piece for The New Yorker, put it about as well as anyone has:

“The feeling of strain is often a by-product of getting smarter. To minimize this strain is like using an electric scooter to make the marches easier in military boot camp; it will accomplish this goal in the short term, but it defeats the long-term conditioning purposes of the marches.”

Paul Graham, less gently, describes the world we’re heading into as one of “writes and write-nots.” His premise is that “writing is thinking,” so the people who let AI do their writing are gradually letting AI do their thinking too.

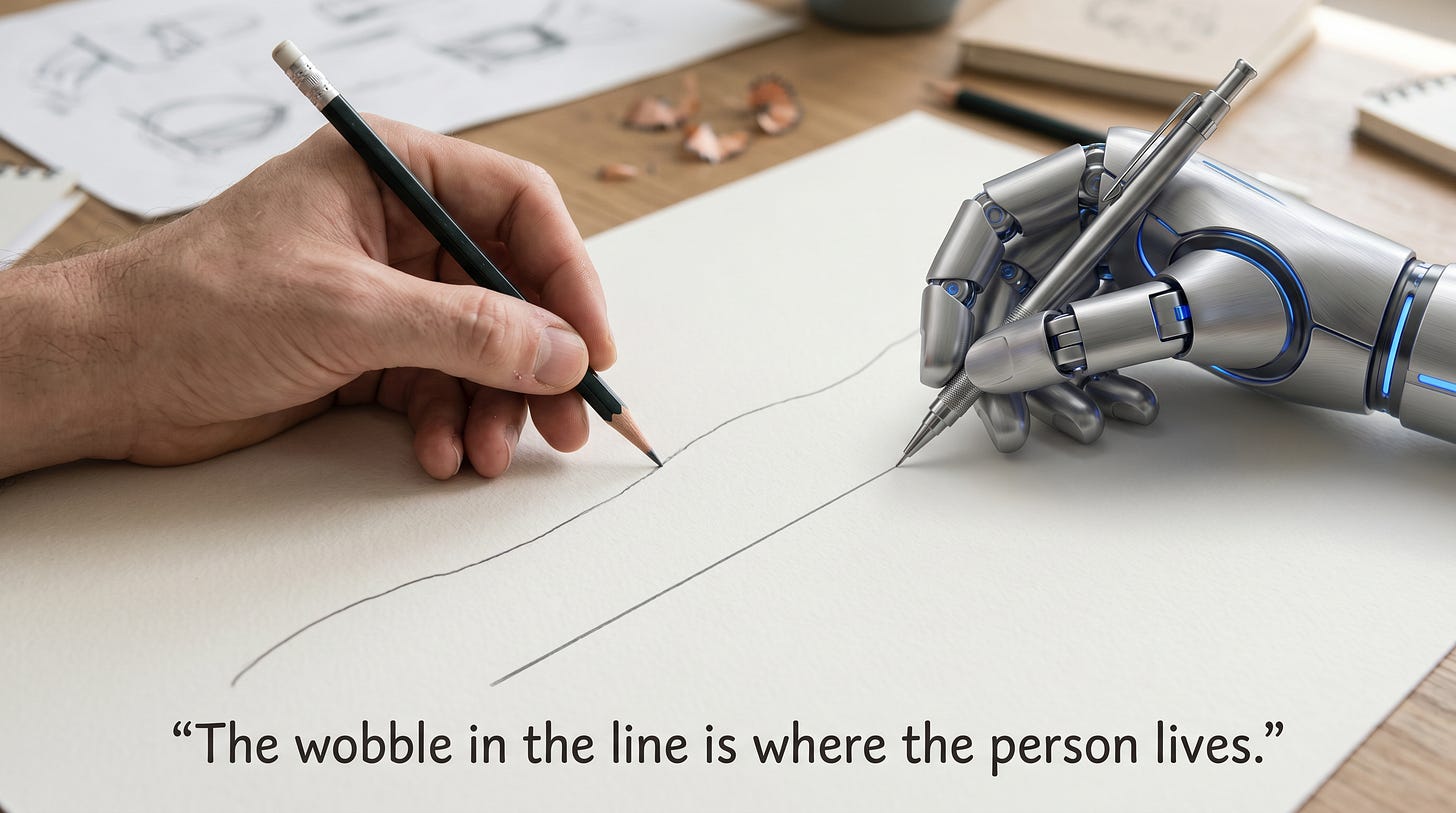

And then there’s the science fiction writer Ted Chiang, with what may be the cleanest sentence ever written about creative work and AI:

“Your first draft isn’t an unoriginal idea expressed clearly; it’s an original idea expressed poorly, and it is accompanied by your amorphous dissatisfaction, your awareness of the gap between what it says and what you want it to say.”

That gap — that amorphous dissatisfaction — is exactly what AI promises to spare you. And it’s exactly the thing that was making you a writer in the first place.

The empirical bruise

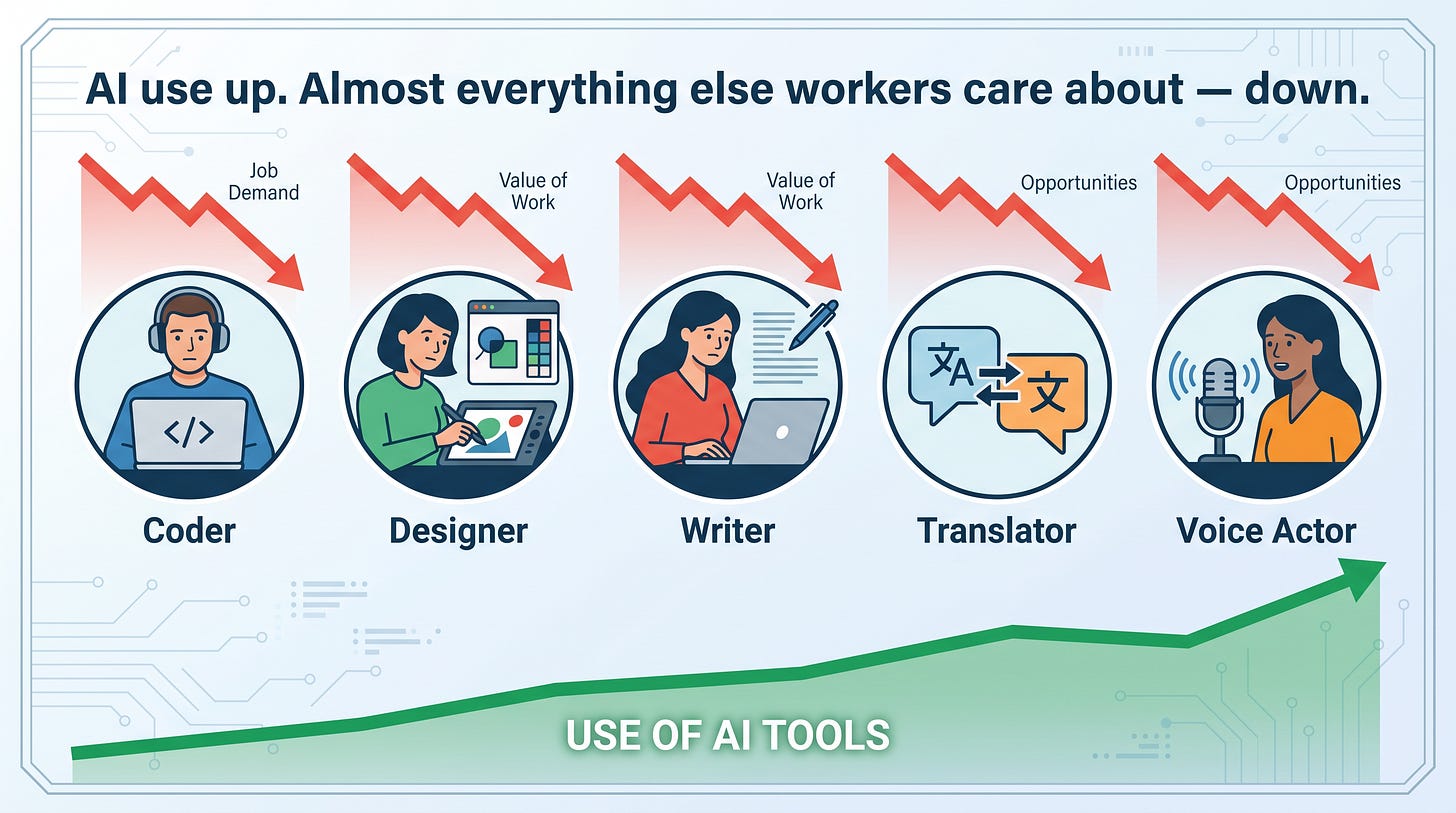

This is the part I find hardest to look away from. Across many of the serious studies from the last 18 months, a pattern keeps recurring: AI use is rising, while the things workers say matter most to them are falling.

The 2025 Stack Overflow Developer Survey, the largest annual census of programmers, found 84% of respondents using or planning to use AI tools, while only 33% said they trust the accuracy of what those tools produce. Two thirds named their biggest frustration as AI solutions that are “almost right, but not quite.” (To be clear: AI helps. They just don’t trust it, and cleaning up after it isn’t the fun part.)

Atlassian’s 2025 developer survey found that 63% of developers feel their leaders don’t understand their pain points — up from 44% the year before. Managers are banking the AI time savings. The friction is staying with the engineers.

The Upwork Research Institute’s “From Burnout to Balance” study, which every executive should read, found that 77% of employees say AI tools have increased their workload, 71% are burned out, and 1 in 3 plan to quit within six months. Meanwhile, 96% of C-suite leaders expect AI to boost productivity.

Research published in Harvard Business Review coined a perfect new word: workslop, meaning AI-generated content that looks finished but isn’t. Forty percent of the U.S. desk workers surveyed had received it in the past month, and each instance took almost two hours to clean up. The polite phrase is “the burden shifts downstream.” The honest phrase is “your colleague used AI to make their problem your problem.”

Gallup’s 2025 State of the Global Workplace report shows global engagement falling for the second year running, from 23% in 2022/23 to 20% in 2025. Manager engagement has dropped from 30% to 22% in three years.

What does this mean for you, if you do this kind of work? It means your discomfort isn’t a personal failing. It isn’t a sign you’re behind the curve. It’s one of the best-documented psychological patterns in the modern workplace, and it’s happening to a great many people.

What this looks like in the wild

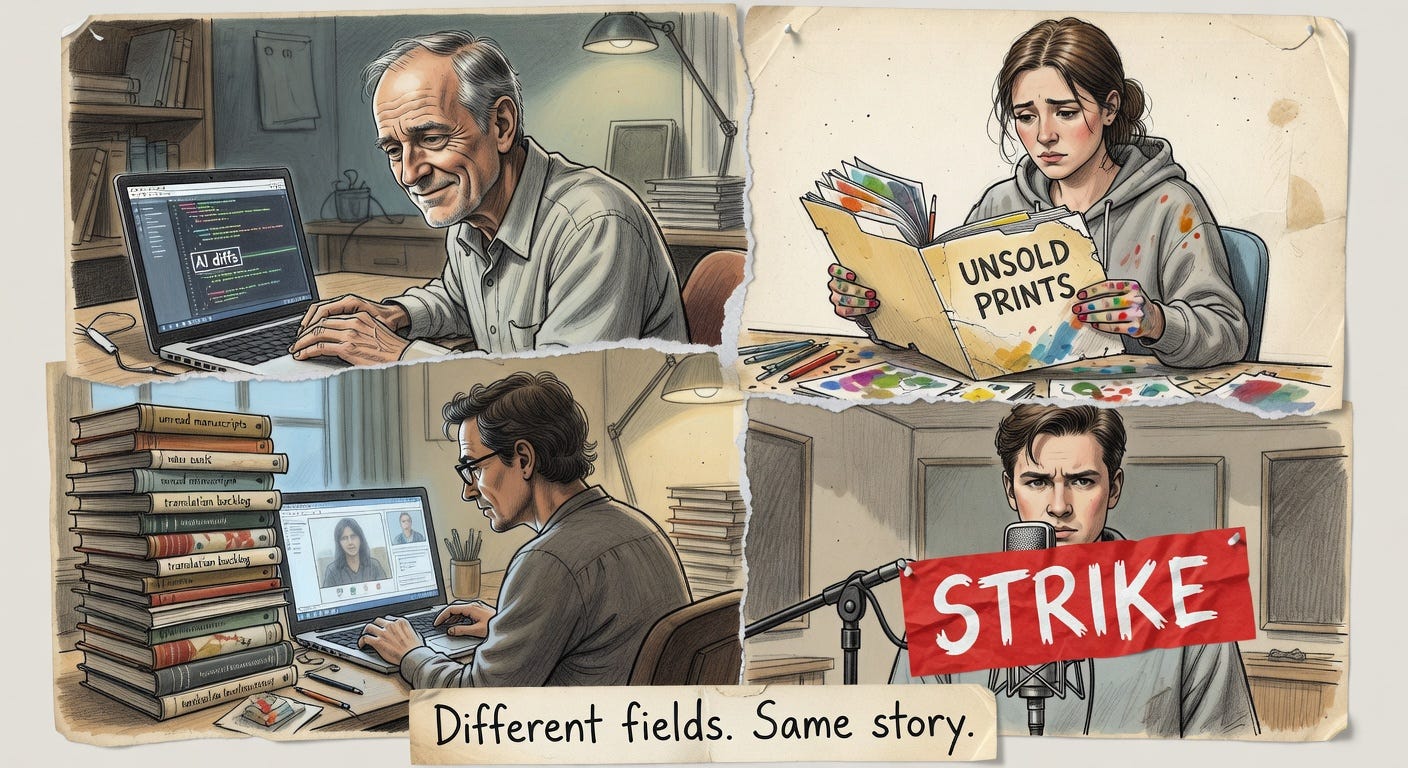

The numbers above describe a forest. Let me show you four trees.

The senior dev. Carla Rover, a 15-year veteran quoted in coverage of Fastly’s 2025 developer research, described in interviews spending “30 minutes sobbing after having to restart a project I vibe coded… It freaked me out because it sounded like a toxic coworker.” Or as a younger developer, Elvis Kimara, put it in the same piece: “There’s no more dopamine from solving a problem by myself.”

The illustrator. Karla Ortiz, a concept artist whose work has shaped Marvel films you’ve probably seen, testified before the U.S. Senate Judiciary Committee: “The fun parts of my job, the things that make artists live and breathe — all of that is outsourced to a machine.” The Society of Authors UK survey found 26% of illustrators and 36% of translators have already lost work to generative AI.

The voice actor. Jennifer Hale — the voice of half the games you’ve played — told Variety: “AI is just a tool like a hammer. If I take my hammer, I could build you a house. I can also take that same hammer and I can smash your skin and destroy who you are.” Roughly 2,600 voice actors and motion-capture performers struck for almost a year over precisely this issue.

The viral phrase that says everything. Author Joanna Maciejewska wrote the line that may end up defining this moment: “I want AI to do my laundry and dishes so that I can do art and writing, not for AI to do my art and writing so that I can do my laundry and dishes.”

That line went viral for a reason. It captures the bait-and-switch. The promise was: AI takes the drudgery, you keep the meaning. The reality, for an enormous number of working people, has been the opposite.

The career ladder is becoming a career cliff

A quick note on the economics, since you can’t talk about meaning without at least nodding at survival.

Erik Brynjolfsson and colleagues at Stanford published a paper in 2025 with a perfectly chilling title: “Canaries in the Coal Mine? Six Facts about the Recent Employment Effects of Artificial Intelligence.” The headline finding: employment of software developers aged 22–25 has fallen roughly 20% from its late-2022 peak. Early-career employment in the most AI-exposed occupations has fallen by 13% relative to less-exposed roles. Meanwhile, employment for mid-career and senior workers in those same occupations has held steady or grown.

In short: the bottom rung of the ladder is being sawn off. McKinsey reports that 51% of organizations now say generative AI is reducing their need for entry-level roles. The traditional path — junior to mid to senior — assumes there’s something to be junior at. If the cognitively boring tasks that taught you the craft are now done by a model, the question becomes: how do you ever get good?

This matters for the meaning argument, too. It’s hard to feel like a craftsperson when there’s no apprentice tier left to come up through.

To be clear: AI isn’t always the villain

I want to be careful here. I’m not in the camp that thinks AI tooling is bad, or that everyone using it is being deskilled. That would be both untrue and, frankly, dishonest about my own use.

GitHub’s research found 60–75% of Copilot users felt more fulfilled, and 73% reported staying in flow more easily. Microsoft’s 2024 Work Trend Index found 83% of users report enjoying their work more with AI. Armin Ronacher, the creator of Flask, wrote a thoughtful essay in late 2025 saying he genuinely prefers leading AI agents to typing code himself — and wants to hire more humans because of the energy it frees up.

So the picture isn’t “AI ruins work.” The picture is this: AI is a profoundly powerful tool that, used carelessly or under management pressure, has a strong tendency to strip away the cognitively rewarding parts of work and leave the verification parts behind. Used carefully, it can do the opposite.

The variables are intent, environment, and how much agency the worker has over the how. Which brings us neatly to what to do about it.

What you can do (as a person)

Self-Determination Theory, one of the most extensively validated frameworks in the psychology of motivation, says intrinsic motivation depends on three things: autonomy (you choose how you work), competence (you experience yourself as good at something hard), and relatedness (you do the work alongside other humans who matter to you). AI used badly thwarts all three. AI used well can support all three.

A few things that seem to actually help, drawn from people who’ve thought about this carefully:

Keep an “AI-free” zone. Not your whole job — just the parts where the thinking is the point. The first draft. The architecture sketch. The rough character design. The melody. Strain isn’t the obstacle; it’s the point.

Refuse “Accept All.” It’s a small, almost trivial habit. But the moment you stop reading the diffs, you’ve quietly stopped being the engineer.

Maintain at least one craft AI can’t yet do well, and practice it deliberately. Live conversation, longhand writing, sketching from life, sight-reading, playing in a band. The point isn’t to be a Luddite; it’s to keep the muscle of doing-things-yourself attached to your sense of self.

Watch the gap between feeling productive and being productive. The METR developers finished the study believing AI had sped them up by 20%, when it had actually slowed them down by 19%: a 39-point gap. When something feels too easy, it might be, and that ease may carry a cost you’ll only notice six months later.

Talk about it with peers. Burnout and disengagement metastasize in private. Engagement is fundamentally relational.

What we should do (as a society)

The individual moves matter. They’re not enough.

A few directions where the conversation is heading, and where I think it should go faster:

Real protections for human-made creative labor. The Writers Guild of America, after a 148-day strike, won contractual language saying AI cannot write or rewrite literary material, and that AI-generated material is not “source material.” The SAG-AFTRA agreements for video game performers now require consent for digital replicas. The Authors Guild model AI clauses provide template language for translators, narrators, and writers. None of this is anti-technology. All of it is pro-worker. We need a lot more of it.

Disclosure and labeling. A “human-made” or “AI-assisted” disclosure regime — voluntary at first, regulatory eventually — is coming whether industries like it or not. The market for genuinely human work isn’t going away. It just needs to be findable.

Tax and policy that doesn’t subsidize the wrong thing. The Nobel laureate Daron Acemoglu and his colleagues have estimated that the U.S. tax code effectively taxes labor at roughly 25% and equipment and software at roughly 5%. That gap is a structural incentive to automate even when automation produces only marginal productivity gains. This is a fixable problem if we want it fixed.

Education that protects the strain. Tyler Cowen — about as pro-AI as economists get — has nonetheless argued that students should still spend the majority of their time writing and thinking without AI, because the strain is what builds the mind. Cal Newport has been making the same case from the other direction. They’re both right.

Worker voice in AI deployment. Roosevelt Institute scholars and others have made a compelling case that the people using AI tools every day should have meaningful input into how they’re rolled out. Right now most don’t. The empathy gap Atlassian documented is the predictable result.

The lesson, and a small wish

There’s an old idea, from Robert Nozick’s Anarchy, State, and Utopia (1974), called the “experience machine.” Imagine a device that gives you any experience you want — successful career, beautiful art made, problems solved — without you actually having done any of it. Nozick’s question was: would you plug in?

His answer, and most people’s, is no. “We want to do certain things, and not just have the experience of doing them… we want to be a certain way, to be a certain sort of person.”

That’s the deepest thing the AI moment is asking us. Not whether AI is “good” or “bad.” Not whether it’ll take our jobs. But whether, in our rush to ship faster and produce more, we’re quietly building experience machines for ourselves at work — devices that give us the outputs of being a writer, a coder, a designer, a translator, a musician without our actually being any of those things.

The psychological cost of plugging in turns out to be real and measurable, and it is showing up in survey after survey.

The good news (and I do think it’s good news) is that the people closest to this work are already pushing back. They’re forming unions, writing essays, reorganizing their practice, and saying out loud what a lot of us have only been muttering. The technology is here to stay. The question is whether we let it hollow us out, or whether we shape it into something that lets us keep being who we wanted to be when we picked this work in the first place.

My vote? Keep the strain. Keep the wobble in the line. Use AI for what it’s brilliant at — the laundry and dishes of cognitive work — and protect, fiercely and on purpose, the parts where the thinking is the point.

That’s not nostalgia. That’s just remembering what work was supposed to be for.

If this resonated, the HAIA Foundation publishes regularly on the human side of artificial intelligence. Subscribe at substack.haia.foundation — and if you’re working through your own version of this, I’d genuinely love to hear about it in the comments.