The Hungry Machine

How AI’s appetite for power — and for war — could help engineer the next great famine

Let me start with a number that should stop you in your tracks: 266 million.

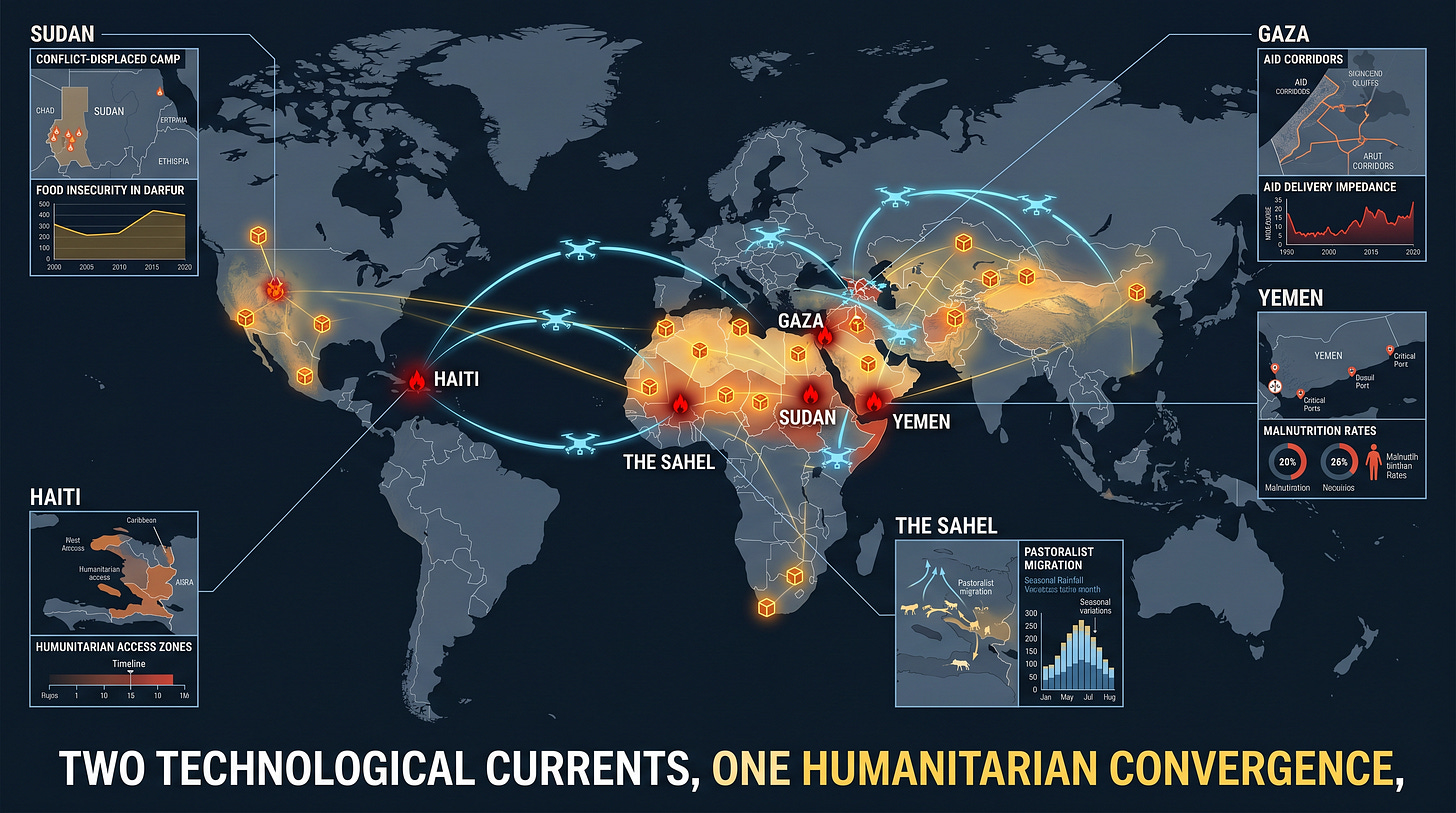

That is how many people, across 47 countries, faced acute food insecurity in 2025 — according to the Global Report on Food Crises 2026 released by the UN World Food Programme, the FAO, the EU and partners. For the first time in the report’s history, famine was confirmed simultaneously in two places: Gaza, and parts of Sudan including El Fasher. UN Secretary-General António Guterres called it “an unprecedented development.” Conflict was the primary driver in 19 countries, hitting roughly 147 million people directly.

Now, here is the uncomfortable question I want to put on the table: what happens to that already-fragile baseline when you layer on a technology that drinks freshwater by the billion-gallon, draws electricity by the gigawatt, and is being rapidly weaponized to make wars cheaper, faster, and more politically palatable to start?

That technology, of course, is artificial intelligence.

Part 1: The thirsty, hungry machine

Let me be clear about something up front — I am not anti-AI. I use these tools daily. I have seen them help diagnose diseases, accelerate drug discovery, translate languages between people who could never have spoken before. The promise is real.

But the bill is also real. And it is coming due in places that cannot afford it.

The International Energy Agency’s 2025 Energy and AI report projects that global data-center electricity use will more than double by 2030, to roughly 945 terawatt-hours. That is about 3% of all electricity consumed on Earth, and more than the entire country of Japan uses today. Goldman Sachs Research goes further, anticipating a 165% jump in data-center power demand from 2023 levels and an estimated $720 billion in new global grid spending just to keep up. Morgan Stanley estimates AI-related data centers could be drinking more than one trillion liters of water per year by 2028, an elevenfold leap in four years.

What does that look like on the ground? Researchers at UC Riverside calculated that a single 100-word GPT-4 answer consumes about 519 milliliters of fresh water, a little more than a standard half-liter plastic bottle. (To be fair: OpenAI’s Sam Altman has cited a much lower figure of 0.3 mL per query, but that figure does not include the water used to generate the electricity. The Lawrence Berkeley National Laboratory estimates that indirect cost at about 1.2 gallons per kWh.) And reporting on Microsoft’s cluster of Iowa data centers, where GPT-4 was trained, found the cluster drew 11.5 million gallons of water in July 2022 alone.

Tech companies’ own disclosures tell the same story. Google’s greenhouse-gas emissions have risen roughly 48% since 2019, according to NPR. Microsoft’s are up 23–30% since 2020, despite a “carbon negative by 2030” pledge. Microsoft’s own 2025 sustainability report admits its water consumption has climbed 87% since 2020, to nearly 2.1 billion gallons.

Here is where things get personal.

In Memphis, Elon Musk’s xAI “Colossus” supercomputer was built on the Memphis Sand Aquifer, the city’s sole drinking-water source, and ran roughly 35 unpermitted methane gas turbines that the Southern Environmental Law Center and Protect Our Aquifer say emit 1,200–2,000 tons of smog-forming nitrogen oxides per year. In April 2026, xAI quietly paused construction on its long-promised wastewater recycling plant.

In Northern Virginia, the world’s data-center capital, residential electricity rates have climbed 42% since 2019, per Brookings. The State Corporation Commission had to invent a brand-new rate class to keep regular households from subsidizing hyperscalers’ bills.

In Cerrillos, Chile, residents had to win a 2020 referendum and a court fight to force Google to redesign a facility that would have drawn 169 liters of cooling water per second, in a region 15 years into drought. In Uruguay, during the 2023 “it’s not drought, it’s pillage” protests, residents were drinking brackish tap water while a planned Google data center was projected to need 7.6 million liters per day.

These can sound like local fights over utility bills and permits. They are also fights over the farmers, the families, and the food. Because here is the thing about water and electricity: agriculture is already the world’s largest freshwater consumer, and climate change is shrinking the buffer. When firms with effectively unlimited capital outbid farmers for water and power in stressed watersheds, they are not creating a future food crisis. They are accelerating one already underway.

Part 2: The machine that makes wars easier

Now let us turn to the second front — and the more dangerous one.

Why? Because conflict, not climate alone, is the leading driver of mass hunger today. And AI is being woven into the practice of war faster than any policy framework can keep up.

Let me give you three data points.

First: in April 2024, +972 Magazine and Local Call published an investigation by Yuval Abraham, based on testimony from six Israeli intelligence officers, into a system called “Lavender.” Lavender reportedly ranked tens of thousands of Palestinian men by probabilistic scores. Operators were said to spend as little as 20 seconds confirming a target, mostly verifying that the target was male, and authorization was reportedly granted, in some cases, to kill 15–20 civilians per junior militant and up to 100 for a senior one, often in their family homes at night. The IDF disputes specifics. But even the Lieber Institute at West Point, hardly a fringe outlet, has stress-tested these claims and found the underlying systems represent a step-change in how war is conducted.

Second: in Ukraine, CSIS, the Atlantic Council, and IEEE Spectrum all report that Ukrainian firms, including Swarmer, have moved AI-coordinated drone swarms from prototype to combat use, with over 100 documented operations. June 2025’s “Operation Spiderweb” used FPV drones with onboard AI to disable an estimated 20–41 Russian strategic bombers in a single coordinated strike. Russia’s Shahed-style drones now reportedly carry Nvidia chipsets for autonomous terminal guidance. We have crossed, quietly, from “human-in-the-loop” to “human-on-the-loop” and, in many cases, to just “human-near-the-loop.”

Third: the U.S. Department of Defense raised the contract ceiling for Palantir’s “Maven Smart System” to $1.3 billion in 2025, with reportedly more than 20,000 active users and the capacity to generate, by one official’s account, up to 1,000 targeting recommendations per hour. Palantir’s 75 separate DoD contracts were folded that same year into a $10 billion Army Enterprise Agreement. In February 2025, Alphabet, Google’s parent company, quietly reversed its longstanding pledge not to develop AI for weapons.

So here is the question that ought to keep us up at night: what happens to the political threshold for going to war when the human cost — at least on your side — drops to near zero?

That is not my framing. That is the framing of Stuart Russell at UC Berkeley, of Max Tegmark and Anthony Aguirre at the Future of Life Institute, of the International Committee of the Red Cross since 2015, and of the Stop Killer Robots coalition. In November 2025, the UN General Assembly passed a resolution supported by 156 states explicitly naming “lowering the threshold for and escalation of conflicts” as a core fear. Even more sober institutions like RAND and CSIS — which are not in the business of being alarmist — have flagged distorted strategic judgment, miscalculation, and a U.S.-China security-dilemma arms race as the realistic dangers. Not Terminators. Just human leaders making faster, worse decisions, advised by machines whose reasoning they cannot fully audit.

And then there is the secondary front: AI-generated disinformation. The Knight First Amendment Institute and Harvard’s Ash Center found that the predicted “deepfake apocalypse” of the 2024 election year did not arrive in the form anticipated. But a slower, more diffuse rot (what the Alan Turing Institute’s CETaS team calls “death by a thousand cuts”) has measurably degraded trust in shared reality. In a tense moment between nuclear-armed states, that is its own escalation risk.

Part 3: Where the two rivers meet

Now let me sketch a scenario that I do not think is far-fetched. I think it is, to borrow a phrase, the median forecast.

It is 2032. AI data centers consume the equivalent of Japan’s annual electricity. In California’s Imperial Valley and Spain’s Aragón region, where Amazon sought a 48% water-permit increase in late 2024, irrigation has been rationed for three consecutive summers. Farmers in Querétaro and Canelones have left the land. Wheat futures spike on every drought.

Meanwhile, two regional wars (pick your scenario from the CSIS playbook: the Sahel, the Korean peninsula, the Taiwan Strait, the Horn of Africa) are being fought primarily with autonomous swarms and AI-targeted strike systems. Casualties on the operating side are minimal; casualties on the receiving side, civilians among them, are catastrophic. Each conflict disrupts a grain corridor, a fertilizer pipeline, a port. Humanitarian funding, already at 2016 levels per the GRFC, collapses further. The number of people in IPC Phase 5 (Catastrophe/Famine), currently 1.4 million, multiplies.

A bolder scenario, taken seriously by Russell and the Future of Life Institute, contemplates cheap autonomous “slaughterbot” drones proliferating to non-state actors, or AI quietly embedded in nuclear command-and-control such that a misclassified radar return triggers an exchange no human chose. Low probability, perhaps. But the UN Secretary-General is now treating it as live policy.

None of this — and this is the part I want to underline — requires science fiction. Each step is an extrapolation from data published in 2024, 2025, and 2026.

So what does this mean for you?

I am not here to tell you to stop using AI. I use it. You probably use it. Many of the people building it are genuinely trying to do good work. The question is not whether AI exists; it is whether we choose to govern it like adults or sleepwalk into letting it govern us.

We have done this before — with nuclear weapons, with chlorofluorocarbons, with leaded gasoline, with anti-personnel mines. The pattern is consistent: a technology arrives faster than our institutions, harm accumulates, and eventually — sometimes too late, sometimes just in time — citizens demand a framework. The question is whether the framework arrives before, or after, the famine.

The lesson of history? The framework almost always arrives after. My vote is that this time, we make it arrive before.

That means asking harder questions of the companies building this infrastructure — about water, about emissions, about military contracts. It means asking harder questions of our governments — about siting decisions, ratepayer subsidies, and the legal frameworks that let autonomous weapons be deployed without meaningful human control. It means supporting the work of organizations like Stop Killer Robots, the Future of Life Institute, the ICRC, and yes — humanitarian-AI conveners like the HAIA Foundation. And it means remembering, every time we type a query into a chatbot, that somewhere a turbine is spinning, a cooling tower is venting steam, and — if we are not careful — a farmer is wondering where her water went.

The hungry machine does not know it is hungry. That is our job to know.

One can only dream of a generation that chose to feed people before it fed servers. We can still be that generation, just barely. Let us act like it.

If this piece resonated with you, share it. If it made you uncomfortable, share it twice.