The EU AI Act Compliance Countdown: What Non-Experts Need to Know Before August 2026

The clock is ticking on the world's first comprehensive AI law — and almost nobody is ready.

Here is a number that should make you sit up: 78% of organizations affected by the EU AI Act have not taken meaningful compliance steps. The deadline is August 2, 2026. That is less than four months away.

And here is another one: only 8 of 27 EU member states have even registered their enforcement contacts. The law is arriving, and the house is not in order.

So why should you — someone who probably did not build an AI model, does not run a tech company, and may not live in Europe — care about any of this? Because this regulation will change how AI touches your life whether you are aware of it or not. If you use a chatbot, apply for a loan, submit a résumé online, interact with a government service, or scroll through content filtered by an algorithm, the EU AI Act has something to say about how that experience works. And if GDPR taught us anything (remember those cookie consent pop-ups that took over the internet?), it is that European regulation has a way of becoming everyone’s problem.

Let me break this down.

What Is the EU AI Act, Exactly?

Think of it as the AI equivalent of food safety regulation. Before governments mandated ingredient labels and banned certain additives, you had no way of knowing what was in your food — or whether it might harm you. The EU AI Act does something similar for artificial intelligence: it classifies AI systems by how much risk they pose to people, bans the most dangerous ones outright, and requires safety checks on the rest.

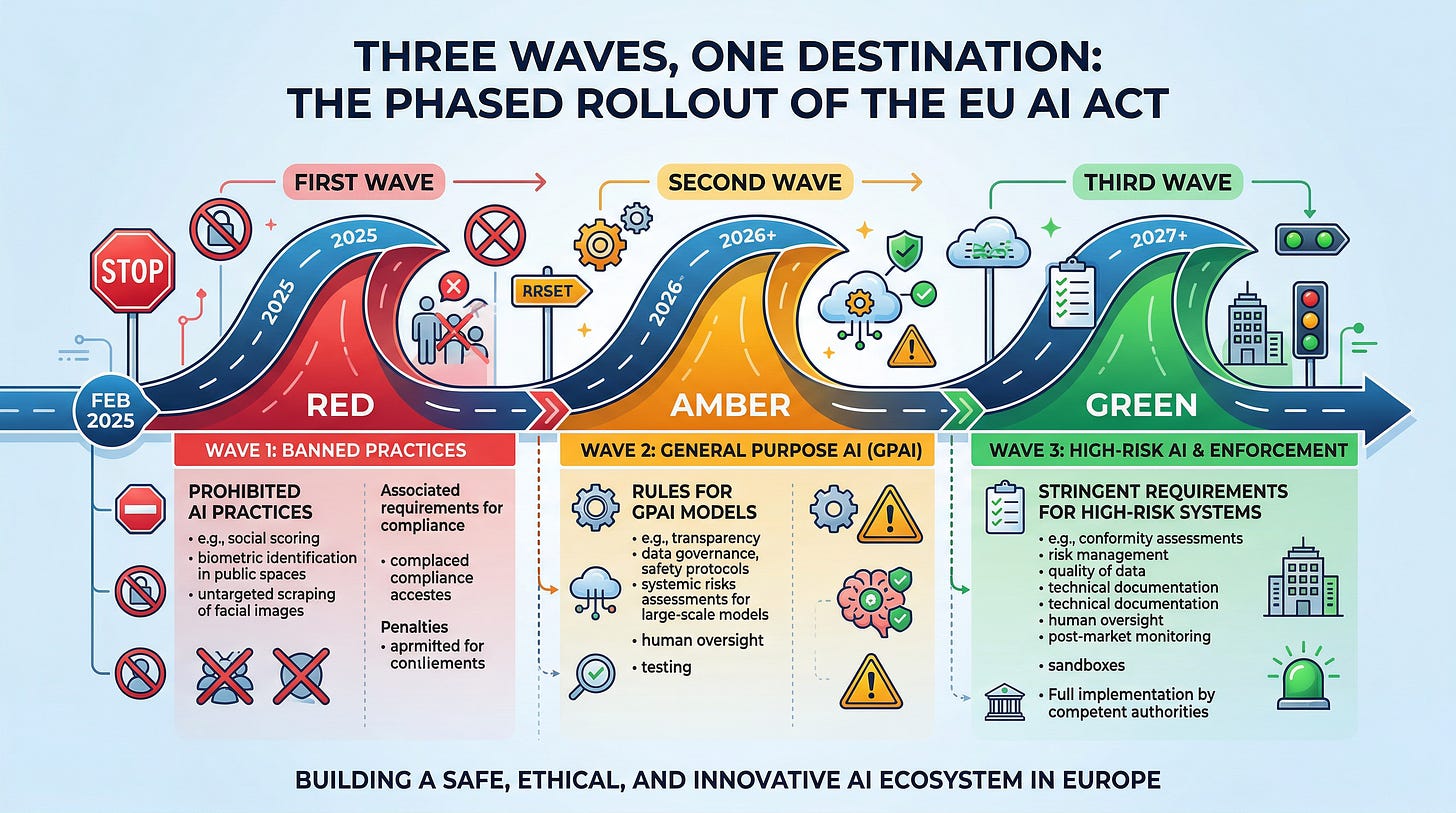

The law was published in the EU’s Official Journal on July 12, 2024, and entered into force on August 1, 2024. But — and this is important — it does not hit all at once. The European Commission designed a phased rollout stretching through 2030, with obligations kicking in at different dates depending on how risky an AI system is considered.

Some of those dates have already passed. Others are barreling toward us. The biggest one? August 2, 2026 — the date when high-risk AI system requirements, transparency obligations, deployer duties, and full enforcement powers all activate simultaneously.

The Timeline So Far — and What Arrives in August

Here is where things get interesting. The rollout has been staggered into three major waves:

Wave 1 — February 2, 2025 (already in effect). Eight categories of AI systems deemed to pose “unacceptable risk” were banned outright. We are talking about AI that manipulates people through subliminal techniques, social scoring systems (yes, the dystopian kind), untargeted facial recognition database scraping, emotion recognition in workplaces and schools, and predictive policing based solely on profiling. Also in this wave: every organization deploying AI became obligated to ensure their staff have adequate “AI literacy.” That requirement is live right now — and 62% of organizations using AI in workforce management have no formal AI literacy program.

Wave 2 — August 2, 2025 (already in effect). Obligations for providers of general-purpose AI (GPAI) models — think the large language models behind your favorite chatbots — took effect. Member states were supposed to designate their national enforcement authorities. The Commission’s GPAI Code of Practice was finalized. EU-level governance bodies — the AI Board, Scientific Panel, and Advisory Forum — became operational.

Wave 3 — August 2, 2026 (incoming). This is the big one. High-risk AI systems face full requirements: risk management systems, rigorous data governance, comprehensive technical documentation, human oversight mechanisms, conformity assessments, CE markings, and post-market monitoring with mandatory serious incident reporting. Transparency rules under Article 50 kick in — chatbots must identify themselves as AI, deepfakes must carry labels, synthetic content requires watermarking. Deployers of high-risk AI must conduct fundamental rights impact assessments. And the enforcement machinery — including the power to fine — becomes fully operational.

All at once. In less than four months.
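For readers who think in code, the three waves can be written down as a small lookup. This is only a sketch built from the dates above; the names `WAVES`, `obligations_in_effect`, and `days_until_next_wave` are my own illustrations, and the one-line summaries are shorthand, not legal text.

```python
from datetime import date
from typing import Optional

# Start dates and shorthand summaries of the three waves described above.
WAVES = [
    (date(2025, 2, 2), "Prohibited practices banned; AI literacy duty"),
    (date(2025, 8, 2), "GPAI provider obligations; EU governance bodies operational"),
    (date(2026, 8, 2), "High-risk requirements; Article 50 transparency; "
                       "deployer duties; enforcement powers"),
]


def obligations_in_effect(on: date) -> list:
    """Return the summary of every wave that has started on or before `on`."""
    return [summary for start, summary in WAVES if start <= on]


def days_until_next_wave(on: date) -> Optional[int]:
    """Days from `on` until the next wave starts, or None if all have started."""
    upcoming = [start for start, _ in WAVES if start > on]
    return (min(upcoming) - on).days if upcoming else None
```

Calling `obligations_in_effect(date.today())` gives a quick picture of which waves already apply to you.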

The Risk Pyramid: How the Act Classifies AI

The entire architecture rests on a deceptively simple idea: not all AI is equally dangerous, so not all AI should be equally regulated. The Act sorts every AI system into one of four risk tiers, each carrying different obligations.

Unacceptable risk — banned. Already illegal since February 2025. Social scoring, manipulative AI, untargeted biometric scraping, emotion recognition in schools and workplaces. Gone. Done. (Well, in theory — enforcement is another story, which we will get to.)

High risk — heavily regulated. This is the tier that matters most in August 2026. It covers AI used in eight domains: biometrics, critical infrastructure, education, employment and worker management, access to essential services (including credit scoring and insurance pricing), law enforcement, migration and border control, and the administration of justice. If you have ever been screened by an AI hiring tool, had your loan application processed algorithmically, or been evaluated by an AI-powered educational assessment — that is high-risk territory. Providers of these systems must build in risk management across the entire lifecycle, ensure their training data is representative and free of bias, maintain detailed technical documentation, design for human oversight, and submit to conformity assessments before putting their product on the market.

Limited risk — transparency required. AI that interacts with people must disclose its artificial nature. Synthetic content must be watermarked. Deepfakes must be labeled.

Minimal risk — no mandatory requirements. Spam filters, recommendation engines, AI-enabled games. The vast majority of AI systems fall here and face no specific obligations, though voluntary codes of conduct are encouraged.
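The four tiers above behave like a decision ladder: check the most severe category first and fall through to the least. Here is a rough sketch of that ordering. The use-case and domain labels are hypothetical strings I made up for illustration, and a real classification is a legal judgment that no lookup table can replace.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "banned"
    HIGH = "heavily regulated"
    LIMITED = "transparency required"
    MINIMAL = "no mandatory requirements"


# Hypothetical labels for some of the banned practices named above.
PROHIBITED_USES = {
    "social_scoring",
    "manipulative_ai",
    "untargeted_biometric_scraping",
    "workplace_or_school_emotion_recognition",
}

# Hypothetical labels for the eight high-risk domains listed above.
HIGH_RISK_DOMAINS = {
    "biometrics",
    "critical_infrastructure",
    "education",
    "employment",
    "essential_services",
    "law_enforcement",
    "migration_border_control",
    "administration_of_justice",
}


def classify(use_case: str, domain: str,
             interacts_with_people: bool = False,
             generates_synthetic_content: bool = False) -> RiskTier:
    """Walk the tiers from most to least severe and return the first match."""
    if use_case in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if domain in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    if interacts_with_people or generates_synthetic_content:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL
```

For example, `classify("resume_screening", "employment")` lands in the high-risk tier, while `classify("spam_filter", "email")` falls through to minimal risk.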

So far so good. The logic is sound. But here is where things get complicated for ordinary people and non-tech businesses.

Why This Matters If You Are Not a Tech Company

Here is the part almost nobody talks about, and it is — in my view — the most consequential dimension of this entire regulation.

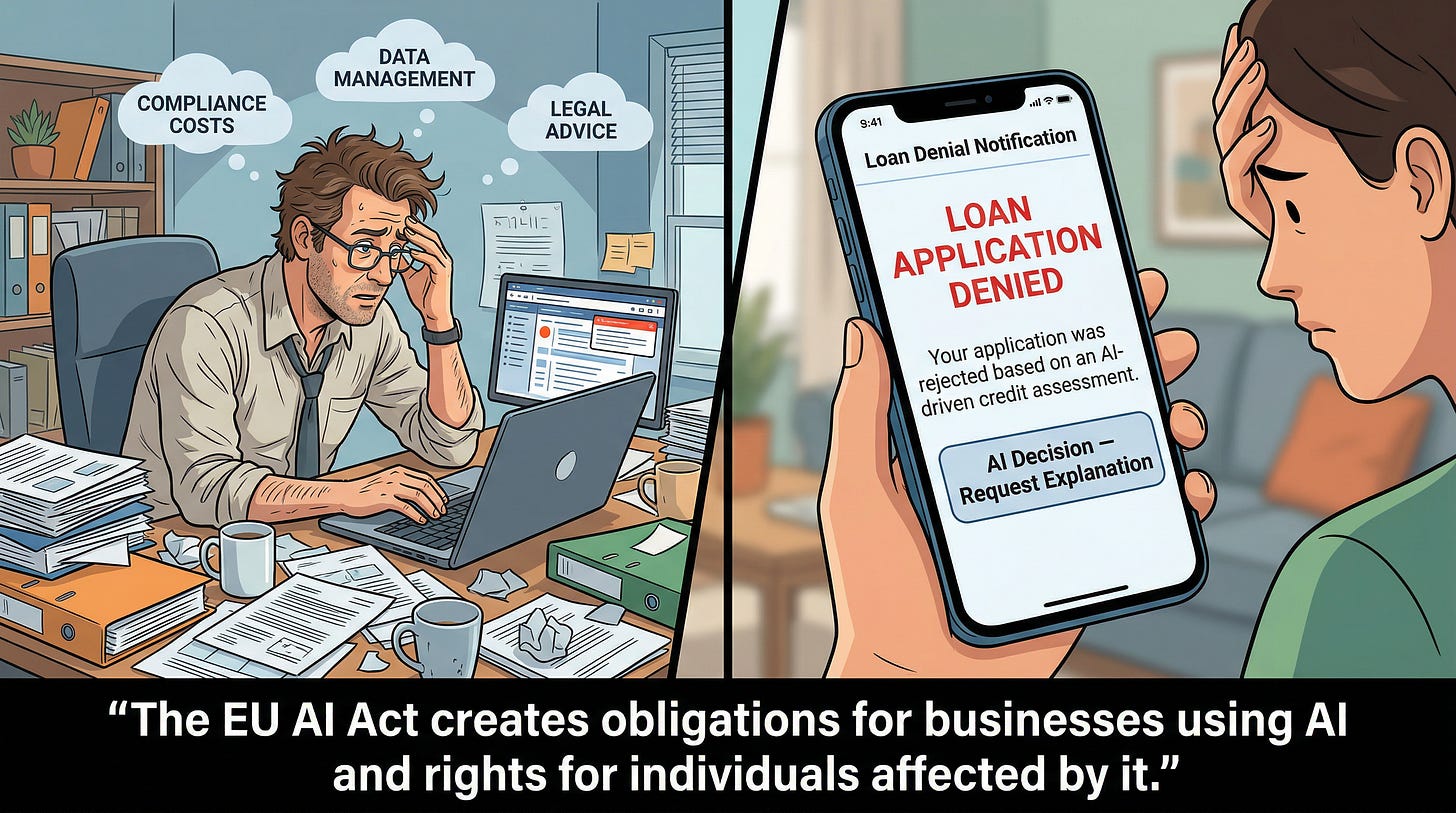

You do not have to build an AI system to fall under this law. You just have to use one.

Under the Act, any organization deploying an AI system “under its authority” in a professional capacity is classified as a “deployer”. That means the bank using AI credit-scoring software, the HR department screening résumés with an off-the-shelf AI tool, the hospital running AI-assisted diagnostics, the insurance company using algorithmic risk assessment — all deployers, all carrying compliance obligations starting August 2026.

What does this mean for you? Well, if you run a business that uses AI tools (and let us be honest — who does not at this point?), you may need to assign trained human overseers for high-risk systems, monitor AI system performance continuously, suspend use if risks emerge, report serious incidents to authorities, retain system logs, and — critically — conduct fundamental rights impact assessments.

And here is the real kicker: under Article 25, a deployer can be reclassified as a provider — with dramatically heavier obligations — if they significantly modify a system, use it outside its intended purpose, or slap their own branding on it. Imagine buying a pre-built AI hiring tool, customizing it heavily for your company’s specific needs, and suddenly discovering you are now legally responsible as if you had built it from scratch. That is not a hypothetical scenario. That is the law.
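The three triggers in that paragraph can be written as a simple checklist. This is a sketch under my own naming (`DeploymentChanges` and `reclassification_triggers` are hypothetical), and deciding whether a modification counts as "significant" still takes legal advice, not a boolean.

```python
from dataclasses import dataclass


@dataclass
class DeploymentChanges:
    """What a deployer has done to a purchased AI system (illustrative fields)."""
    significantly_modified: bool = False
    used_outside_intended_purpose: bool = False
    rebranded_as_own: bool = False


def reclassification_triggers(changes: DeploymentChanges) -> list:
    """List which of the three triggers described above apply."""
    triggers = []
    if changes.significantly_modified:
        triggers.append("significant modification")
    if changes.used_outside_intended_purpose:
        triggers.append("use outside intended purpose")
    if changes.rebranded_as_own:
        triggers.append("own branding")
    return triggers


def may_be_treated_as_provider(changes: DeploymentChanges) -> bool:
    """Any single trigger is enough to raise the reclassification question."""
    return bool(reclassification_triggers(changes))
```

In the hiring-tool example, `DeploymentChanges(significantly_modified=True)` is already enough for `may_be_treated_as_provider` to return `True`.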

For individual citizens in Europe, the protections are tangible. Anyone affected by a high-risk AI decision gains a right to explanation, a right to lodge complaints with national authorities, and (through the Representative Actions Directive) the ability to participate in class-action-style lawsuits. If an AI system denies your loan, rejects your job application, or flags you at a border crossing, you will have legal recourse.

The Readiness Gap: Almost Nobody Is Prepared

Why, you may ask? Because compliance is expensive and confusing, and the regulatory infrastructure itself is unfinished.

Let me give you the numbers. A Deloitte survey of 700+ senior leaders found only 18% of European respondents rated themselves as “highly prepared” for AI risk and governance. An appliedAI study of 106 enterprise AI systems found 40% had unclear risk classifications — meaning organizations cannot even confidently determine whether their systems fall under the high-risk category. Over half lack a complete inventory of the AI systems they use. Many treat AI as if it were traditional software, failing to recognize the unique documentation, testing, and oversight requirements the Act demands.

And the technical standards companies need as compliance benchmarks? Behind schedule. CEN and CENELEC, the European standards bodies, missed their August 2025 deadline and now target the end of 2026 with what they call “exceptional measures.” Companies are being told to comply with a law whose detailed compliance benchmarks do not yet fully exist. (If this feels like being told to pass an exam when the textbook has not been written, well, that is because it is.)

The cost dimension is contested but sobering. DIGITALEUROPE projects the Act could cost European innovators €31 billion total, with a small 50-person company developing high-risk AI facing initial costs of €320,000–€600,000. CEPS calls that figure “grossly exaggerated.” The truth is almost certainly somewhere in between — but for a small or medium-sized business, even the lower estimates are not trivial.

Meanwhile, the Centre for European Policy Network warns that SMEs worldwide must prepare now, and a survey by ACT | The App Association found EU/UK tech firms losing €94,000–€322,000 annually from regulatory-driven AI delays. Six in ten micro, small, and medium enterprises report delayed access to frontier AI models.

The General-Purpose AI Wildcard

Here is where things get particularly interesting for anyone who uses ChatGPT, Gemini, Claude, or any of the major AI assistants. The Act creates a dedicated regime for general-purpose AI (GPAI) models: models that display “significant generality” and can perform a wide range of tasks.

All GPAI providers must maintain technical documentation, supply downstream companies with information about their models’ capabilities and limitations, establish copyright compliance policies, and publish training data summaries. Open-source models with publicly available parameters get a lighter regime — unless they present systemic risk.

And what qualifies as “systemic risk”? The Act sets a threshold: 10²⁵ floating-point operations (FLOPs) in cumulative training compute. Cross that line, and you face mandatory model evaluations, adversarial testing, serious incident reporting, and enhanced cybersecurity requirements. This captures the most advanced foundation models currently in existence.
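To get a feel for how large 10²⁵ is, here is a back-of-the-envelope check. The threshold comes from the paragraph above; the compute estimate uses the common rule of thumb of roughly 6 FLOPs per parameter per training token, which is my assumption for illustration and not something the Act prescribes. The model sizes in the usage note are hypothetical.

```python
# Threshold stated above: 10^25 FLOPs of cumulative training compute.
SYSTEMIC_RISK_THRESHOLD_FLOPS = 1e25


def estimate_training_flops(parameters: float, training_tokens: float) -> float:
    """Rough estimate: about 6 FLOPs per parameter per token (a heuristic,
    not the Act's own measurement method)."""
    return 6.0 * parameters * training_tokens


def exceeds_systemic_risk_threshold(training_flops: float) -> bool:
    """True when cumulative training compute is above the 10^25 line."""
    return training_flops > SYSTEMIC_RISK_THRESHOLD_FLOPS
```

Under that heuristic, a hypothetical 70-billion-parameter model trained on 15 trillion tokens comes out near 6.3 × 10²⁴ FLOPs and stays below the line, while a hypothetical 400-billion-parameter model on the same data comes out near 3.6 × 10²⁵ and crosses it.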

The GPAI Code of Practice, finalized in July 2025, was signed by 26 major providers — including Microsoft, Google, Amazon, OpenAI, and Anthropic. Meta notably refused, creating one of the first enforcement flashpoints. The AI Office holds exclusive enforcement jurisdiction over GPAI models, and on January 8, 2026, issued a formal data retention order to X regarding its Grok chatbot — one of the first direct enforcement actions under the Act.

Now imagine — and this is where creative scenario-building gets both fun and terrifying — a world where AI agents (autonomous programs acting without real-time human supervision) are negotiating contracts, managing supply chains, and making medical recommendations on your behalf. These agents emerged at scale in 2025, and they fundamentally challenge the Act’s human oversight requirements. When three different AI agents from three different providers collaborate to produce a decision that affects your credit score, who is the “provider”? Who is the “deployer”? Who is accountable? The Act’s framework, designed primarily for static AI systems with clear human oversight chains, is already being stretched by the technology it is trying to regulate.

What the Experts Are Saying

Expert opinion splits along a revealing fault line. Innovation advocates worry the Act goes too far. Civil rights organizations argue it does not go far enough.

Access Now has called the AI Act “a failure for human rights, a victory for industry and law enforcement,” arguing the law substitutes risk mitigation exercises for genuine rights-based protections while leaving excessive loopholes. AlgorithmWatch has warned that developers themselves decide whether their systems are high-risk through self-classification, potentially circumventing the very requirements designed to protect people.

On the implementation side, EDRi (European Digital Rights), leading a coalition of civil society organizations, has warned that rights enshrined in the AI Act remain hollow without adequate enforcement infrastructure. When the European Commission proposed the Digital Omnibus — a “simplification” package — EDRi called it the most ambitious deregulation of the digital sphere the EU has ever attempted.

Stanford HAI’s AI Index Report 2026 documented that compliance costs vary up to 8x between jurisdictions, from an average of $180,000 in Singapore to $1.4 million in the EU for mid-size deployers. Brookings analyst Alex Engler argues the Act will have global impact but a limited “Brussels Effect,” because companies can maintain separate compliance tiers per jurisdiction rather than building to the EU standard everywhere.

The OECD, analyzing 200 government AI use cases, found that many public sector applications fall into the high-risk category — but government AI adoption already trails the private sector due to skills shortages and legacy systems. And the Ada Lovelace Institute found that 84% of the UK public fears governments will prioritize tech company partnerships over public interest in AI governance, with 89% supporting an independent AI regulator with real enforcement powers.

How the Rest of the World Compares

In contrast to Europe’s comprehensive horizontal regulation, the rest of the world is — to put it diplomatically — all over the place.

The United States has no comparable federal legislation. President Trump’s January 2025 executive order revoked Biden-era AI safety measures and pivoted explicitly toward deregulation. A December 2025 order established policy to preempt state AI regulations that obstruct national competitiveness. Meaningful regulation is happening at the state level — Colorado’s AI Act (effective June 2026) requires impact assessments for high-risk AI, California mandates AI-generated content disclosure, Texas requires transparency in consequential AI decisions — but these remain disconnected islands without a coherent federal framework.

The United Kingdom has deliberately positioned itself as more business-friendly post-Brexit, relying on five non-statutory principles enforced by existing sector regulators rather than a dedicated AI authority. A comprehensive AI Bill is reportedly planned but unlikely before mid-2026.

China regulates AI vertically through sector-specific rules that are in some respects stricter than the EU’s — mandating pre-approval of algorithms and alignment with “core socialist values.” South Korea’s AI Basic Act adopts an EU-influenced risk classification. Canada’s AIDA died in parliament. Brazil has advanced an EU-like risk-based bill.

The question for anyone operating globally is not whether AI regulation is coming — it is which overlapping, sometimes contradictory set of rules you will need to navigate. As one compliance advisor put it to me recently: “GDPR was a fire drill. The AI Act is the actual fire.”

The Fines — and the Consequences That Matter More

Let me be direct about the penalty structure because the numbers are eye-catching.

Violations involving prohibited AI practices carry fines up to €35 million or 7% of global annual turnover — whichever is higher. Non-compliance with high-risk or GPAI obligations: €15 million or 3%. Supplying misleading information to authorities: €7.5 million or 1%. For context, 7% of global revenue would equal roughly €8.5 billion for Meta, €14 billion for Google, or €16 billion for Microsoft.

GPAI-specific fines can reach €15 million or 3% of worldwide turnover, imposed directly by the AI Office — the only centralized enforcement element. Everything else is handled by national market surveillance authorities, with the Court of Justice of the EU having unlimited jurisdiction to review fine decisions.
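The "whichever is higher" rule is easier to see with the arithmetic spelled out. A minimal sketch using the three tiers above, assuming the same higher-of-the-two rule applies to each tier; the tier labels and the function name `max_fine_eur` are my own.

```python
# (fixed cap in euros, percent of global annual turnover) for each tier above.
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_or_gpai_obligation": (15_000_000, 3),
    "misleading_information": (7_500_000, 1),
}


def max_fine_eur(violation: str, global_annual_turnover_eur: float) -> float:
    """Upper bound of the fine: the fixed cap or the turnover share,
    whichever is higher."""
    fixed_cap, percent = FINE_TIERS[violation]
    turnover_share = global_annual_turnover_eur * percent / 100
    return max(fixed_cap, turnover_share)
```

For a company with €100 million in turnover, 7% is only €7 million, so the €35 million cap is the binding number. At €1 billion in turnover, the 7% share (€70 million) takes over. These are ceilings, not the amounts a regulator would necessarily impose.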

But here is what I think matters more than the fines: market exclusion. Non-compliant systems can be ordered withdrawn from the EU market entirely. EU deployers must verify their vendors’ compliance, meaning non-compliant AI providers lose access to enterprise customers across the entire bloc. GDPR fines since 2018 have exceeded €5.88 billion across 2,245 penalties — demonstrating the EU’s willingness to impose significant financial consequences, even if the big enforcement actions took years to materialize.

What Happens Next — and What Could Go Wrong

Just imagine this scenario: it is September 2026, one month after the deadline. A European hospital’s AI diagnostic tool misclassifies a condition, leading to delayed treatment. The system — purchased from a US vendor, customized heavily by the hospital’s IT department — falls into a regulatory gray zone. Is the US vendor the provider? Has the hospital’s customization reclassified it as a provider under Article 25? The national competent authority investigates, but it was only fully staffed two months earlier. The harmonized technical standards the investigation would reference? Still in draft form.

Now multiply that scenario across banking, employment, education, law enforcement, insurance, and migration — across 27 member states with varying levels of enforcement readiness — and you begin to see the challenge.

The Digital Omnibus proposal, currently in trilogue negotiations, may push some high-risk deadlines to December 2027. Both Parliament and Council have independently converged on that date as a fallback if harmonized standards are not ready. But compliance advisors universally counsel: prepare for August 2026 regardless. The Omnibus may not pass. And even if it does, prohibited practices, transparency obligations, and GPAI rules remain firmly in effect.

Now imagine a different scenario, one where the Act works as intended. A job applicant in Berlin, rejected by an AI hiring tool, exercises their right to explanation and discovers the system was disproportionately filtering candidates from certain postal codes. The employer, as deployer, is obligated to investigate. The AI vendor, as provider, must demonstrate the system was tested for such biases. The national authority has the power to order corrective measures. The system is improved. The next round of candidates is evaluated more fairly. That is the promise of this regulation: not perfection, but accountability.

What Should You Actually Do?

Whether you are a business owner, an employee, a student, or simply someone who interacts with AI systems daily (which, at this point, means almost everyone), here is my honest assessment of what matters:

If you run a business that uses AI tools — even off-the-shelf ones — start by taking inventory. What AI systems do you use? Where did they come from? What decisions do they influence? Could any of them fall into the high-risk category under Annex III? If the answer to that last question is “I don’t know,” that itself is a problem. Regulatory sandboxes are being established in every member state to help — priority access is available for SMEs.

If you are an employee whose work involves or is affected by AI — in hiring, performance evaluation, workplace monitoring — know that emotion recognition systems in your workplace are already banned, and your employer will soon face specific obligations around high-risk AI used in employment contexts.

If you are a citizen — pay attention. The rights this law creates are only meaningful if people exercise them. Ask whether the AI system that denied your loan or your visa application was compliant. Lodge complaints. The Act’s architects designed enforcement pathways that depend partly on individuals holding organizations accountable.

And if you are outside Europe, do not assume this does not affect you. If the output of your AI system is used in the EU, the Act’s extraterritorial reach applies. Companies from Austin to Auckland are already adjusting their compliance posture. As Brookings notes, the global impact is real even if the “Brussels Effect” has limits.
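For the business inventory step suggested above, even a spreadsheet will do, but here is what the same idea might look like as a tiny script. The record fields mirror the questions in that paragraph; the class name, field names, and the example systems are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    name: str                          # What AI system do you use?
    source: str                        # Where did it come from?
    decisions_influenced: str          # What decisions does it influence?
    high_risk: Optional[bool] = None   # Annex III high-risk? None = "I don't know"


def unclassified(inventory: list) -> list:
    """Names of systems whose high-risk status has not been determined."""
    return [r.name for r in inventory if r.high_risk is None]


def high_risk_systems(inventory: list) -> list:
    """Names of systems already identified as high-risk."""
    return [r.name for r in inventory if r.high_risk is True]


# Illustrative entries only; these are made-up systems.
inventory = [
    AISystemRecord("resume-screener", "off-the-shelf vendor", "shortlisting", True),
    AISystemRecord("support-chatbot", "off-the-shelf vendor", "customer replies", False),
    AISystemRecord("pricing-model", "built in-house", "insurance quotes"),
]
```

Here `unclassified(inventory)` returns `["pricing-model"]`, which is exactly the "I don’t know" case that needs attention first.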

The Bottom Line

The EU AI Act represents the most ambitious attempt to regulate artificial intelligence through binding law, and the August 2026 deadline will test whether that ambition can translate into practice.

Three things are clear. First, the readiness gap is genuine and wide — most organizations, most member states, and the standards bodies themselves are not where they need to be. Second, the Act’s real impact will depend less on its text than on enforcement consistency across 27 member states — a challenge GDPR demonstrated takes years to resolve. Third, the tension between the Act’s comprehensive framework and the deregulatory pressures embodied in the Digital Omnibus reflects a deeper European ambivalence: wanting to lead on AI governance while fearing competitive disadvantage against a deregulatory United States and a state-backed China.

I will not claim this article covers everything — the Act runs to over 400 pages, its implementing acts and delegated regulations are still being produced, and the technology it regulates is evolving faster than any legislature can keep pace with. But the core message is simple: August 2, 2026 is not someone else’s problem. Whether you build AI, buy AI, use AI, or are simply affected by AI decisions, this date marks a shift in the rules of the game.

The question is no longer whether AI should be regulated. It is whether we can regulate it well enough, fast enough, to matter.

One can only dream — but at least, for once, someone is trying.

The HAIA Foundation works to educate and empower communities at the intersection of technology, policy, and human rights. Subscribe to our Substack for more analysis on the issues shaping our digital future.