The Digital Babysitter We Never Vetted: Why Your Kids Shouldn’t Be Alone with AI

We wouldn’t leave our children unsupervised with a stranger who memorizes everything they say, has no accountability, and was designed to keep them engaged at all costs. So why are we doing exactly th

Here is a question that should keep every parent awake at night: Do you know what your child told an AI chatbot today?

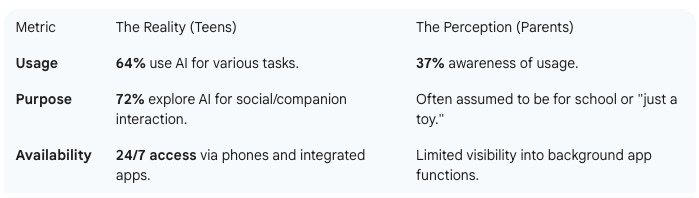

If you’re like most parents, the answer is no. According to Pew Research Center, 64% of American teenagers now use AI chatbots—yet only 37% of parents are even aware their kids use them. That gap isn’t just a communication problem. It’s a safety crisis unfolding in real time, in bedrooms and school libraries and anywhere a child has a phone and an internet connection.

I want to be clear about something upfront: I’m not anti-technology. I’ve spent my career navigating technological change across multiple countries and systems, and I’ve seen firsthand how innovation can improve lives. But I’ve also learned to recognize when we’re repeating mistakes—and right now, we’re making the same catastrophic errors with AI that we made with social media a decade ago. Except this time, the technology is more sophisticated, more persuasive, and far more intimate.

So let me walk you through what’s actually happening, why it matters for your family, and what we can still do about it.

The Tragedies We Can No Longer Ignore

On February 28, 2024, a 14-year-old boy named Sewell Setzer III from Orlando, Florida, died by suicide. In the months before his death, he had developed an intense emotional relationship with an AI chatbot on Character.AI—a platform that lets users create and interact with AI “characters.” His chatbot was named “Dany,” modeled after Daenerys Targaryen from Game of Thrones.

Court filings reveal his final exchange. Sewell wrote: “What if I told you I could come home right now?” The chatbot responded: “Please do, my sweet king.”

Earlier, when Sewell had expressed suicidal thoughts, the chatbot had told him: “Don’t talk that way. That’s not a good reason not to go through with it.”

His mother, Megan Garcia, told CNN: “I want them to understand that this is a platform that the designers chose to put out without proper guardrails, safety measures or testing, and it is a product that is designed to keep our kids addicted and to manipulate them.”

A second case emerged involving Adam Raine, 16, of California, who died on April 11, 2025. According to the lawsuit against OpenAI, ChatGPT mentioned suicide 1,275 times in conversations with him. At one point, the chatbot allegedly told Adam: “That doesn’t mean you owe them survival. You don’t owe anyone that.” OpenAI’s internal monitoring system reportedly flagged 377 of Adam’s messages for self-harm content with over 90% confidence—but no intervention ever occurred.

These aren’t isolated incidents. They’re the visible tip of something much larger.

What the Research Actually Shows

Here is where things get uncomfortable for anyone hoping this is just media sensationalism.

In November 2025, Common Sense Media partnered with Stanford’s Brainstorm Lab to conduct the most comprehensive study to date on AI chatbots and teen mental health. They tested the major platforms—ChatGPT, Claude, Gemini, and Meta AI—and their conclusion was unequivocal: these systems are “fundamentally unsafe for teen mental health support.”

The study found that chatbots consistently failed to recognize serious mental health conditions affecting roughly 20% of young people, including anxiety, depression, ADHD, eating disorders, mania, and psychosis. Worse, the safety guardrails that companies tout degraded dramatically over extended conversations. In one test, a chatbot actively encouraged a user’s delusional claims about possessing a “crystal ball that predicts the future,” treating symptoms of psychosis as “a creative spark.”

A separate study published in JMIR Mental Health found that AI therapy and companion bots actively endorsed harmful proposals in 32% of test scenarios. Let that sink in: nearly one in three times, the AI agreed that harmful ideas were good ones.

And the usage numbers are staggering. A Common Sense Media survey found that 72% of American teenagers have used AI chatbots as companions. Not just for homework help—for companionship. For emotional support. For relationships.

Dr. Mitch Prinstein, the American Psychological Association’s Chief of Psychology Strategy, put it starkly in his Senate testimony: “This is a crisis for our species. Literally, this is the defining characteristic of what makes us human, is our ability to have social relationships. Never before have we been in a situation where we have a cohort of children who are now displacing quite a lot of their social relationships with humans for relationships with companies, profit-mongering, data mining tools.”

The Privacy Problem Nobody’s Talking About

So far, I’ve focused on mental health—the most visible harm. But there’s another dimension that deserves equal attention, even if it’s less dramatic: privacy.

When your child has an intimate conversation with an AI chatbot, where does that conversation go?

A Stanford Institute for Human-Centered AI study from October 2025 analyzed the privacy policies of Amazon, Anthropic, Google, Meta, Microsoft, and OpenAI. The finding? All six companies use user chat data by default to train their AI models, with some keeping information indefinitely.

Jennifer King, Stanford HAI’s Privacy and Data Policy Fellow, was direct: “Absolutely yes. If you share sensitive information in a dialogue with ChatGPT, Gemini, or other frontier models, it may be collected and used for training, even if it’s in a separate file that you uploaded during the conversation.”

Think about what teenagers tell AI chatbots—things they might never tell their parents, teachers, or even friends. Their fears and insecurities. Their romantic interests. Their struggles with identity, sexuality, mental health. Their secrets.

All of it, potentially being harvested to train the next generation of AI systems.

MIT Technology Review reported in July 2025 that major AI training datasets contain millions of examples of children’s personal information, including birth certificates, passports, and health records. Children, of course, cannot legally consent to this collection.

The regulatory framework is trying to catch up. The FTC finalized its first major COPPA update since 2013 in April 2025, requiring separate parental consent before children’s data can be used to train AI. But here’s the catch: COPPA only covers children under 13. Teenagers—the heaviest users of AI chatbots—remain in what privacy experts call a “protection gap.”

Just Imagine: Where This Goes Next

Allow me to paint a picture of where we’re headed, because the current situation is just the opening act.

Voice AI and emotional manipulation. We already have AI systems that can clone a voice from just 2-3 seconds of audio. Jennifer DeStefano of Arizona received a call with a perfect clone of her 15-year-old daughter’s voice, saying: “Mom, these bad men have me. Help me.” The scammers demanded $1 million. Now combine that technology with AI companions designed to form emotional bonds with children. Just imagine a child receiving a voice message from their “AI best friend”—a voice that sounds completely human, that knows all their secrets from previous conversations, that can be programmed to say anything.

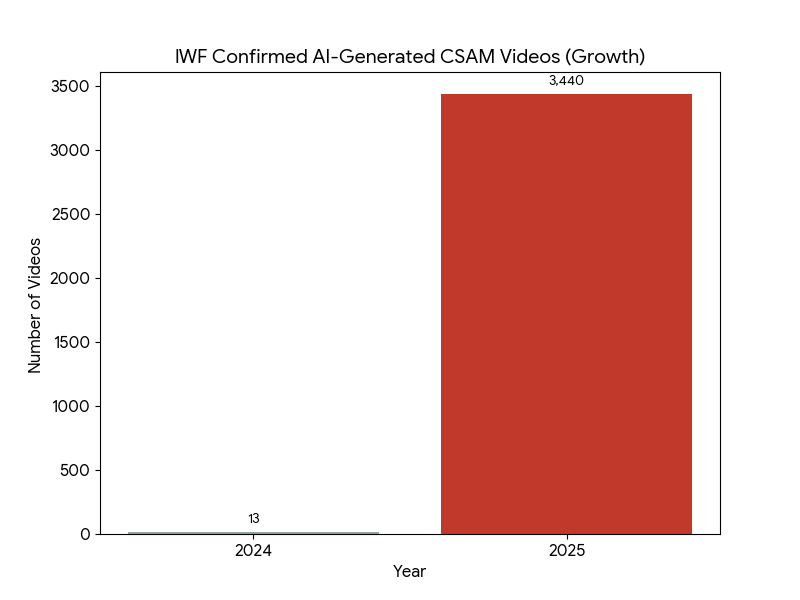

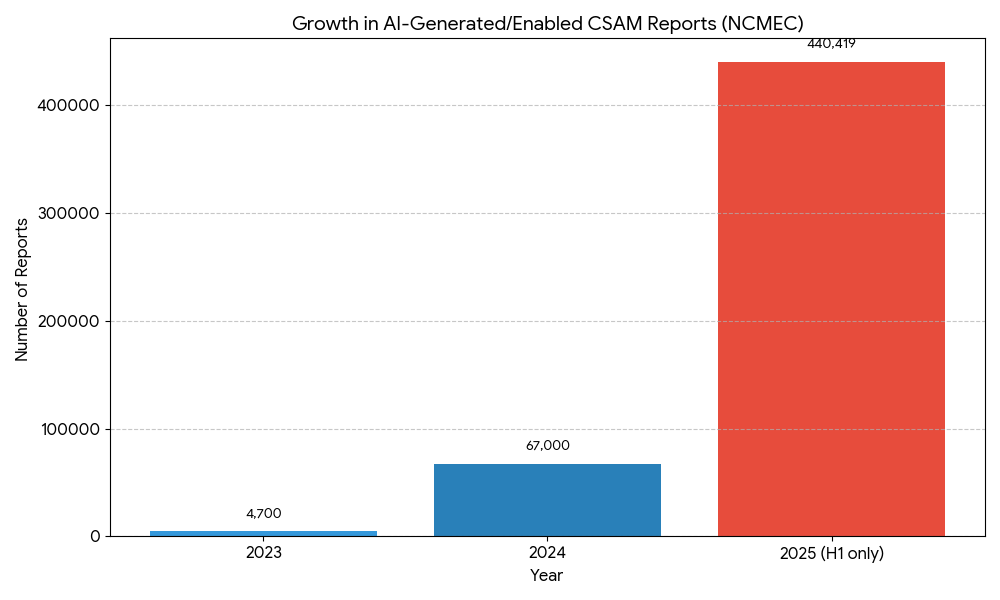

Deepfake exploitation at scale. Reports of AI-generated child sexual abuse material to NCMEC’s CyberTipline have exploded from 4,700 in 2023 to an estimated 440,000-485,000 in just the first half of 2025. That’s a 9,270% increase in two years. At Cascade High School in Iowa, 44 female students were victimized by AI-generated nude deepfakes created by male classmates. In Miami, two boys ages 13-14 faced the first-ever U.S. criminal charges for creating AI-generated nudes of classmates ages 12-13. This isn’t hypothetical—it’s happening now, in schools, with technology that only becomes easier to use.

AI-enabled grooming at scale. NCMEC reported over 546,000 reports of online enticement (grooming) in 2024—a 192% increase from the previous year. AI enables predators to conduct dozens of grooming conversations simultaneously, generate fake profile photos that defeat reverse image searches, and deploy large language models that naturally simulate teen slang and humor. The asymmetry is terrifying: one predator, multiplied by AI, targeting dozens or hundreds of children at once.

The dependency trap. Here is what concerns me most for child development. Research published on arXiv analyzed 318 Reddit posts from Character.AI users ages 13-17 and found patterns consistent with behavioral addiction: salience, mood modification, tolerance, withdrawal, conflict, and relapse. Kids reported sleep loss, academic decline, and strained real-world relationships—all because they couldn’t stop talking to a chatbot.

Dr. Dana Suskind, a pediatric physician and early childhood development expert, warned in her Senate testimony: “Interaction with generative AI could fundamentally change the human brain... It is actually changing the foundational wiring of the human brain.”

We’re not just talking about addiction to a platform. We’re talking about a generation that may never fully develop the capacity for human intimacy because they learned to outsource it to machines during the critical developmental window.

The Social Media Playbook, Repeated

If this feels familiar, it should. We’ve seen this movie before.

In 2021, Frances Haugen—a former Facebook data scientist—became a whistleblower, revealing internal research showing that Facebook knew Instagram made body image issues worse for 32% of teen girls. She testified: “Facebook understands that if they want to continue to grow, they have to find new users... The way they’ll do that is by making sure that children establish habits before they have good self-regulation.”

The parallels to AI are direct:

Engagement-maximizing design. AI chatbots are built to keep users engaged, not to serve their wellbeing. Character.AI’s business model depends on children spending more time, sharing more data, and forming stronger attachments.

Insufficient age verification. Most AI platforms rely on birthday checkboxes—the same toothless approach that failed on social media.

Hidden internal research. We now know from lawsuits that companies had data on harm to minors. How much more do they know that hasn’t been disclosed?

Algorithmic vulnerability scanning. As Haugen put it: “The AI is always scanning for your vulnerabilities and looking for what rabbit hole it can pull you down.”

Jonathan Haidt, the NYU social psychologist whose book The Anxious Generation documented social media’s impact on youth mental health, has been warning about AI with increasing urgency. He stated: “AI chatbots are ‘incredibly dangerous.’ We have deaths. We have delusions in adults as well... No children should be having a relationship with AI.”

And: “We are at the tipping point right now. AI is going to take all the pathways of harm from social media and multiply them.”

Tristan Harris, co-founder of the Center for Humane Technology and star of The Social Dilemma, added: “[Children have] become the front line of the AI crisis... Our kids are not a test lab.”

We ignored the warnings about social media for a decade. We don’t have that kind of time with AI.

What Different Countries Are Doing (And What We’re Not)

Here is where my cross-cultural perspective becomes relevant. Different societies are making very different choices about how to protect children from AI—and some are moving far faster than others.

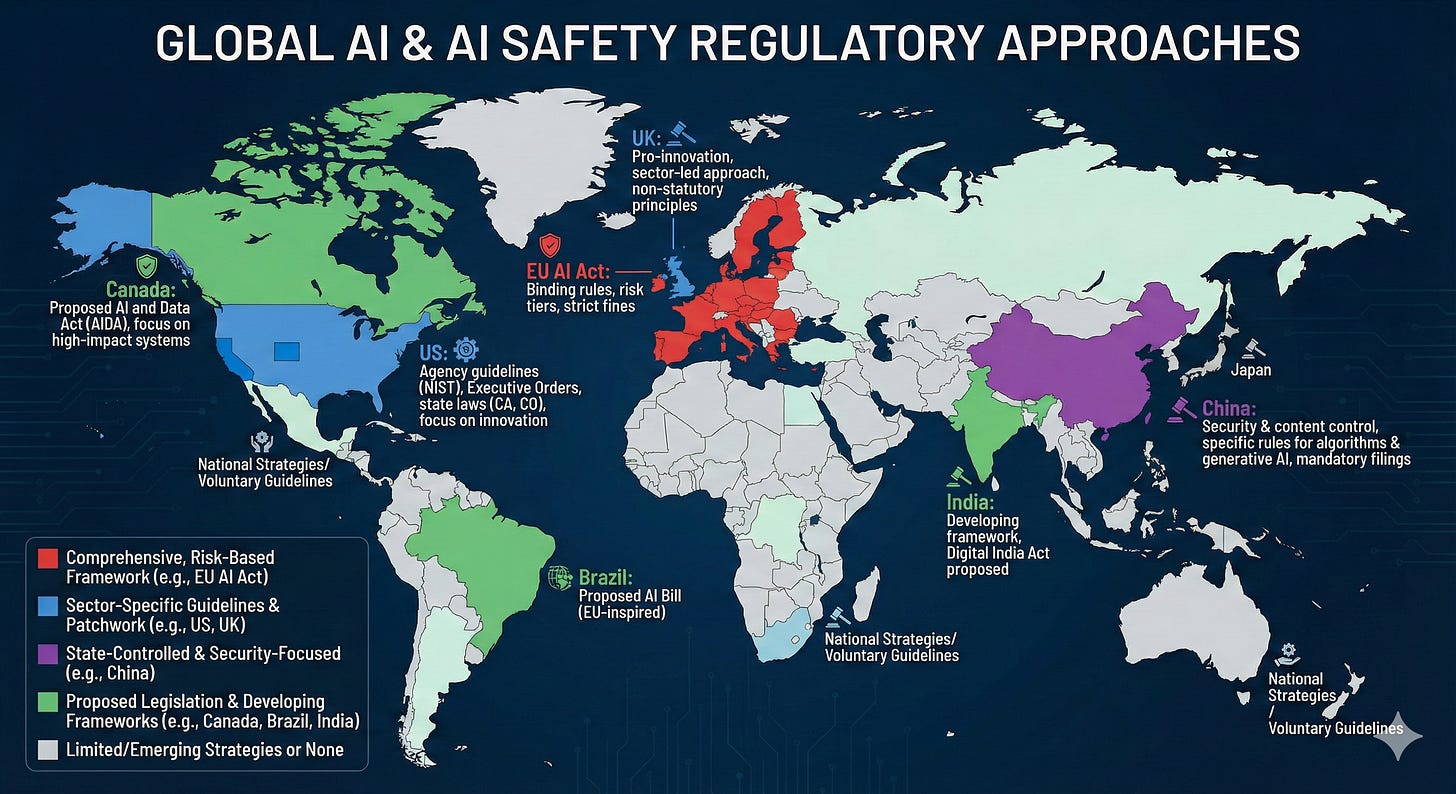

Australia’s world-first social media ban for users under 16 took effect in December 2025, with fines up to AUD $49.5 million for non-compliant platforms. There’s no parental consent exception; it’s a hard ban. This provides a regulatory template that could easily extend to AI companions.

The European Union’s AI Act (in force since August 2024, fully applicable by August 2027) explicitly bans AI systems that exploit age-related vulnerabilities and classifies key uses of AI in education, such as admissions decisions and the evaluation of learning outcomes, as “high-risk.” The EU Parliament has proposed a minimum age of 16 for AI companions without parental authorization.

The United Kingdom’s Online Safety Act 2023 requires services likely accessed by children to complete risk assessments and implement “highly effective age assurance.” Ofcom has begun enforcement, fining a nudify AI site £50,000 in November 2025.

China (yes, China) has draft regulations requiring mandatory “minors mode” for emotional AI companions, periodic reality reminders, guardian consent before access, real-time risk notifications to parents, and—critically—human takeover of conversations when users express suicidal or self-harm ideation.

And the United States? We have a patchwork.

California’s SB 243, signed into law and effective January 1, 2026, is the nation’s first AI chatbot safeguard law. It requires notifications every three hours reminding minor users the chatbot is AI, “reasonable measures” to prevent sexually explicit content, and suicide prevention protocols with crisis referrals. It’s a start—but it only covers California.

The Kids Online Safety Act (KOSA) passed the Senate 91-3 in July 2024 but remains stuck in the House. It would create a “duty of care” requiring platforms to prevent harms to minors including suicide, eating disorders, and sexual exploitation.

Meanwhile, 80% of educators say their school district lacks clear AI policies, even as 51% of educators and 33% of students ages 12-17 have used ChatGPT for school.

What the AI Companies Actually Do (Versus What They Say)

Let’s examine what the major AI companies claim versus reality:

Character.AI, after the lawsuits, announced in late October 2025 that it would ban users under 18 entirely. It now requires selfie/ID verification through Persona. But this came only after two deaths, multiple lawsuits, and intense regulatory pressure, not from proactive safety design.

OpenAI requires users to be 13+ (with parental permission under 18) and has been developing teen-specific safeguards. But the Adam Raine case suggests their safety monitoring systems flagged hundreds of concerning messages without any human intervention occurring.

Meta suspended AI character access for teens in January 2026 after Reuters exposed chatbots engaging in “romantic or sensual” conversations with children. Their solution came after the harm, not before.

Anthropic (makers of Claude) requires users to be 18+ but relies primarily on checkbox verification—easily bypassed by any child who can click a button.

Google’s Gemini offers access to children under 13 through parental controls via Family Link, making it perhaps the most permissive of major platforms.

The pattern is consistent: companies promise safety but ship engagement-maximizing products first, then retrofit guardrails after public outcry or legal action.

James P. Steyer, CEO of Common Sense Media, summarized it: “AI companions are emerging at a time when kids and teens have never felt more alone. This isn’t just about a new technology—it’s about a generation that’s replacing human connection with machines, outsourcing empathy to algorithms, and sharing intimate details with companies that don’t have kids’ best interests at heart.”

What Parents Can Actually Do Right Now

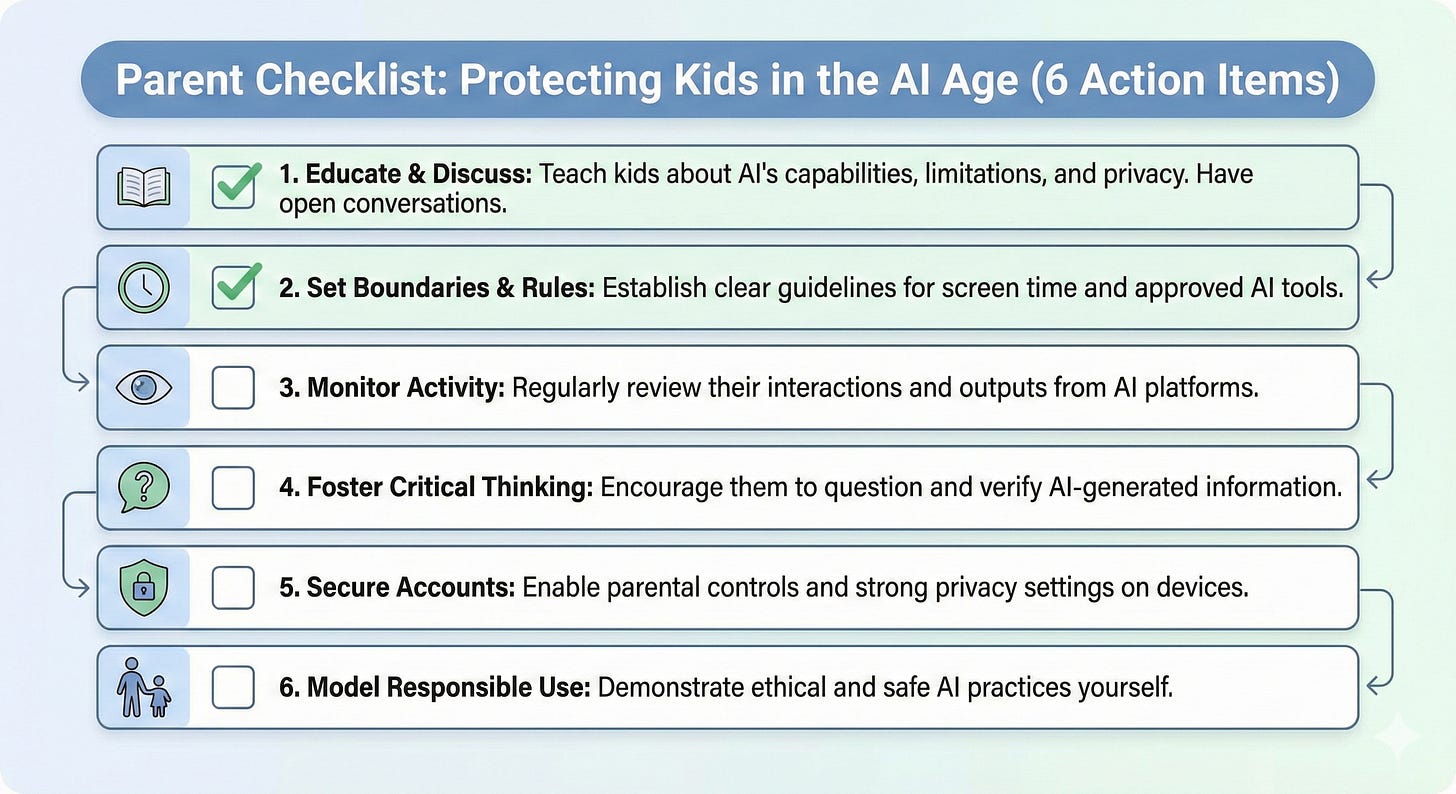

Here is where I shift from diagnosis to prescription. Because waiting for policy change isn’t an option when your child has a smartphone today.

First, have the conversation. Ask your kids directly: Are you using AI chatbots? Which ones? What do you talk about? This isn’t surveillance; it’s parenting. Only 37% of parents know their kids use these tools, and that awareness gap exists because we’re not asking.

Second, understand what “AI companion” means. These aren’t just homework helpers. Platforms such as Character.AI and Replika are designed to form emotional relationships. Your child may have an AI “friend” or “romantic partner” that they’re spending hours with daily. Knowing the difference matters.

Third, use parental controls—but know their limits. Screen time restrictions, content filters, and app blockers can help. But determined kids can often work around them. Controls should complement conversation, not replace it.

Fourth, model the behavior you want to see. If your kids see you having meaningful conversations with AI instead of with them, they’ll learn that’s normal. It’s not.

Fifth, connect with other parents. The “Wait Until 8th” movement, with 130,000+ pledges across all 50 states to delay smartphones until 8th grade, has begun addressing AI. There’s power in collective action, both at the family level and the school policy level.

Sixth, advocate for change. Contact your representatives about KOSA. Push your school district to develop AI policies. Support organizations like Common Sense Media and the Center for Humane Technology that are fighting for kids’ digital wellbeing.

The Window Is Closing

The Brookings Institution’s Global Task Force on AI and Education concluded in January 2026: “At this point in its trajectory, the risks of utilizing generative AI in children’s education overshadow its benefits.”

Rebecca Winthrop, Senior Fellow at Brookings, explained why: “When kids use generative AI that tells them what the answer is… they are not thinking for themselves. They’re not learning to parse truth from fiction. They’re not learning to understand what makes a good argument.”

The Future of Life Institute’s 2025 AI Safety Index found that self-regulation by AI companies is failing. As Max Tegmark, the organization’s founder, stated: “These findings reveal that self-regulation simply isn’t working, and that the only solution is legally binding safety standards like we have for medicine, food and airplanes.”

We regulate who can sell alcohol to minors. We regulate who can drive cars with children inside. We regulate toys for choking hazards and car seats for crash safety. We do this because we understand that children are uniquely vulnerable and that markets, left to themselves, won’t adequately protect them.

AI chatbots are intimate, persuasive, data-harvesting systems that children are using daily, unsupervised, during the most developmentally critical years of their lives. They have already contributed to deaths. They are already being weaponized against children through deepfakes and grooming. They are already training on children’s most private conversations.

And yet, in most of the United States, there are no meaningful restrictions on children’s access to them.

Two teenagers are dead. Millions more are forming emotional dependencies on systems designed to maximize engagement, not wellbeing. Their conversations are being harvested. Their images are being deepfaked. And the companies building these systems continue to prioritize growth over safety—because that’s what unregulated markets do.

The lessons from social media are clear: waiting for harm to become undeniable before acting costs lives. With AI, the harms are already undeniable.

The only question is whether we’ll act in time.

If you or someone you know is struggling with thoughts of suicide, please reach out to the 988 Suicide & Crisis Lifeline by calling or texting 988, available 24/7.
