The Cloud Is Thirsty, and You’re Paying the Tab

How AI data centers are draining our water, straining our grids, and quietly raising your electricity bill — and why distributing the cloud might be the answer nobody’s talking about.

Here is something most people never think about: every time you ask an AI chatbot a question — every search query, every image generation, every email auto-reply — a server somewhere is working hard to produce that answer. And that server is hot. Extremely hot. To keep it from melting down, it needs two things in enormous quantities: electricity and water. Multiply that by the billions of queries happening every single day, and you start to see the outline of a crisis that is quietly reshaping energy markets, draining aquifers, and — here is the part that should concern you personally — driving up your electricity bill.

Let me explain.

The Scale of the Problem

The International Energy Agency published its landmark “Energy and AI” report in April 2025, and the numbers are staggering. Global data center electricity consumption hit roughly 415 terawatt-hours in 2024 — about 1.5% of all electricity generated on Earth. Under the IEA’s base projections, that figure will more than double to 945 TWh by 2030, equivalent to the entire national electricity consumption of Japan. In the United States alone, data centers already consume more than 4% of the country’s electricity, and that share is climbing fast.
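To get a feel for how steep that trajectory is, the two IEA figures quoted above can be turned into an implied annual growth rate. This is only a back-of-the-envelope sketch: it assumes smooth compound growth between 2024 and 2030, which the report itself does not claim, and the variable names are purely illustrative.

```python
# Figures quoted in the paragraph above (IEA "Energy and AI", April 2025).
consumption_2024_twh = 415.0
projection_2030_twh = 945.0
share_of_global_2024 = 0.015  # "about 1.5%" of global electricity

# Assumption: growth compounds evenly over the six years between the two figures.
years = 2030 - 2024
growth_factor = projection_2030_twh / consumption_2024_twh
implied_annual_growth = growth_factor ** (1 / years) - 1

# If 415 TWh is about 1.5% of the total, the total follows by division.
implied_global_total_twh = consumption_2024_twh / share_of_global_2024

print(f"Growth factor 2024 to 2030: {growth_factor:.2f}x")
print(f"Implied compound annual growth: {implied_annual_growth:.1%}")
print(f"Implied global electricity total in 2024: {implied_global_total_twh:,.0f} TWh")
```

Under that assumption, data center demand would be growing at somewhere between 14% and 15% a year, sustained for six years.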

Goldman Sachs projects AI will drive a 165% increase in data center power demand by the end of this decade. To meet that demand, U.S. utilities will need roughly $50 billion in new generation capacity — much of it, uncomfortably, fueled by natural gas and coal.

And then there is the water.

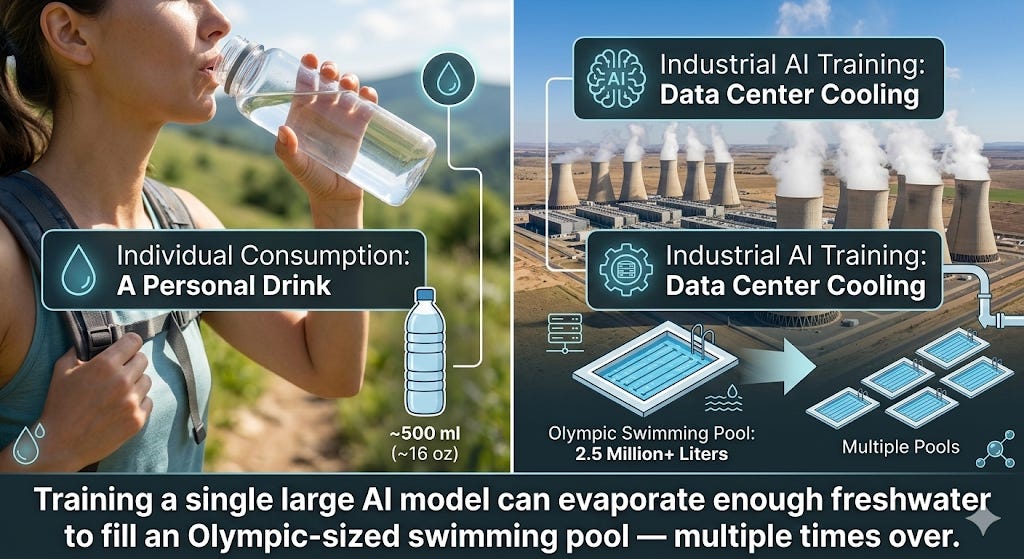

Shaolei Ren, a professor at UC Riverside and arguably the world’s leading researcher on AI’s water footprint, has documented that training a model like GPT-3 directly evaporated 700,000 liters of clean freshwater — enough to manufacture 370 BMWs. His research projects that global AI demand could withdraw 4.2 to 6.6 billion cubic meters of water annually by 2027, more than the total annual water withdrawal of four to six Denmarks. As Ren put it in his TED Talk in Vienna: every time you ask an AI chatbot a question, you are also consuming water — without realizing it.

The major tech companies’ own environmental reports confirm the trajectory. Google’s water consumption has more than tripled since 2016. Microsoft’s usage jumped 22% in a single year. A single Meta facility in Newton County, Georgia drinks 500,000 gallons per day — 10% of the entire county’s water supply. And two-thirds of new data centers built since 2022 have gone up in water-stressed areas.
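The Newton County figure is easier to grasp with a little arithmetic. The sketch below takes the two numbers quoted above at face value and assumes the gallons are U.S. gallons and that the facility draws water every day of the year; both are assumptions, not something the source figures state.

```python
LITERS_PER_US_GALLON = 3.785411784  # exact definition of the U.S. liquid gallon

# Figures quoted in the paragraph above.
facility_gallons_per_day = 500_000
facility_share_of_county = 0.10  # "10% of the entire county's water supply"

# If the facility is 10% of the supply, the whole supply follows by division.
implied_county_gallons_per_day = facility_gallons_per_day / facility_share_of_county

# Assumption: constant draw, 365 days a year.
facility_gallons_per_year = facility_gallons_per_day * 365
facility_liters_per_day = facility_gallons_per_day * LITERS_PER_US_GALLON

print(f"Implied county supply: {implied_county_gallons_per_day:,.0f} gallons/day")
print(f"Facility draw per year: {facility_gallons_per_year:,.0f} gallons")
print(f"Facility draw per day: {facility_liters_per_day:,.0f} liters")
```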

So far so good — or rather, so far so alarming. But here is where things get personal.

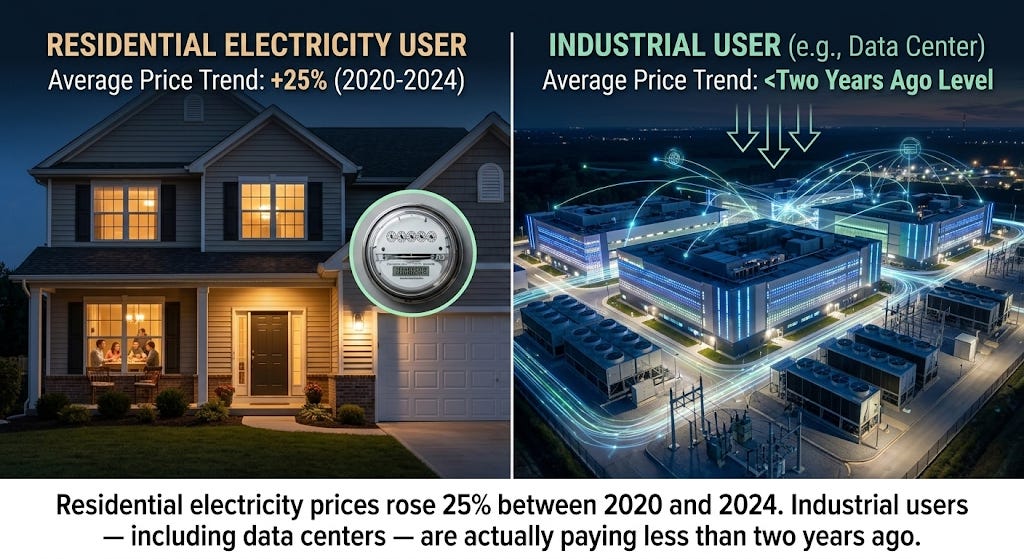

You Are Subsidizing Big Tech’s Electric Bill

Perhaps the most underreported dimension of the AI data center boom is who actually pays for the infrastructure it demands. Spoiler: it is you.

Here is the mechanism. When a massive data center moves into a region, the local utility needs to build new transmission lines, new substations, new generation capacity. Under traditional rate structures, those costs get spread across all ratepayers — residential customers included. The result? Your monthly bill goes up to finance infrastructure you will never use, built to serve a tech company that may be paying a lower per-kilowatt-hour rate than you are.
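A toy model makes the mechanism concrete. Everything in the sketch below is hypothetical: the customer classes, the energy volumes, the upgrade cost, and the household count are invented for illustration, and real rate cases allocate costs with far more elaborate methods (peak demand, voltage level, time of use). It simply contrasts spreading an upgrade across everyone in proportion to energy use with charging it to the class whose growth required it.

```python
from dataclasses import dataclass


@dataclass
class CustomerClass:
    name: str
    annual_mwh: float     # energy the class buys in a year
    caused_upgrade: bool  # True if this class's load growth triggered the upgrade


def socialized_allocation(classes, upgrade_cost):
    """Spread the upgrade cost over every class in proportion to energy use."""
    total_mwh = sum(c.annual_mwh for c in classes)
    return {c.name: upgrade_cost * c.annual_mwh / total_mwh for c in classes}


def cost_causation_allocation(classes, upgrade_cost):
    """Charge the upgrade only to the classes whose growth required it."""
    causer_mwh = sum(c.annual_mwh for c in classes if c.caused_upgrade)
    return {
        c.name: upgrade_cost * c.annual_mwh / causer_mwh if c.caused_upgrade else 0.0
        for c in classes
    }


# Hypothetical utility: all numbers below are made up for illustration.
classes = [
    CustomerClass("residential", 4_000_000, caused_upgrade=False),
    CustomerClass("commercial", 3_000_000, caused_upgrade=False),
    CustomerClass("data center", 3_000_000, caused_upgrade=True),
]
upgrade_cost = 100_000_000  # annualized cost of new lines and substations, USD
households = 400_000

socialized = socialized_allocation(classes, upgrade_cost)
direct = cost_causation_allocation(classes, upgrade_cost)

print(f"Socialized: residential pays ${socialized['residential']:,.0f}, "
      f"or ${socialized['residential'] / households:,.0f} per household")
print(f"Cost causation: residential pays ${direct['residential']:,.0f}, "
      f"data center pays ${direct['data center']:,.0f}")
```

In this invented example, the socialized approach bills households for 40% of an upgrade they did not cause, while the cost-causation approach bills them nothing.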

This is not speculation. Harvard Law School’s Environmental and Energy Law Program has documented the dynamic in detail, concluding that utilities’ traditional approach of spreading infrastructure costs across all ratepayers forces residential consumers to subsidize computing facilities. A Georgetown University analysis found that data centers drove an estimated 63% of price increases in the PJM interconnection region, the grid serving much of the mid-Atlantic. Carnegie Mellon University found that data center growth could raise electricity bills by 8% nationally and as much as 25% in some regional markets.

Nowhere is this dynamic more visible than in Northern Virginia, the so-called Data Center Alley, which hosts over 600 data centers and handles roughly 70% of the world’s internet traffic. Virginia’s Joint Legislative Audit and Review Commission projected that data center growth could push residential bills up by $444 per year by 2040. Yale Climate Connections reported the uncomfortable contrast: between 2020 and 2024, residential electricity prices in the U.S. surged 25%, while industrial users, data centers included, now pay lower average prices than they did two years ago.

And it is not just electricity. The tax incentive picture is equally troubling. At least 37 states offer tax breaks for data centers — breaks that Good Jobs First found lose states between 52 and 70 cents for every dollar exempted. Virginia’s data center tax exemption alone costs $1.6 billion annually — the program was originally estimated at just $1.5 million when it was enacted. That is not a typo. A program designed for millions now costs billions, and taxpayers are paying roughly $1 million per permanent data center job created.
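The gap between the original estimate and today's cost is worth computing rather than eyeballing. The sketch below uses only the figures quoted above. Applying the Good Jobs First loss range to Virginia's program is an illustrative assumption, not a finding from that research, since the range describes state programs in general.

```python
# Figures quoted in the paragraph above.
original_estimate_usd = 1.5e6   # estimate when the exemption was enacted
current_cost_usd = 1.6e9        # annual cost today
loss_low, loss_high = 0.52, 0.70  # cents lost per dollar exempted, as fractions

# How many times larger the program is than first estimated.
overrun_factor = current_cost_usd / original_estimate_usd

# Assumption: the general loss range applies to Virginia's exemption as well.
net_loss_low_usd = current_cost_usd * loss_low
net_loss_high_usd = current_cost_usd * loss_high

print(f"Program cost is about {overrun_factor:,.0f} times the original estimate")
print(f"Illustrative annual net loss: ${net_loss_low_usd / 1e9:.2f}B "
      f"to ${net_loss_high_usd / 1e9:.2f}B")
```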

Why should you care? Because those lost tax revenues come out of your roads, your schools, your municipal services. The bait-and-switch here is elegant in its simplicity: tech companies promise jobs and economic growth, receive massive incentives, then create facilities that employ remarkably few people while consuming extraordinary amounts of public resources.

Communities Are Fighting Back

The backlash is real and growing. According to Data Center Watch, $64 billion in data center projects have been blocked or delayed by local opposition since mid-2024, a figure that rose to roughly $100 billion by mid-2025. At least 142 activist groups across 24 states are organizing, and the opposition is notably bipartisan: 55% of opposing elected officials are Republican, 45% Democratic. More than 230 environmental organizations have called on Congress for a national moratorium on new data center construction.

In The Dalles, Oregon, a small city designated a critical groundwater area back in 1959, a major tech company’s data centers now consume a third of the municipal water supply. In Mesa, Arizona, where data centers requiring millions of gallons per day were approved during a historic drought, a vice mayor called it unconscionable. In Ireland, data centers now consume over 21% of national metered electricity — up from 5% in 2015 — exceeding the consumption of all urban households combined, prompting the grid operator to impose a years-long moratorium on new connections.

The pattern is consistent across geography and political alignment: communities are realizing that the promised economic benefits rarely outweigh the very real costs to their water, their grids, and their wallets.

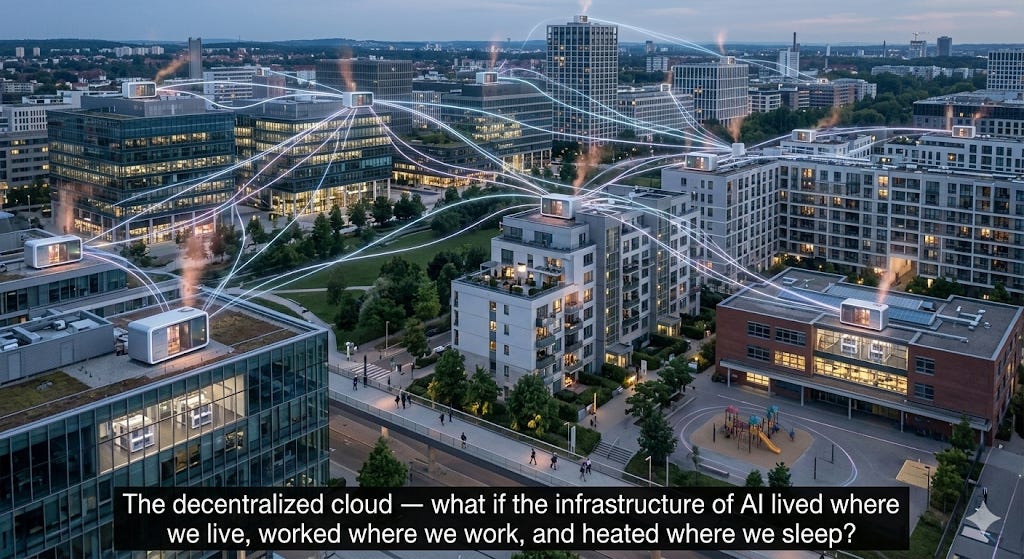

What If the Cloud Lived in Your Neighborhood?

Here is where things get interesting — and where some creative thinking could change the equation entirely.

What if, instead of concentrating millions of servers in monolithic facilities that devour entire regions’ water and power, we distributed them? What if your office building, your apartment complex, even your home contained a small compute node — not a roaring server room, but a quiet, modular unit the size of a filing cabinet?

This is not science fiction. It is already happening.

Qarnot Computing in France distributes servers into building heating systems, converting up to 95% of compute waste heat into hot water for residents. Heata in the UK installs servers inside homes, transferring waste heat into domestic hot-water cylinders. Leafcloud in Amsterdam places servers in apartment complexes, swimming pools, and retirement homes. In the Nordics, this is already mainstream — Microsoft heats thousands of homes in Denmark from data center waste heat, and a joint venture in Espoo, Finland provides 40% of the city’s district heating from compute.

The edge computing and micro data center sector is booming. Vapor IO is building hundreds of micro facilities at cell tower sites across 50 U.S. metro areas. The broader edge data center sector has attracted $3.92 billion in funding over the past decade. Meanwhile, decentralized compute platforms like Akash Network and Render Network are creating marketplaces where distributed GPU resources — even spare capacity on personal machines — can be pooled for AI workloads.

Just imagine: instead of one data center draining a third of a small town’s water supply, thousands of small nodes spread the load so widely that no single community bears the burden. Instead of waste heat venting uselessly into the atmosphere through massive cooling towers, that heat warms your shower water. Instead of your electricity bill subsidizing a transmission line to a tech campus you will never visit, the compute infrastructure pays you — in reduced heating costs, in rental income for hosting a node, in a more resilient local grid.

A 2025 study demonstrated that geographically distributing data center loads eliminates transmission violations and reduces solar energy curtailment by up to 61%. A field demonstration in Phoenix proved that distributed AI compute can cut power demand by 25% during peak hours without breaking a single service agreement.

Now, to be fair — and intellectual honesty demands this caveat — distribution is not a silver bullet. Training the largest AI models requires tightly interconnected GPU clusters that simply cannot function across dispersed nodes. You are not going to train GPT-5 on a network of home servers. But inference (the part where AI models actually answer your questions) is a different story entirely, and it represents the vast majority of real-world AI compute. For inference, edge computing, and the rapidly growing universe of IoT and real-time applications, distribution is not just viable — it is arguably superior.

What Needs to Happen

The European Union is ahead of the curve. Its revised Energy Efficiency Directive requires data centers above 500 kW to report annually on energy consumption, water usage, and waste heat output, with minimum performance standards coming in 2026. Germany goes further still, mandating 100% renewable energy by January 2027 and minimum waste heat reuse targets. Singapore imposed a moratorium on new data centers for three years until it could establish sustainability requirements.

The United States, predictably, lags behind — but the states are innovating. Virginia’s SB 253 would shift grid costs from residential customers to the data centers that actually cause them. Texas now requires data centers to participate in demand response programs and agree to disconnection during shortages. The National Caucus of Environmental Legislators tracks over 60 bills across 22 states addressing data center energy and climate impacts.

But here is what I think is missing from the policy conversation — a framework that actually incentivizes conservation and distribution rather than merely punishing excess. What would that look like?

Conservation credits: Tax incentives that scale with verifiable reductions in water and energy use per unit of compute — not flat exemptions for simply showing up and building.

Distribution bonuses: Preferential permitting and rate structures for companies that distribute compute across multiple smaller facilities rather than concentrating demand in a single location.

Waste heat mandates: Requirements (like Germany’s) that new data centers capture and redistribute waste heat, turning a liability into a community asset.

Transparency as a baseline: No more trade-secret claims over water usage data. If you are consuming public resources at industrial scale, the public has a right to know how much.

Ratepayer firewalls: Structural separation ensuring residential electricity customers never subsidize infrastructure built exclusively for data center demand.
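To show what "scaling with verifiable reductions" could mean for the conservation credits proposed above, here is one possible shape for such a rule. It is a sketch, not a policy design: the function name, the 30% maximum exemption, and the 50% reduction needed for full credit are hypothetical parameters chosen only to make the example run. Intensity could be any audited per-unit measure, such as liters of water per kilowatt-hour of IT load.

```python
def conservation_credit_rate(baseline_intensity, measured_intensity,
                             max_rate=0.30, full_credit_reduction=0.50):
    """Return the share of a tax bill exempted, scaled to verified savings.

    baseline_intensity and measured_intensity are resource use per unit of
    compute. max_rate and full_credit_reduction are hypothetical policy knobs.
    """
    if baseline_intensity <= 0:
        raise ValueError("baseline_intensity must be positive")
    reduction = (baseline_intensity - measured_intensity) / baseline_intensity
    # No credit for getting worse, and no extra credit beyond the target.
    reduction = max(0.0, min(reduction, full_credit_reduction))
    return max_rate * reduction / full_credit_reduction


# Hypothetical facilities measured against a baseline of 1.8 liters per kWh.
no_change = conservation_credit_rate(1.8, 1.8)
partial = conservation_credit_rate(1.8, 1.35)   # 25% reduction
deep_cut = conservation_credit_rate(1.8, 0.5)   # more than the 50% target
got_worse = conservation_credit_rate(1.8, 2.2)

for label, rate in [("no change", no_change), ("25% reduction", partial),
                    ("deep cut", deep_cut), ("got worse", got_worse)]:
    print(f"{label}: {rate:.0%} of the tax bill exempted")
```

The point of the shape is that a facility earns nothing for simply showing up and building: the exemption starts at zero and grows only as measured use per unit of compute falls.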

Where Do We Go from Here?

The AI revolution is not going to slow down. Nor should it — the potential benefits to medicine, education, scientific research, and daily life are real and significant. But the infrastructure powering that revolution cannot continue growing on the backs of residential ratepayers, drought-stricken communities, and overtaxed grids. The current model — build enormous, concentrate demand, externalize costs, and classify consumption data as trade secrets — is not just unsustainable. It is, frankly, unfair.

A Cornell University study published in Nature Sustainability put it plainly: the AI infrastructure choices we make this decade will decide whether AI accelerates climate progress or becomes a new environmental burden.

The good news? The technology for a better model already exists. Distributed compute, waste heat recovery, zero-water cooling, edge infrastructure — these are not theoretical. They are deployed, they are funded, and they are working. What is missing is the political will to make conservation profitable and concentration costly.

One can only dream that policymakers — and the tech companies themselves — will recognize that the cloud does not have to be a storm. It could just as easily be a gentle, distributed rain.

The HAIA Foundation works at the intersection of technology, policy, and human welfare.