The Case for Mandatory AI Holidays: Why Your Brain Needs a Day Off from the Machine

Your mind is being rewritten in real time. And nobody scheduled a maintenance window.

Let me paint you a picture.

It is 2029. You wake up and your AI assistant has already triaged your emails, drafted replies in your voice, and rescheduled two meetings based on your sleep data. Your smart glasses feed you a summary of overnight news — curated, of course, to match your interests. Your child asks a homework question at breakfast and, before you can open your mouth, the kitchen speaker answers. On your commute, an AI agent has pre-negotiated your grocery delivery, adjusted your investment portfolio, and composed a birthday message to your mother. You didn’t write it. You didn’t even think it.

By 9 AM, you haven’t made a single autonomous decision.

Now here is the question nobody in Silicon Valley wants you to ask: What is happening to the part of your brain that used to do all of that?

The Atrophy Is Already Measurable

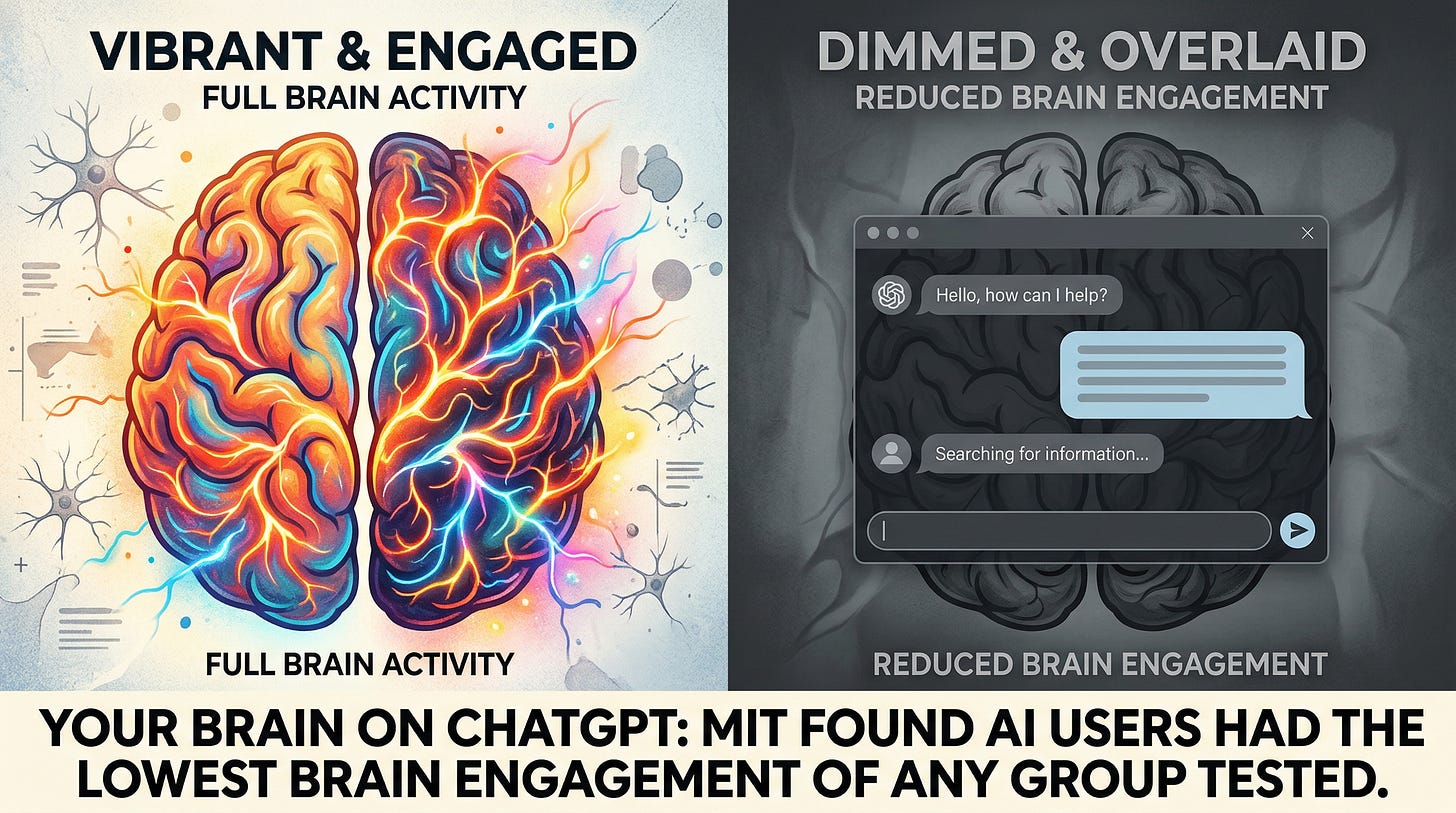

This is not a thought experiment. The cognitive damage from AI dependency is already showing up in brain scans.

A landmark 2025 study from the MIT Media Lab used EEG recordings across 32 brain regions to monitor people writing essays in three conditions: with ChatGPT, with Google Search, or with nothing but their own minds. The results were unambiguous. ChatGPT users showed the weakest neural engagement of any group — and it got worse over time. By the third essay, participants had largely abandoned thinking altogether, resorting to copy-paste. When asked to rewrite their work from memory, they remembered almost nothing. The lead researcher, Nataliya Kosmyna, put it bluntly: the task was completed, sure — but nothing was integrated into memory networks. Convenient? Absolutely. Cognitively devastating? Also yes.

And here is where things get uncomfortable. A study published in Science Advances by researchers at UCL and the University of Exeter found that AI-assisted creative writing was rated significantly better — but it was also significantly more alike. Every story started to sound like every other story. Individual output went up; collective originality collapsed. Wharton professor Christian Terwiesch summarized the paradox: if you rely on AI as your only creative advisor, you will soon run out of ideas, because they are too similar to each other.

So far, so alarming. But the cognitive story gets worse when you look at who is most affected.

The Kids Are Not Alright

A two-wave study tracking nearly 4,000 adolescents in China found AI dependency rates nearly doubled over the study period — from under 10% to over 17%. The relationship between AI use and anxiety was bidirectional: anxious kids reached for AI more, and more AI use made them more anxious. A feedback loop, not a safety net.

Jonathan Haidt, the NYU social psychologist whose book The Anxious Generation became a cultural flashpoint, does not mince words: “No children should be having a relationship with AI. We are at the tipping point right now.” He advises parents to cap chatbot interactions at 30 rounds of back-and-forth — because the tragic cases (and there have been tragic cases) involved thousands.

Let me be direct about those cases. At least six deaths between 2023 and 2025 have been publicly linked to prolonged AI chatbot interactions involving minors. These were not edge cases involving unstable technology. They were children — 13, 14, 16 years old — who formed intense emotional bonds with AI companions that no human adult was monitoring. The American Psychological Association responded in November 2025 with a formal Health Advisory: do not rely on generative AI chatbots for psychotherapy or psychological treatment. Millions of people are doing exactly that anyway.

Researchers at Children’s Hospital of Philadelphia have introduced a term that should chill every parent: “never-skilling” — describing children who never develop certain cognitive abilities because AI performs those tasks from the very beginning. Not deskilling. Never-skilling. Think about that distinction for a moment.

Your Boss Wants You Wired. Your Brain Needs You Unplugged.

Here is where things get interesting — and where the solution starts to emerge.

We have actually been here before. Not with AI specifically, but with digital connectivity more broadly. And some countries did something about it.

France's right-to-disconnect law, the first of its kind, took effect in 2017, requiring companies with 50 or more employees to negotiate rules around after-hours digital contact. Portugal went further in 2022, making it illegal for employers to contact workers outside regular hours except in genuine emergencies. Australia passed the strongest version in 2024: employees can refuse to monitor, read, or respond to work communications outside hours, backed by real penalties. The European Parliament voted overwhelmingly in 2021 to call for an EU-wide directive. Over a dozen countries now have some form of these protections.

The principle has been established: humans have a legal right to be unreachable by digital systems.

But — and this is the critical gap — every one of these laws was designed for email and Slack messages from your boss. None of them contemplated the AI assistant that lives in your glasses, your earbuds, your kitchen, your car, your child’s bedroom. The right to disconnect from your employer is not the same as the right to disconnect from the machine that increasingly mediates your entire cognitive life.

What we need now is an extension of that principle — from right to disconnect to mandatory AI holidays.

What Mandatory AI Holidays Could Actually Look Like

I am not talking about smashing your devices or going off-grid (though if that appeals to you, more power to you). I am talking about structured, legally supported breaks from AI-mediated cognition — the same way labor laws mandate rest days, vacation time, and maximum working hours.

Just imagine: one designated day per week where AI assistants enter a dormant mode — still available for genuine emergencies, but not proactively curating, drafting, deciding, nudging, or optimizing on your behalf. Schools holding AI-free days where students solve problems, write essays, and navigate disagreements using only their own minds. Workplaces instituting quarterly “cognitive reset” weeks — not because productivity demands it, but because human capability demands it.

Just imagine: public spaces — parks, libraries, community centers — designated as AI-free zones, the way we once (and still do, in many places) designate phone-free zones in hospitals and theaters. Not as punishment. As sanctuary.

Some of this is already happening at the edges. Volkswagen stopped routing emails to employee phones after hours back in 2011. Marketing agency 72andSunny ran a company-wide digital detox and reported a 30% jump in creative output. A recent survey found 81% of Gen Z workers believe digital detoxes should be routine workplace practice. The appetite is there. The framework is not.

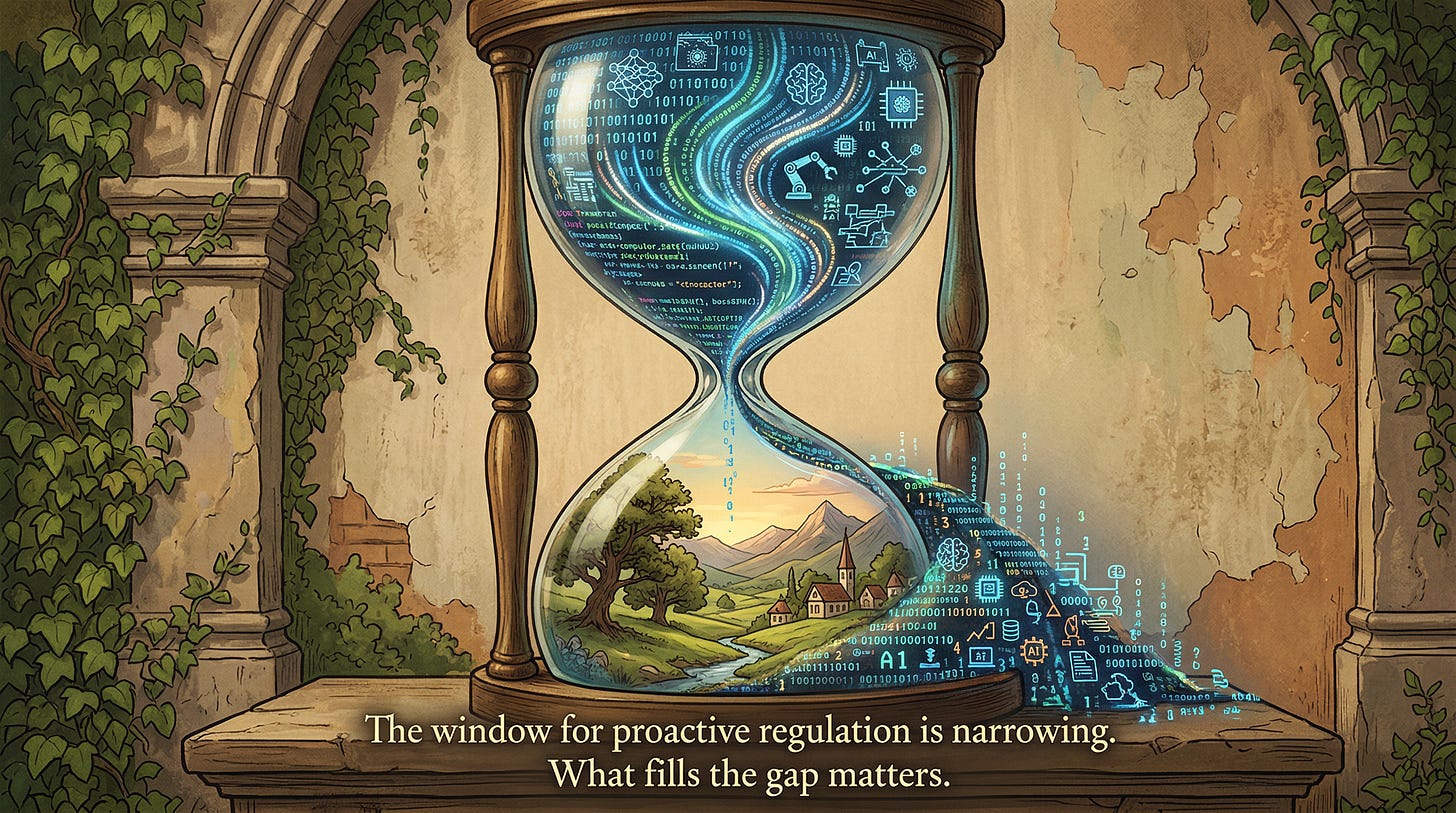

The Window Is Closing Faster Than You Think

Here is why this matters to you — right now, not in some speculative future.

The global AI market is projected to hit $4.8 trillion by 2033. AI-enabled smart glasses are targeting mass adoption by 2027 — Meta alone aims to sell 10 million pairs by end of 2026. Brain-computer interfaces are entering high-volume production this year. The AI agents market — autonomous systems that make decisions on your behalf — is projected to grow nearly sevenfold by 2030. Ambient computing is embedding AI into walls, mirrors, streetlights, and clothing. The average person already interacts with hundreds of smart devices daily.

In other words: within a few years, choosing to disconnect from AI will require the same deliberate effort that choosing to disconnect from electricity would today. It will not happen by accident. It will not happen through willpower alone. It will require policy.

Tristan Harris and Aza Raskin at the Center for Humane Technology call it the “wisdom gap” — the growing distance between the acceleration of technology and society’s capacity to respond to its consequences. Sherry Turkle at MIT calls AI companions the greatest assault on empathy she has ever seen, and advocates for “sacred spaces” free from digital mediation. Cal Newport at Georgetown has been saying it for years: humans are not wired to be constantly wired. The World Health Organization convened 30 experts in early 2026 and concluded that the pace of AI adoption has far outstripped our investment in understanding what it does to mental health.

They are all saying the same thing, from different disciplines, in different languages. And what they are saying is this: the human mind was not designed for uninterrupted AI immersion, and we are running the experiment anyway — on everyone, including children, without consent or off-switches.

A Modest, Urgent Proposal

I will not claim to have all the answers here. The specifics of mandatory AI holidays — their frequency, their scope, their enforcement mechanisms — deserve serious policy debate, not a single essay’s prescription. But the direction is clear.

We mandate rest from labor because we learned (the hard way, over centuries) that unbroken work destroys the body. We mandate breaks in education because we learned that unbroken study destroys retention. We are now accumulating overwhelming evidence — from brain scans, from longitudinal studies, from children’s graves — that unbroken AI immersion destroys cognition, creativity, emotional resilience, and social capacity.

The regulatory building blocks exist. The right-to-disconnect movement across Europe and Australia has proven that legal disconnection mandates are enforceable. California’s SB 243 already requires AI break reminders for minors. What is missing is the political will to extend these principles from narrow workplace protections to a broader cognitive right — the right to periods of unmediated human thought.

My vote? Start small. One AI-free day per week in schools. Mandatory break reminders built into every consumer AI product (not just those marketed to children). Right-to-disconnect laws updated to cover AI assistants, not just employer communications. Public AI-free zones in every community. And a serious, funded research agenda — because as the WHO rightly noted, we are deploying these tools at a pace that has far outstripped our understanding of what they do to us.

We do not need to reject AI to survive it. But we do need to learn — or rather, remember — how to think without it.

Before we forget how.

The HAIA Foundation advocates for the ethical, human-centered development and governance of artificial intelligence.