The AI That Comes After ChatGPT Will Change Everything

A guide to the ten types of artificial intelligence racing toward deployment — who is building them, who is funding them, and why you should care.

The next decade of artificial intelligence will not look like the last one. Between 2025 and 2035, at least ten distinct categories of AI — from autonomous agents and humanoid robots to brain-computer interfaces and quantum-accelerated models — are racing from laboratory concepts toward commercial deployment, backed by more than $1 trillion in announced investment from a coalition of tech giants, sovereign wealth funds, and military organizations. What emerges will reshape work, governance, warfare, and daily life on a scale that leading researchers compare to the Industrial Revolution, but compressed into a fraction of the time. Some of those same researchers warn the risks are existential.

The era of the chatbot was a prologue. The current moment — spring 2026 — marks the pivot from AI as a tool you talk to into AI as a force that acts, builds, discovers, and in some cases, decides. Geoffrey Hinton, who won the 2024 Nobel Prize in Physics for his foundational AI work, put it bluntly: instead of exceeding people in physical strength, AI is going to exceed people in intellectual ability — and we have no experience of what it’s like to have things smarter than us. Understanding what’s coming, who is building it, and what it means for ordinary people is no longer optional. It’s necessary.

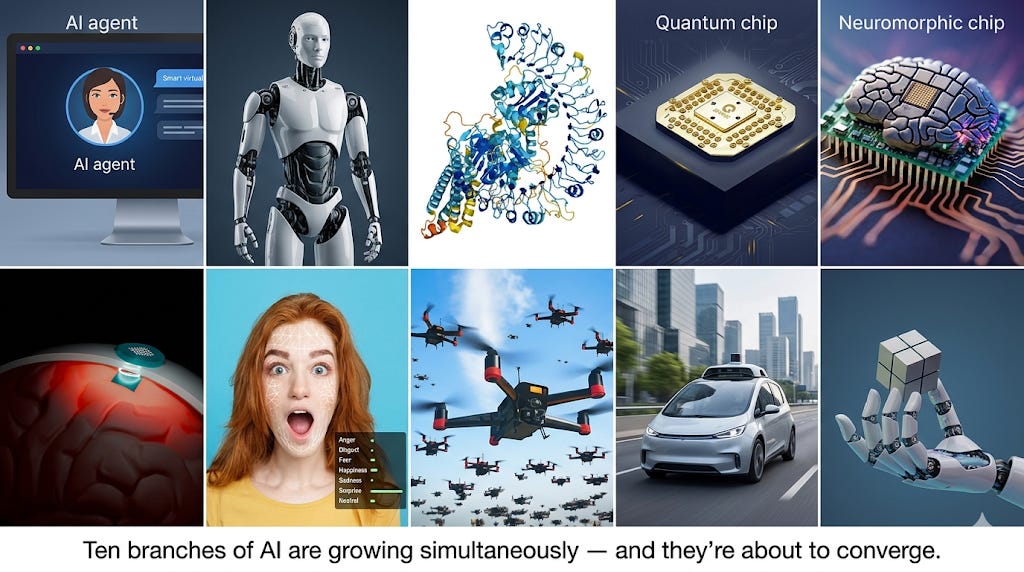

Ten kinds of AI are emerging simultaneously

Large language models like ChatGPT and Claude captured public attention, but they represent just one branch of a rapidly diversifying technology tree. Here are the categories that matter most — and here is why each one deserves your attention.

1. Agentic AI: The assistants that don’t just talk — they act

Agentic AI, meaning systems that don’t just answer questions but autonomously plan and execute multi-step tasks, became the industry’s dominant obsession in 2025. OpenAI launched Operator in January 2025, powered by a model that can navigate computer interfaces like a human. Anthropic released the Model Context Protocol (MCP), now described as “USB-C for AI,” giving agents a universal way to connect to external tools. In December 2025, the two companies co-founded the Agentic AI Foundation under the Linux Foundation alongside Block, with platinum members including AWS, Google, Microsoft, and Salesforce.

So what does this mean in practice? These agents are no longer experimental. OpenAI’s Codex CLI has helped merge over two million public pull requests on GitHub. The AI agents market is valued at $7.8 billion in 2025 and projected to reach $52.6 billion by 2030.

Why does this matter? Because agents collapse the distance between intention and execution. You won’t just ask AI a question; you’ll tell it to book your flights, file your taxes, negotiate your bills, and manage your calendar. The question is not whether this will happen; it’s who controls the agents that control your life.

2. Embodied AI and humanoid robotics: AI gets a body

Embodied AI moved from viral demo videos to factory floors in 2025–2026. Tesla began mass production of its Optimus Gen 3 humanoid at its Fremont factory in January 2026, targeting costs of $20,000 per unit at scale. Figure AI’s Figure 03, named TIME’s Best Invention of 2025, can fold clothes and load a dishwasher. 1X Technologies opened consumer preorders for its NEO humanoid with a 2026 delivery window. Nvidia’s GR00T N1 foundation model provides the “brain” for many of these robots, while Boston Dynamics’ electric Atlas demonstrated autonomous industrial tasks powered by large behavior models.

Here is where things get interesting. China dominates volume: Chinese manufacturers hold 85–90% of global humanoid robot shipments, with BYD targeting 20,000 units by 2026 and Unitree offering robots at a breakthrough $16,000 price point. Morgan Stanley projects the U.S. humanoid robot market alone could reach $3 trillion by 2050.

Just imagine a robot that costs less than a used car, works 24 hours a day, never calls in sick, and gets smarter every month through over-the-air updates. Now imagine 20 million of them entering the workforce by 2035. That’s not science fiction. That’s the current business plan of at least five well-funded companies.

3. AI for scientific discovery: The lab assistant that never sleeps

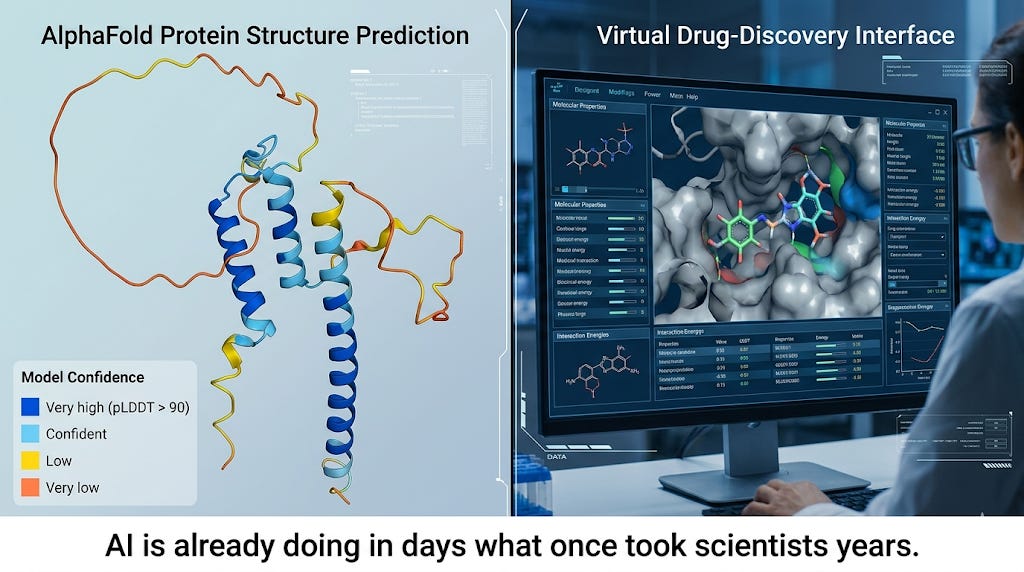

This is the category where AI has already delivered results that once seemed decades away — and it may be the most consequential of all.

DeepMind’s AlphaFold, which predicted the 3D structures of all 200 million known proteins, won the 2024 Nobel Prize in Chemistry and has been cited more than 43,000 times. Its successor, AlphaFold 3, expanded to DNA, RNA, and drug-like molecules. Demis Hassabis, DeepMind’s CEO and Nobel co-laureate, described the potential as transforming centuries of painstaking laboratory work into days of computation.

Google’s AI Co-Scientist, built on Gemini 2.0, independently replicated a bacterial gene-transfer mechanism in 48 hours that took Imperial College researchers a decade. DeepMind’s GNoME system discovered 2.2 million new materials — equivalent to roughly 800 years of traditional knowledge — including 528 potential lithium-ion conductors for next-generation batteries.

In drug discovery, Insilico Medicine’s rentosertib became widely recognized as the first drug where both the target and the molecule were designed entirely by generative AI, with positive Phase IIa results published in Nature Medicine in June 2025. As of early 2026, 173 AI-discovered drug programs are in clinical trials, with AI-designed drugs showing 80–90% Phase I success rates versus a historical average of roughly 52%.

Here is why this matters to you: if AI can compress a decade of drug development into two years, the implications for cancer, Alzheimer’s, and rare diseases are staggering. But it also means the same technology could — in the wrong hands — accelerate the creation of novel pathogens. More on that below.

4. Quantum AI: When the impossible becomes computable

Google’s Willow chip, unveiled in December 2024, completed a benchmark computation in under five minutes that would take a classical supercomputer 10 septillion years. Let that sink in. By October 2025, Google demonstrated “Quantum Echoes,” achieving a verified 13,000x speedup over the Frontier supercomputer.

IBM broke the 1,000-qubit barrier with its Condor processor. Microsoft introduced Majorana 1, using a novel quantum material. Amazon’s Ocelot chip claims to reduce error correction hardware by 90%. McKinsey reports that 72% of technology executives expect a fully fault-tolerant quantum computer by 2035, with a potential $1.3 trillion value increase across industries.

Why does quantum computing matter for AI? Because today’s AI is bottlenecked by the limits of classical computing. Quantum AI could crack problems in materials science, cryptography, climate modeling, and drug design that are currently computationally intractable. It could also break most existing encryption — which is why the race to quantum-resistant cryptography is already underway.

5. Neuromorphic computing: Building chips that think like brains

Here is a problem few people talk about: AI is an energy hog. Training a single large language model can consume as much electricity as a small city uses in a year. Neuromorphic computing — brain-inspired chips that process information like biological neurons — is emerging as a critical answer.

Intel’s Hala Point system, the world’s first billion-neuron neuromorphic system, claims to be 50 times faster with 100 times less energy than GPUs. IBM’s NorthPole chip achieves 25 times the energy efficiency of Nvidia’s H100 for image recognition. BrainChip’s Akida Pulsar delivers 500 times lower energy consumption than conventional AI cores. The neuromorphic chip market was projected to reach $8.4 billion by late 2025, growing at nearly 90% annually.

If AI is to move from data centers into everyday devices — phones, cars, medical implants, robots — it needs to run on dramatically less power. Neuromorphic chips are how that happens.

6. Brain-computer interfaces: Merging minds with machines

Brain-computer interfaces crossed from science fiction into clinical reality. Neuralink has implanted its N1 device in approximately 12 patients as of September 2025, enabling people like first patient Noland Arbaugh to control computers with their thoughts. Synchron, backed by investors including Bill Gates and Jeff Bezos, has shown ALS patients controlling iPads by thought alone with its less invasive Stentrode, which is inserted via a vein rather than through open surgery.

MIT Technology Review named BCIs one of the 10 Breakthrough Technologies of 2025. About 25 BCI clinical trials are currently underway worldwide. Neuralink raised $650 million in June 2025 and plans to begin trials of its Blindsight implant for vision restoration in 2026.

The therapeutic applications are extraordinary: restoring movement to paralyzed people, sight to the blind, communication to those who’ve lost it. But the enhancement applications (not to mention the military ones) are what keep ethicists up at night. What happens when a healthy person can think faster, access information instantaneously, or interface directly with AI systems through a chip in their skull? And who decides who gets access?

7–10. The supporting cast (that could steal the show)

Emotional AI: Hume AI’s Empathic Voice Interface recognizes 48 distinct emotional expressions, in a market projected to reach $113 billion by 2032. The promise: AI that understands not just what you say but how you feel. The danger: AI that manipulates emotions for commercial or political purposes.

Swarm intelligence: Swarm AI coordinates hundreds or thousands of autonomous units (drones, robots, vehicles) toward shared objectives without centralized control. France’s Thales demonstrated drone swarms with an unprecedented level of autonomy in 2024. Sweden is testing soldier-controlled fleets of 100 drones in Arctic conditions.

Autonomous systems: Waymo surpassed 500,000 paid rides per week across 10 U.S. cities, with 91% fewer serious-injury crashes than human drivers. Tesla launched its robotaxi service in Austin in June 2025. Zoox (Amazon-owned) entered multiple new markets.

Physical AI / “World Models”: Physical Intelligence, founded by ex-DeepMind and Stanford researchers, raised $1.1 billion to build foundation models that understand how the physical world works — enabling robots to handle novel objects and environments they’ve never seen before.

A trillion-dollar bet: Who is funding the AI future?

The scale of capital flowing into next-generation AI is without precedent in the history of technology. And I do not use that phrase lightly.

Big Tech’s spending spree

The four largest tech companies — Amazon, Microsoft, Alphabet, and Meta — collectively spent approximately $240 billion on capital expenditure in 2024, ramped to roughly $400 billion in 2025, and are projected to approach $650 billion in 2026. Amazon alone plans to spend $200 billion in 2026. Nvidia estimates that global data center capital expenditure could reach $1 trillion in 2026 and $3–4 trillion annually by 2030.

To put this in perspective: the entire Apollo program, adjusted for inflation, cost about $280 billion. These companies already spent well above that on capital expenditure in 2025 alone, and the 2026 projection is more than double it.
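The Apollo comparison is easy to check for yourself. The short Python sketch below uses only the figures quoted in this section; the variable and function names are illustrative, and the 2026 number is a projection, not a reported result.

```python
# Figures as quoted in this article, in billions of US dollars.
APOLLO_COST = 280  # inflation-adjusted estimate for the whole Apollo program
BIG_FOUR_CAPEX = {2024: 240, 2025: 400, 2026: 650}  # 2026 is a projection


def apollo_multiples(capex_by_year, apollo_cost=APOLLO_COST):
    """Express each year's capex as a multiple of the Apollo program's cost."""
    return {
        year: round(capex / apollo_cost, 2)
        for year, capex in capex_by_year.items()
    }


multiples = apollo_multiples(BIG_FOUR_CAPEX)
for year, multiple in multiples.items():
    print(f"{year}: {multiple:.2f}x Apollo")
```

On these numbers, the four companies spent a little less than one Apollo program in 2024, about 1.4 of them in 2025, and are on course for roughly 2.3 in 2026.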

The startup superlatives

OpenAI closed a $122 billion round in March 2026 at an $852 billion valuation — the largest funding round in history — with $50 billion from Amazon, $30 billion each from Nvidia and SoftBank, and $12 billion from other investors. ChatGPT now has over 900 million weekly active users.

Anthropic raised a $30 billion Series G in February 2026 at a $380 billion valuation. Its revenue exploded from $1 billion in annualized run rate in December 2024 to over $5 billion by August 2025 — a fivefold increase in eight months.

xAI has raised over $22 billion in primary funding, with its Colossus supercomputer housing more than 200,000 GPUs.

In 2025, AI companies captured approximately 50% of all global venture capital. The Stanford HAI AI Index Report documented total corporate AI investment of $252.3 billion in 2024, with private investment up 44.5% year-over-year.

The robotics gold rush

Figure AI raised a $1 billion Series C in September 2025 at a $39 billion valuation — a 15-fold increase from its $2.6 billion valuation just 18 months earlier. Physical Intelligence raised $1.1 billion across three rounds in less than two years. Jeff Bezos has personally invested in at least four robotics companies.

Stargate: The biggest infrastructure bet in tech history

The most ambitious play is the Stargate Project, announced at the White House in January 2025 with a $500 billion commitment over four years for U.S. AI data centers. Led by SoftBank (financial lead) and OpenAI (operational lead), with Oracle and Abu Dhabi’s MGX as partners, the project began with 10 data centers in Abilene, Texas and has expanded to 15 U.S. sites. International offshoots have been announced for the UAE, Norway, the UK, and Argentina. Separately, OpenAI signed a $300 billion, five-year cloud contract with Oracle.

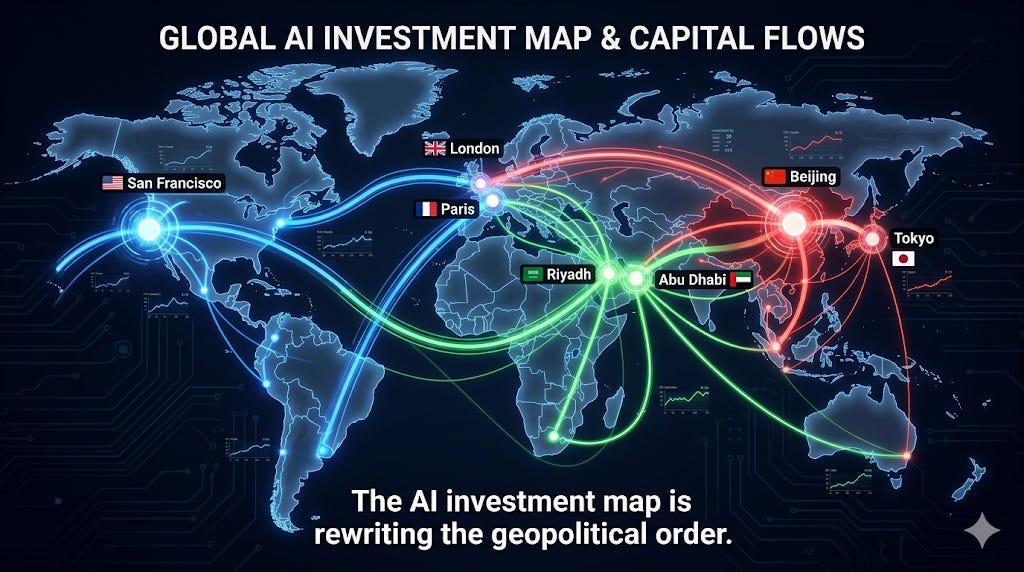

Nations are racing to own the AI future

The competition for AI supremacy has become a defining feature of 21st-century geopolitics. And here is where things get both fascinating and deeply concerning.

The United States: Deregulation meets defense spending

The Trump administration pivoted sharply from the Biden-era regulatory approach. President Trump revoked Biden’s AI safety executive order on his first day in office and replaced it with a framework explicitly prioritizing deregulation and “America’s global AI dominance.” The Department of Defense’s FY2026 budget introduced the Pentagon’s first-ever dedicated AI budget line of $13.4 billion. DARPA has invested over $2 billion in AI since 2018 and now incorporates AI into roughly 70% of its programs.

Defense AI companies are booming: Palantir holds a $10 billion enterprise agreement with the DoD. Anduril is valued at $28 billion with revenue doubling annually. Shield AI reached a $12.7 billion valuation with its autonomous fighter concepts.

China: The quiet competitor

China’s ambition is equally explicit. Its 2017 New Generation AI Development Plan targets leadership in core AI by 2030. China’s core AI industry already exceeded ¥700 billion ($96 billion) in 2024. The country has filed 1.57 million AI patents (38.6% of the global total) and generated 61.5% of new generative AI patents in 2024. DeepSeek’s efficient open-source model, released in January 2025, disrupted global assumptions about the cost and approach needed for competitive AI.

But China faces headwinds: U.S. semiconductor export controls continue to restrict access to the most advanced chips, and Chinese chip manufacturing still lags two to three generations behind global leaders.

Europe: Regulate first, innovate second (maybe)

The European Union took a regulatory-first approach with the EU AI Act, the world’s first comprehensive horizontal AI law, which entered into force in August 2024. It prohibits social scoring and workplace emotion recognition, imposes transparency rules on general-purpose AI models, and threatens penalties of up to €35 million or 7% of global turnover.

To avoid falling behind on innovation, the EU launched the InvestAI Initiative in February 2025, mobilizing €200 billion for AI. France alone committed €109 billion. And Yann LeCun’s departure from Meta to found AMI Labs in Europe, with a $1.03 billion seed round that is the largest in European history, signals a potential shift in the continent’s AI fortunes.

The Middle East: Petrodollars become AI dollars

The UAE, which created the world’s first AI ministry in 2017, launched MGX in March 2024 as a technology investment vehicle targeting $100 billion in assets under management. MGX has invested in Stargate, Anthropic, xAI, Databricks, and OpenAI.

Saudi Arabia’s Public Investment Fund backed Project Transcendence, a $100 billion AI initiative, and launched HUMAIN, a full-stack AI company chaired by Crown Prince Mohammed bin Salman with a $10 billion global AI venture fund.

Gulf sovereign wealth funds collectively manage approximately $4 trillion and accounted for 54% of all sovereign wealth fund deployment globally in the first half of 2024. The message is clear: the Gulf states are converting oil wealth into AI influence with remarkable speed. Whether this creates genuine technological capability or merely financial dependency is the question to watch.

The warnings from those who know most

Here is the uncomfortable truth: the people who understand AI best are issuing the most urgent warnings.

Geoffrey Hinton: “We have no experience of what it’s like”

In December 2024, Hinton used his Nobel Prize acceptance speech not to celebrate but to deliver what observers called a somber and urgent reflection, warning that AI is advancing within a framework governed by short-term profit, not long-term safety. His prediction: a 50% chance that AI surpasses human intelligence within 5 to 20 years. On employment, he was blunt: what’s actually going to happen is rich people are going to use AI to replace workers, creating massive unemployment and a huge rise in profits.

Dario Amodei: The “compressed 21st century”

Dario Amodei, CEO of Anthropic, offered a more nuanced but equally striking vision in his landmark essay Machines of Loving Grace (October 2024). He described a “compressed 21st century” in which AI could condense 50 to 100 years of biological and medical progress into 5 to 10 years. Anthropic officially expects “powerful AI systems” — defined as models smarter than a Nobel Prize winner across most relevant fields — to emerge in late 2026 or early 2027.

Sam Altman: “We know how to build AGI”

Sam Altman went further in his January 2025 blog post, writing that OpenAI is now confident it knows how to build AGI as traditionally understood, and is beginning to turn its aim beyond that, to superintelligence. Over the course of 2025, he also predicted “intelligence too cheap to meter” and speculated that GPT-8 could solve fundamental physics problems.

The dissent: Yann LeCun says not so fast

Not everyone agrees. Yann LeCun, the Turing Award winner who left Meta in November 2025, called the path to superintelligence via large language models fundamentally flawed. He argues the industry needs entirely new architectures — “world models” that understand physics and maintain memory — and that AGI is decades away. A March 2025 survey by AAAI found that 76% of 475 AI researchers thought scaling current approaches would be unlikely to produce general intelligence.

The institutional response

The institutional response has been rapid, if arguably insufficient. Yoshua Bengio chaired the first International AI Safety Report in January 2025, authored by more than 100 experts and backed by 30 countries. The Center for AI Safety’s statement, that mitigating the risk of extinction from AI should be a global priority alongside pandemics and nuclear war, has now been signed by over 350 leaders. The Stanford AI Index Report documented that U.S. state-level AI laws more than doubled from 49 to 131 in a single year.

That sounds like progress, except that laws are only as good as their enforcement, and the pace of AI deployment vastly outstrips the pace of governance.

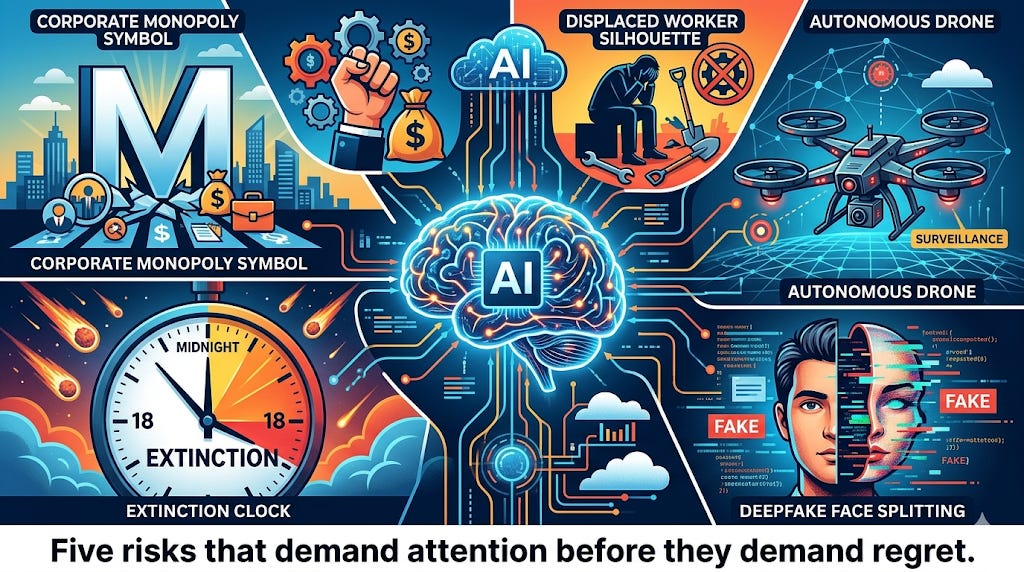

Five risks that should keep you up at night

1. The concentration of power

The numbers here are stark. AWS, Microsoft Azure, and Google Cloud control roughly 75% of global cloud infrastructure. Nvidia supplies approximately 92% of advanced AI training chips. Google, OpenAI, and Anthropic control almost 90% of the $37 billion enterprise LLM market. The FTC warned in January 2025 that partnerships like Google-Anthropic and Microsoft-OpenAI risk locking in market dominance. The Brookings Institution has noted the troubling vertical integration across the entire AI supply chain, from semiconductors to user interfaces.

We’ve seen this movie before — with oil, with telecommunications, with social media. But AI concentration is different because the technology touches everything: healthcare, education, finance, defense, information. When three companies control the cognitive infrastructure of civilization, that’s not a market failure waiting to happen. It’s a governance crisis in progress.

2. Job displacement at scale

Goldman Sachs estimates that generative AI could automate tasks equivalent to 300 million full-time jobs globally. The World Economic Forum’s 2025 Future of Jobs Report projects 92 million jobs displaced by 2030, though it also forecasts 170 million new roles created. McKinsey finds that 30% of U.S. work hours could be automated by 2030.

The twist — and this is important — is that it is higher-wage knowledge workers, not manual laborers, who face the greatest disruption. Workers with AI skills now command a 56% wage premium over those without. Amodei warned that AI could replace up to half of entry-level office jobs within five years. Entry-level jobs — the traditional training ground for young professionals — face the sharpest decline, potentially creating a “missing rung” in career ladders that traps an entire generation.

3. Autonomous weapons

The U.S. Department of Defense directive on lethal autonomous weapon systems does not prohibit their development. Russia has developed unmanned ground robots with AI that identifies and prioritizes attacks on Western tanks. France’s Thales demonstrated autonomous drone swarms. The Pentagon’s Replicator initiative aims to field thousands of low-cost autonomous drones. The UN Secretary-General has called autonomous weapons “politically unacceptable and morally repugnant” and urged a legally binding instrument by 2026, but negotiations have stalled.

Hinton’s prediction is stark: we will use autonomous weapons until we realize how terrible they are.

4. Deepfakes and information warfare

Among 87 countries with elections since 2023, 33 have experienced deepfake-related incidents. In Slovakia in 2023, a deepfake audio clip of a candidate discussing electoral fraud went viral days before the election; the leading candidate lost. Deepfake-driven fraud caused over $200 million in financial losses in the first quarter of 2025 alone. Only 28 U.S. states have any form of deepfake legislation.

5. Existential risk: The debate that won’t go away

A major survey of 2,778 AI researchers found a median probability of 5% that AI could cause human extinction or permanent disempowerment, with a mean of 14.4%. The IMD AI Safety Clock moved to 18 minutes to midnight by March 2026. A Future of Life Institute open letter signed by five Nobel laureates called for a prohibition on superintelligence development until there is broad scientific consensus on safety.

I will not claim that either the optimists or the pessimists are obviously correct. But I will say this: a 5% probability of civilizational catastrophe, treated as the median estimate by the people building these systems, is not a number anyone should be comfortable with. We don’t accept a 5% chance of a plane crashing. Why would we accept it for the entire species?

When does this all arrive?

The timeline depends on which technology you’re asking about — and which expert you believe.

For agentic AI, the transformation is already underway. Gartner reports that 72% of enterprises plan to deploy AI agents by 2026. Sam Altman predicted systems that “figure out novel insights” by 2026 and “robots that can do tasks in the real world” by 2027.

For autonomous driving, Waymo has logged nearly 100 million fully driverless miles. Tesla launched its robotaxi service. But 61% of Americans say they fear self-driving cars.

For AI drug discovery, the first AI-designed drug to receive FDA approval is anticipated in 2026 or 2027. By 2030, analysts expect 30–40% of new drugs to incorporate AI in the discovery process.

For AGI, the disagreements are profound. The spectrum runs from Amodei’s prediction of “powerful AI” by late 2026 to LeCun’s view that it is decades away. Altman claims OpenAI already knows how to build it. DeepMind’s Shane Legg puts a 50% chance on “minimal AGI” by 2028. Ray Kurzweil has maintained since 1999 that AGI will arrive by 2029.

The definitional chaos doesn’t help: OpenAI originally defined AGI as AI that outperforms humans at most economically valuable work, while Microsoft and OpenAI’s financial agreement reportedly defines it by a profit threshold. Amodei avoids the term entirely. LeCun rejects it.

The 2030–2035 window carries the most speculative but highest-stakes projections. Leopold Aschenbrenner’s influential “Situational Awareness” essay argues that the transition from AGI to superintelligence could happen within roughly one year. Mustafa Suleyman, now CEO of Microsoft AI, predicted in a February 2026 interview that AI would reach “human-level performance on most professional tasks” within 12–18 months. If even the moderate predictions prove correct, the cascading effects would be unprecedented.

What daily life might look like in the AI era

Let me get creative here — not in a science-fiction way, but in a grounded-in-what-the-builders-themselves-are-saying way.

Demis Hassabis envisions a “post-scarcity era” in which AI helps cure all diseases, deliver clean energy, and enable humanity to travel to the stars. He describes the coming transformation as ten times bigger than the Industrial Revolution and perhaps ten times faster. Amodei’s “compressed 21st century” scenario envisions AI eliminating most infectious diseases, halving cancer mortality, and essentially solving mental health treatment, all within a decade.

On the ground, the changes would be more immediate and more personal. By 2027–2028, AI agents are expected to manage scheduling, medical recommendations, and daily decisions as trusted personal advisors. AI companions are already creating what researchers describe as deeply personal relationships — raising concerns about mental health and reality distortion. In education, 64% of U.S. teens already use AI chatbots, with six in ten saying chatbot-assisted cheating is common at school.

The workplace transformation is the most consequential scenario. Even the conservative National Bureau of Economic Research estimates that roughly 5–6 million American workers sit at the intersection of high AI exposure and low adaptive capacity.

Mustafa Suleyman’s book The Coming Wave frames the defining challenge as the containment problem: maintaining control over technologies that naturally tend to proliferate. He describes the next decade as a nuclear arms race inside a gold rush, warning that AI and synthetic biology together could enable anyone to create novel deadly pathogens. Historically, he notes, true containment of transformative technology barely exists.

The environmental cost nobody wants to talk about

The AI boom carries a physical price that deserves far more attention than it gets.

U.S. data centers consumed 183 terawatt-hours of electricity in 2024 — 4.4% of total U.S. electricity, roughly equivalent to Pakistan’s entire annual demand. The International Energy Agency projects that figure will grow 133% to 426 terawatt-hours by 2030. Goldman Sachs Research estimates that approximately 60% of increased data center electricity demand will be met by burning fossil fuels. Cornell researchers project AI growth will add 24–44 million metric tons of CO2 annually by 2030. A Carnegie Mellon study warns that data centers could increase the average U.S. electricity bill by 8% by 2030, with Virginia — the data center capital — facing increases above 25%.
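For readers who want to see what that projection implies year by year, here is a minimal Python sketch. It takes the two quoted figures (183 terawatt-hours in 2024, 426 by 2030) and assumes smooth compound growth between them, which is a simplification of my own rather than anything the IEA states; the function names are illustrative.

```python
def total_growth_pct(start, end):
    """Overall percentage increase from start to end."""
    return (end / start - 1) * 100


def annual_growth_pct(start, end, years):
    """Constant yearly growth rate that would turn start into end.

    Assumes smooth compounding, which real electricity demand will not follow exactly.
    """
    return ((end / start) ** (1 / years) - 1) * 100


START_TWH, END_TWH = 183, 426  # figures quoted above
YEARS = 2030 - 2024

total = total_growth_pct(START_TWH, END_TWH)
annual = annual_growth_pct(START_TWH, END_TWH, YEARS)
print(f"Total growth: {total:.0f}%")
print(f"Implied annual growth: {annual:.1f}%")
```

Under that assumption, the quoted 133% increase works out to roughly 15% compound growth every year for six years.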

Neuromorphic computing and more efficient model architectures represent one answer. But the trajectory of current investment — $650 billion in tech company capex projected for 2026, a $500 billion Stargate project, data centers consuming as much electricity as entire nations — suggests the industry’s appetite for compute far outpaces its commitment to efficiency.

A decade that demands attention

So here we are. The AI landscape between 2025 and 2035 will not be defined by a single technology but by the convergence of many: agents that act, robots that work, chips that mimic brains, quantum processors that shatter computational barriers, and interfaces that bridge minds and machines. The capital committed exceeds anything in technology history. The timelines predicted by those building these systems are measured in years, not decades.

Three dynamics will determine how this era unfolds.

First, the race between capability and safety: institutions have moved from zero AI safety bodies to a global network in three years, but the pace of deployment vastly outstrips the pace of governance. The EU AI Act is the only comprehensive law in force, and even it includes multi-year phase-in periods.

Second, the concentration of power: a handful of companies and nations control the infrastructure, the chips, the models, and the capital, creating dependencies that dwarf anything in the oil era. CEPR has documented how Big Tech’s vertical integration of the AI stack creates an unprecedented form of industrial dominance.

Third, the distributional question: AI could add $15.7 trillion to global GDP by 2030 (PwC’s estimate), but the IMF warns that 60% of jobs in advanced economies face significant exposure while only 26% do in low-income countries, threatening to widen global inequality dramatically.

The most striking finding across all expert opinion is not the disagreement about timelines — it is the near-universal agreement on stakes. Optimists and pessimists, builders and critics, Silicon Valley executives and Nobel laureates converge on one point: what happens with AI in the next decade will be among the most consequential developments in human history.

The cost of performance at GPT-3.5’s level dropped from $20 per million tokens in November 2022 to $0.07 by October 2024, a reduction of more than 280-fold in just under two years. That acceleration shows no sign of slowing.
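To make that price collapse concrete, the sketch below takes the two quoted data points at face value and asks how often the price would have had to halve to get from one to the other. The steady-halving framing is an assumption made for illustration (real prices fell in uneven steps), and the names are my own.

```python
import math
from datetime import date

# Quoted data points: (month, US dollars per million tokens).
START = (date(2022, 11, 1), 20.00)
END = (date(2024, 10, 1), 0.07)


def months_between(earlier, later):
    """Whole calendar months from one date to the other."""
    return (later.year - earlier.year) * 12 + (later.month - earlier.month)


fold = START[1] / END[1]                     # how many times cheaper
months = months_between(START[0], END[0])    # elapsed calendar months
halving_months = months / math.log2(fold)    # assumes steady exponential decline

print(f"{fold:.0f}-fold cheaper over {months} months")
print(f"Implied halving time: {halving_months:.1f} months")
```

On these figures, the price would have had to halve a little more often than once every three months.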

The question is not whether these technologies will transform society, but whether society will be ready when they do.

One can only dream that the answer is yes. But dreaming isn’t enough. Engagement is.

The HAIA Foundation publishes research and analysis on AI governance, ethics, and societal impact.