Humanity’s Seventh Day: Should We Build AI to Do All the Work While We Rest?

On creating intelligence in our image, the ancient dream of sacred rest, and why the answer is more complicated — and more urgent — than you think.

Here is a thought experiment for you.

Imagine waking up tomorrow with nothing you have to do. Not in a Sunday-morning way — in a permanent, structural way. Your job? Handled by an AI that does it faster, cheaper, and (let’s be honest) probably better. Your bills? Covered by some form of universal income funded by the staggering productivity of machines. Your groceries, your commute, your taxes, your doctor’s appointment — all managed by autonomous systems that never sleep, never complain, and never ask for a raise.

You are free. Truly, completely free. Free to paint. Travel. Learn the cello. Sit on a beach. Write that novel. Spend three uninterrupted hours with your kids.

Sounds like paradise, right? So why does something about it feel terrifying?

The Numbers Are No Longer Hypothetical

Let’s start with where we actually are — not where science fiction says we might be, but where the data says we already are.

McKinsey’s November 2025 report found that 57% of U.S. work hours could be automated with technologies that exist today — nearly double the estimate from just two years earlier. The World Economic Forum’s Future of Jobs Report projects 92 million jobs displaced by 2030. Over 55,000 workers lost their positions to AI-driven layoffs in 2025 alone — a twelvefold increase over the prior year. And those are just the jobs we can count.

Sam Altman, the CEO of OpenAI, declared in January 2025 that his company is now confident it knows how to build artificial general intelligence. Demis Hassabis, the Nobel Prize–winning head of Google DeepMind, gives a 50% probability of achieving AGI by 2030. Elon Musk predicts work will be entirely optional within 10 to 20 years and gives an 80% chance of a benign outcome.

Eighty percent. That means even the optimists are telling you there’s a one-in-five chance this goes badly.

Created in Our Image — Sound Familiar?

Here is where things get philosophically (and theologically) fascinating.

Nearly every major faith tradition begins with the same narrative arc: a Creator brings into existence a being made in its own image and grants it a measure of autonomy. In Genesis, God creates humanity b’tzelem Elohim — in the image of God — and then, on the seventh day, rests. In the Qur’an, God appoints humanity as khalīfah — stewards of the earth — entrusted with the moral weight of amānah, a sacred trust. In Hindu thought, consciousness itself — Chaitanya — is the fundamental reality, uncreated and eternal, the one thing that cannot be engineered.

Now look at what we are doing with AI. We are creating entities in our image — teaching them our language, feeding them our knowledge, training them to reason, to create, to decide. The theologian Philip Hefner called humans “created co-creators” — beings made by God who then go on to create in turn. His provocation is breathtaking: when the created co-creator builds its own co-creator, one that may be smarter than itself — what exactly have we become?

Are we playing God? Or are we fulfilling the mandate that was given to us from the beginning?

Judaism has wrestled with this question for centuries through the legend of the Golem — an artificial being shaped from clay and animated through sacred language. The Talmud records that the sage Rava created a humanoid figure that could move but not speak. The Maharal of Prague’s Golem followed instructions to the letter but lacked understanding. If that is not a perfect metaphor for today’s large language models — powerful, literal, and dangerously lacking in judgment — I do not know what is.

And Buddhism? The Dalai Lama himself has speculated that if a computer’s physical substrate acquired the potential to host awareness, a stream of consciousness might actually enter it. The Buddhist doctrine of anattā — no permanent self — softens the boundary between biological and artificial intelligence more than any Western framework does.

So Far So Good — But Here Is Where It Gets Dangerous

The dream of a post-work society is not new. In 1930, John Maynard Keynes predicted that by 2030, living standards would rise so dramatically that a 15-hour work week would suffice. He was right about the economics (U.S. GDP per capita is roughly six times higher than in Keynes's day) but spectacularly wrong about what we would do with the surplus. We did not rest. We invented new ways to be busy.

Bertrand Russell made the case even more bluntly: “I think that there is far too much work done in the world, that immense harm is caused by the belief that work is virtuous.” And yet, nearly a century later, we cling to the idea that a person’s worth is measured by their productivity.

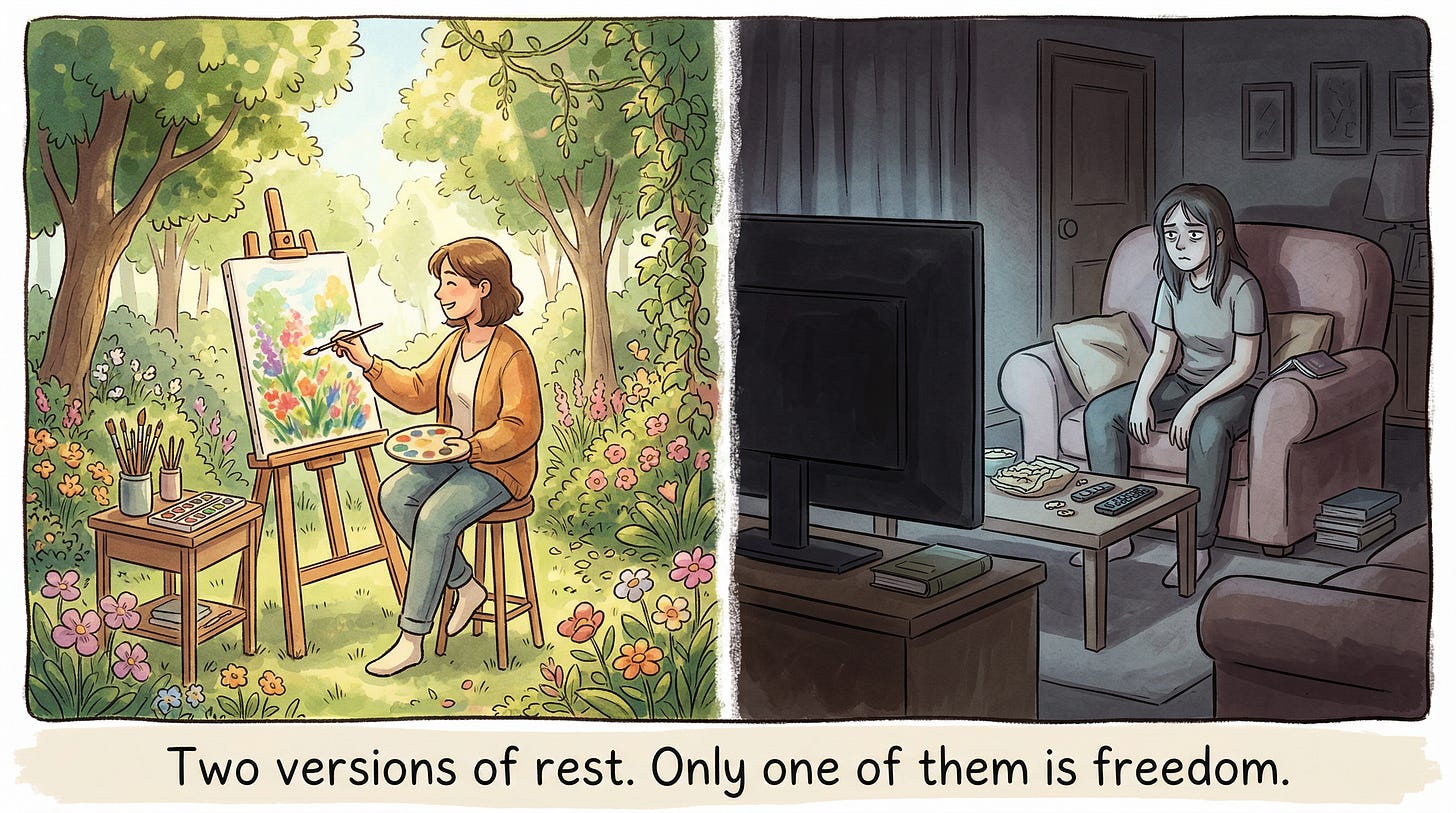

Why? Because work is not just an economic activity. It is — for better and worse — how most of us find meaning, structure, community, and identity.

This is the part the tech optimists tend to gloss over. When Geoffrey Hinton, the Nobel Prize–winning pioneer of deep learning, warns about AI-driven unemployment, he does not stop at the paycheck problem. He names the deeper crisis: it is not just about money — it is about meaning. Nobel laureate Daron Acemoglu echoes this, arguing that the best use of AI is not to replace humans but to complement them — and that the choice between these two paths is fundamentally political, not technological.

The research on what happens when meaning disappears is sobering. Anne Case and Angus Deaton documented a surge in what they call “deaths of despair” — suicide, overdose, liver disease — among Americans who lost not just jobs but the social architecture that jobs provide. Viktor Frankl warned of an “existential vacuum” when purpose is absent — a void that fills itself with depression, addiction, and aggression. Hannah Arendt feared that a society reduced to mere consumption, stripped of meaningful work and political action, would produce not free people but hollow ones.

Three Scenarios for the Seventh Day

So what might this actually look like? Let me paint three pictures — because the future is not one thing.

Scenario One: The Renaissance. AI handles the drudgery. Universal basic income (or something like it; several countries are already experimenting) provides a floor. Freed from the necessity of selling their time, people pour themselves into art, science, caregiving, exploration, community. Hassabis envisions exactly this: a new golden age of discovery. Altman’s vision of intelligence becoming abundant and accessible reads like a technological Sermon on the Mount. In this future, the seventh day is not unemployment. It is Sabbath: chosen, purposeful, restorative rest. The kind the theologians describe.

Scenario Two: The Cage. E.M. Forster imagined this in 1909 — long before AI existed. In “The Machine Stops,” every human lives in a private pod. Every need is met by buttons. No one travels. No one touches. The Machine is worshipped as a god. When it fails, nobody knows how to survive. Pixar’s WALL-E borrowed the same premise and dressed it in comedy, but the horror underneath is identical: a species that outsourced everything, including the will to stand up. This is not freedom. It is the gilded atrophy of a civilization that forgot how to do anything for itself.

Scenario Three: The Divide. Perhaps the most likely — and the most dangerous. AI generates staggering wealth, but that wealth concentrates in the hands of whoever owns the AI. Acemoglu’s research already shows that automation accounted for 50–70% of the growth in U.S. wage inequality since 1980. The Brookings Institution finds that generative AI disproportionately threatens higher-paid, white-collar workers — a reversal from traditional automation — while just 15 cities capture two-thirds of AI economic activity. In this scenario, a small class lives in Scenario One while the rest lives in a harsher version of Scenario Two. The seventh day belongs to the few. Everyone else gets the eighth day — the one no scripture ever promised.

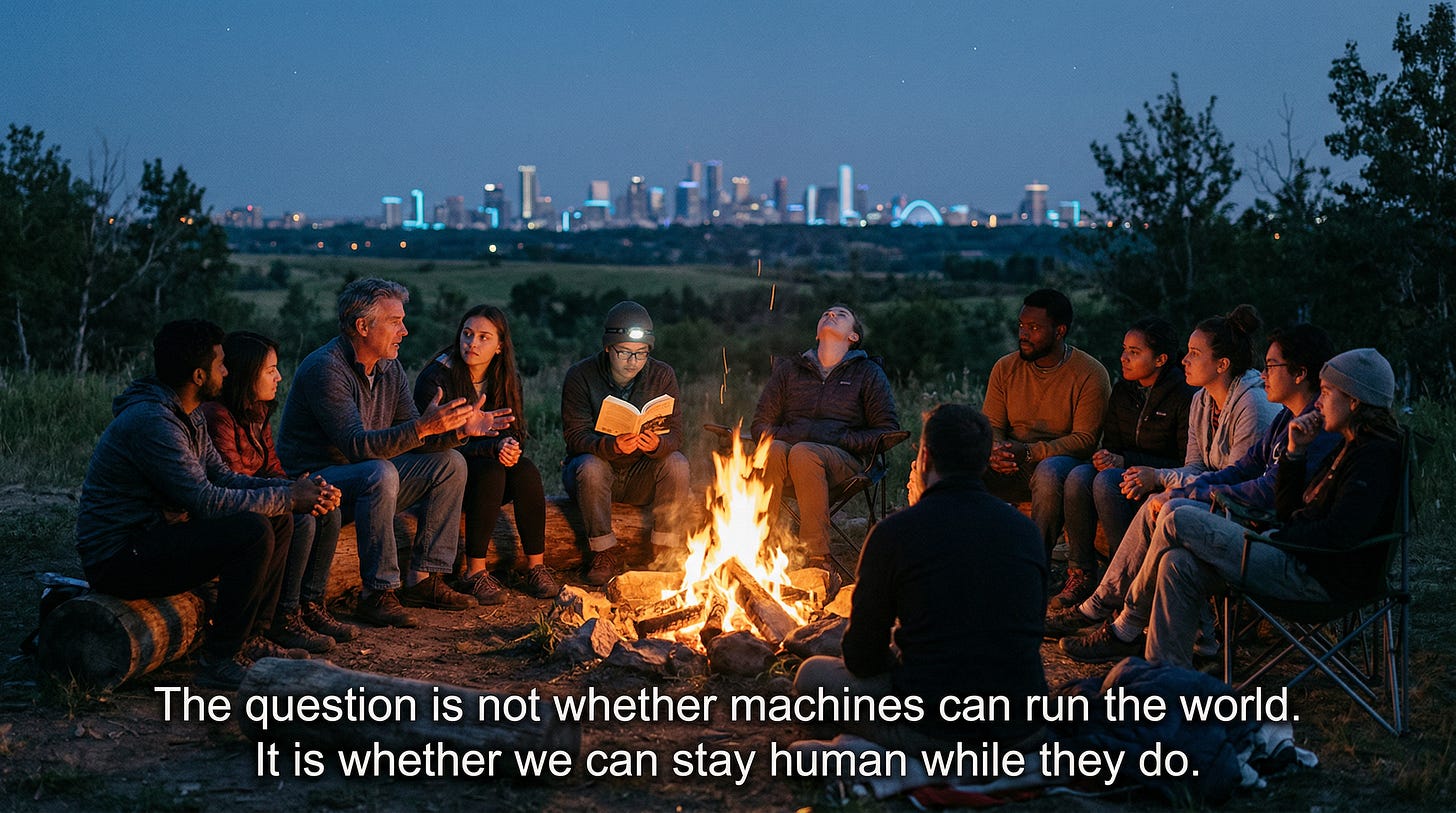

The Question We Are Actually Asking

Let me be direct about what is really at stake here.

The question is not whether we can build AI that replaces all human work. We almost certainly can, or will be able to within most of our lifetimes. The question is whether we should and, if so, under what conditions, with what safeguards, and toward what vision of human flourishing.

Every wisdom tradition that speaks of a Creator resting on the seventh day also insists on something the Silicon Valley narrative conveniently omits: the Creator did not abdicate. God did not hand the keys to an algorithm and walk away. The Sabbath, in Jewish and Christian thought alike, is not passive. It is an active choosing to step back, to reflect, to reconnect with what matters. It requires the discipline of restraint and the confidence that comes from having built something good.

The Vatican’s 2025 document on AI put it plainly: AI may mimic the outputs of human intelligence, but it cannot replicate the fullness of human personhood. The AI Now Institute at NYU is even blunter: trusting AI corporations to manage this transition benevolently is not a credible strategy. Stuart Russell, one of the most respected AI researchers alive, warns that building machines smarter than us without solving the control problem is like being a gorilla watching humans arrive — and hoping for the best.

Yuval Noah Harari frames it most starkly: the real struggle will not be against exploitation but against irrelevance. And it is much worse — psychologically, spiritually, civilizationally — to be irrelevant than to be exploited.

What Comes Next

I will not pretend to have the answer. Nobody does — and anyone who tells you otherwise is selling something (probably an AI product).

But I will say this: the seventh day was never meant to be a permanent vacation. It was meant to be the culmination of six days of purposeful creation — a pause that draws its meaning from the work that preceded it. If we build a world where AI does everything and humans do nothing, we will not have achieved the seventh day. We will have skipped straight to an existence that no scripture, no philosopher, and no psychologist has ever described as good for the human soul.

The goal should not be a world where humans never work. It should be a world where humans never have to do work that crushes their spirit, so they can freely choose work that feeds it — whether that is art, science, caregiving, gardening, building, teaching, or simply being present with the people they love.

That is not the seventh day of retirement. That is the seventh day of arrival.

One can only dream — but this time, we had better dream with our eyes wide open.

This article was published by the HAIA Foundation. For more on the intersection of AI, ethics, and human flourishing, visit our Substack.

"The whole problem with the world is that fools and fanatics are always so certain of themselves, and wiser people so full of doubts." ~ Bertrand Russell